Generate structured data by using the AI.GENERATE_TABLE function

This document shows you how to generate structured data using a Gemini model, and then format the model's response using a SQL schema.

You do this by completing the following tasks:

- Creating a BigQuery ML remote model over any of the generally available or preview Gemini models.

- Using the model with the AI.GENERATE_TABLE function to generate structured data based on data from standard tables.

Required roles

To create a remote model and use the AI.GENERATE_TABLE function, you need the following Identity and Access Management (IAM) roles:

- Create and use BigQuery datasets, tables, and models: BigQuery Data Editor (roles/bigquery.dataEditor) on your project.

- Create, delegate, and use BigQuery connections: BigQuery Connections Admin (roles/bigquery.connectionsAdmin) on your project.

  If you don't have a default connection configured, you can create and set one as part of running the CREATE MODEL statement. To do so, you must have BigQuery Admin (roles/bigquery.admin) on your project. For more information, see Configure the default connection.

- Grant permissions to the connection's service account: Project IAM Admin (roles/resourcemanager.projectIamAdmin) on the project that contains the Vertex AI endpoint. For remote models that you create by specifying the model name as the endpoint, this is the current project. For remote models that you create by specifying a URL as the endpoint, this is the project identified in the URL.

- Create BigQuery jobs: BigQuery Job User (roles/bigquery.jobUser) on your project.

These predefined roles contain the permissions required to perform the tasks in this document. The exact permissions that are required are listed in the following section.

Required permissions

- Create a dataset: bigquery.datasets.create

- Create, delegate, and use a connection: bigquery.connections.*

- Set service account permissions: resourcemanager.projects.getIamPolicy and resourcemanager.projects.setIamPolicy

- Create a model and run inference:

  - bigquery.jobs.create
  - bigquery.models.create
  - bigquery.models.getData
  - bigquery.models.updateData
  - bigquery.models.updateMetadata

- Query table data: bigquery.tables.getData

You might also be able to get these permissions with custom roles or other predefined roles.

Before you begin

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role; you can select any project that you've been granted a role on.

  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.

- Enable the BigQuery, BigQuery Connection, and Vertex AI APIs.

  Roles required to enable APIs

  To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.
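If you prefer the command line, the three APIs can also be enabled with the gcloud services enable command. The following is a minimal Python sketch, assuming the gcloud CLI is installed and authenticated as a principal that has the Service Usage Admin role. The helper names enable_apis_command and enable_apis are hypothetical; the service names are the standard API identifiers for BigQuery, BigQuery Connection, and Vertex AI.

```python
import subprocess
from typing import List

# Standard service names for the APIs that this document requires.
REQUIRED_APIS = [
    "bigquery.googleapis.com",            # BigQuery API
    "bigqueryconnection.googleapis.com",  # BigQuery Connection API
    "aiplatform.googleapis.com",          # Vertex AI API
]


def enable_apis_command(project_id: str) -> List[str]:
    """Build one gcloud command that enables all required APIs in project_id."""
    return ["gcloud", "services", "enable", *REQUIRED_APIS, f"--project={project_id}"]


def enable_apis(project_id: str) -> None:
    """Run the command; requires gcloud and the Service Usage Admin role."""
    subprocess.run(enable_apis_command(project_id), check=True)


if __name__ == "__main__":
    # Print the command for review instead of running it.
    print(" ".join(enable_apis_command("PROJECT_ID")))
```

Building an argument list rather than a shell string means no quoting is needed when you pass it to subprocess.run.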

Create a dataset

Create a BigQuery dataset to contain your resources:

Console

In the Google Cloud console, go to the BigQuery page.

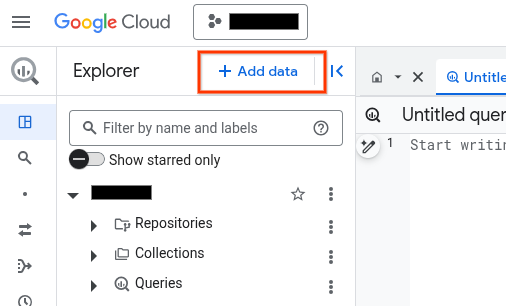

In the left pane, click Explorer.

If you don't see the left pane, click Expand left pane to open the pane.

In the Explorer pane, click your project name.

Click View actions > Create dataset.

On the Create dataset page, do the following:

For Dataset ID, type a name for the dataset.

For Location type, select Region or Multi-region.

- If you selected Region, then select a location from the Region list.

- If you selected Multi-region, then select US or Europe from the Multi-region list.

Click Create dataset.

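bq

In a command-line environment, create the dataset by using the bq mk command:

bq --location=LOCATION mk --dataset PROJECT_ID:DATASET_ID

Replace the following:

- LOCATION: the dataset's location, for example US
- PROJECT_ID: your Google Cloud project ID
- DATASET_ID: a name for the dataset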
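BigQuery dataset IDs can contain only letters, numbers, and underscores, and can be up to 1,024 characters long. The following minimal Python sketch checks an ID against that rule before assembling a bq mk --dataset command. The helper names are hypothetical, and the sketch only builds the argument list without running it.

```python
import re
from typing import List

# Dataset IDs: letters, digits, and underscores only, 1 to 1,024 characters.
_DATASET_ID_RE = re.compile(r"[A-Za-z0-9_]{1,1024}")


def is_valid_dataset_id(dataset_id: str) -> bool:
    """Return True if dataset_id follows the naming rule above."""
    return _DATASET_ID_RE.fullmatch(dataset_id) is not None


def create_dataset_command(project_id: str, dataset_id: str, location: str) -> List[str]:
    """Build a bq command that creates dataset_id in location (for example, US)."""
    if not is_valid_dataset_id(dataset_id):
        raise ValueError(f"invalid dataset ID: {dataset_id!r}")
    # --location is a global bq flag, so it precedes the mk subcommand.
    return ["bq", f"--location={location}", "mk", "--dataset", f"{project_id}:{dataset_id}"]


print(create_dataset_command("myproject", "mydataset", "US"))
```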

Create a connection

You can skip this step if you have either a default connection configured or the BigQuery Admin role.

Create a Cloud resource connection for the remote model to use, and get the connection's service account. Create the connection in the same location as the dataset that you created in the previous step.

Select one of the following options:

Console

Go to the BigQuery page.

In the Explorer pane, click Add data.

The Add data dialog opens.

In the Filter By pane, in the Data Source Type section, select Business Applications.

Alternatively, in the Search for data sources field, you can enter Vertex AI.

In the Featured data sources section, click Vertex AI.

Click the Vertex AI Models: BigQuery Federation solution card.

In the Connection type list, select Vertex AI remote models, remote functions, BigLake and Spanner (Cloud Resource).

In the Connection ID field, enter a name for your connection.

Click Create connection.

Click Go to connection.

In the Connection info pane, copy the service account ID for use in a later step.

bq

In a command-line environment, create a connection:

bq mk --connection --location=REGION --project_id=PROJECT_ID \
    --connection_type=CLOUD_RESOURCE CONNECTION_ID

The --project_id parameter overrides the default project.

Replace the following:

- REGION: your connection region
- PROJECT_ID: your Google Cloud project ID
- CONNECTION_ID: an ID for your connection
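If you script this step, it can help to build the command as an argument list rather than a single shell string, so the line-continuation backslash is not needed. This is a minimal Python sketch; create_connection_command and connection_path are hypothetical helper names, and the flags are exactly the ones in the bq mk command above.

```python
import shlex
from typing import List


def create_connection_command(region: str, project_id: str, connection_id: str) -> List[str]:
    """Build the bq mk command for a Cloud resource connection as an argument list."""
    return [
        "bq", "mk", "--connection",
        f"--location={region}",
        f"--project_id={project_id}",  # overrides the default project
        "--connection_type=CLOUD_RESOURCE",
        connection_id,
    ]


def connection_path(project_id: str, region: str, connection_id: str) -> str:
    """Return PROJECT_ID.REGION.CONNECTION_ID, the form that bq show --connection takes."""
    return f"{project_id}.{region}.{connection_id}"


if __name__ == "__main__":
    cmd = create_connection_command("us", "myproject", "myconnection")
    # shlex.join quotes any argument that would need quoting in a POSIX shell.
    print(shlex.join(cmd))
    print("bq show --connection " + connection_path("myproject", "us", "myconnection"))
```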

When you create a connection resource, BigQuery creates a unique system service account and associates it with the connection.

Troubleshooting: If you get the following connection error, update the Google Cloud SDK:

Flags parsing error: flag --connection_type=CLOUD_RESOURCE: value should be one of...

Retrieve and copy the service account ID for use in a later step:

bq show --connection PROJECT_ID.REGION.CONNECTION_ID

The output is similar to the following:

name                        properties
1234.REGION.CONNECTION_ID   {"serviceAccountId": "connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com"}
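The service account ID is the value of serviceAccountId in the properties column. Assuming you have copied that JSON object from the output, the following minimal Python sketch extracts the ID. It does not parse the full table layout that bq show prints, and the helper name is hypothetical.

```python
import json


def service_account_from_properties(properties: str) -> str:
    """Return serviceAccountId from the properties JSON shown by bq show --connection."""
    data = json.loads(properties)
    if "serviceAccountId" not in data:
        raise ValueError("properties JSON has no serviceAccountId field")
    return data["serviceAccountId"]


example = (
    '{"serviceAccountId": '
    '"connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com"}'
)
print(service_account_from_properties(example))
```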

Terraform

Use the google_bigquery_connection resource.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

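The following example creates a Cloud resource connection named my_cloud_resource_connection in the US region:

resource "google_bigquery_connection" "default" {
  connection_id = "my_cloud_resource_connection"
  location      = "US"
  cloud_resource {}
}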

To apply your Terraform configuration in a Google Cloud project, complete the steps in the following sections.

Prepare Cloud Shell

- Launch Cloud Shell.

- Set the default Google Cloud project where you want to apply your Terraform configurations.

  You only need to run this command once per project, and you can run it in any directory.

  export GOOGLE_CLOUD_PROJECT=PROJECT_ID

  Environment variables are overridden if you set explicit values in the Terraform configuration file.

Prepare the directory

Each Terraform configuration file must have its own directory (also called a root module).

- In Cloud Shell, create a directory and a new file within that directory. The filename must have the .tf extension, for example main.tf. In this tutorial, the file is referred to as main.tf.

  mkdir DIRECTORY && cd DIRECTORY && touch main.tf

- If you are following a tutorial, you can copy the sample code in each section or step.

  Copy the sample code into the newly created main.tf.

  Optionally, copy the code from GitHub. This is recommended when the Terraform snippet is part of an end-to-end solution.

- Review and modify the sample parameters to apply to your environment.

- Save your changes.

- Initialize Terraform. You only need to do this once per directory.

  terraform init

  Optionally, to use the latest Google provider version, include the -upgrade option:

  terraform init -upgrade

Apply the changes

- Review the configuration and verify that the resources that Terraform is going to create or update match your expectations:

  terraform plan

  Make corrections to the configuration as necessary.

- Apply the Terraform configuration by running the following command and entering yes at the prompt:

  terraform apply

  Wait until Terraform displays the "Apply complete!" message.

- Open your Google Cloud project to view the results. In the Google Cloud console, navigate to your resources in the UI to make sure that Terraform has created or updated them.

Give the service account access

Grant the connection's service account the Vertex AI User role.

If you plan to specify the endpoint as a URL when you create the remote model, for example endpoint = 'https://us-central1-aiplatform.googleapis.com/v1/projects/myproject/locations/us-central1/publishers/google/models/gemini-2.5-flash', grant this role in the same project you specify in the URL.

If you plan to specify the endpoint by using the model name when you create the remote model, for example endpoint = 'gemini-2.5-flash', grant this role in the same project where you plan to create the remote model.

Granting the role in a different project results in the error bqcx-1234567890-wxyz@gcp-sa-bigquery-condel.iam.gserviceaccount.com does not have the permission to access resource.
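The rule for choosing the project can be expressed as a small function. This is a minimal Python sketch, assuming an endpoint URL follows the .../projects/PROJECT/locations/... path shape shown in the example; the function name is hypothetical.

```python
from urllib.parse import urlparse


def project_for_vertex_ai_user_role(endpoint: str, model_project: str) -> str:
    """Return the project in which to grant Vertex AI User to the connection's service account.

    URL endpoint: the project named in the URL path (.../projects/PROJECT/...).
    Model-name endpoint, such as 'gemini-2.5-flash': model_project, the project
    where you create the remote model.
    """
    if endpoint.startswith("https://"):
        segments = urlparse(endpoint).path.strip("/").split("/")
        if "projects" in segments:
            i = segments.index("projects")
            if i + 1 < len(segments) and segments[i + 1]:
                return segments[i + 1]
        raise ValueError(f"no project in endpoint URL: {endpoint}")
    return model_project


url = ("https://us-central1-aiplatform.googleapis.com/v1/projects/myproject/"
       "locations/us-central1/publishers/google/models/gemini-2.5-flash")
print(project_for_vertex_ai_user_role(url, "otherproject"))                 # myproject
print(project_for_vertex_ai_user_role("gemini-2.5-flash", "otherproject"))  # otherproject
```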

To grant the role, follow these steps:

Console

Go to the IAM & Admin page.

Click Add.

The Add principals dialog opens.

In the New principals field, enter the service account ID that you copied earlier.

In the Select a role field, select Vertex AI, and then select Vertex AI User.

Click Save.

gcloud

Use the gcloud projects add-iam-policy-binding command.

gcloud projects add-iam-policy-binding 'PROJECT_NUMBER' --member='serviceAccount:MEMBER' --role='roles/aiplatform.user' --condition=None

Replace the following:

- PROJECT_NUMBER: your project number
- MEMBER: the service account ID that you copied earlier
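The same gcloud call can be assembled as an argument list, which avoids shell quoting of the member string. This minimal Python sketch uses a hypothetical helper name and passes exactly the flags shown in the command above; it builds the list but does not run it. The project number in the usage line is a placeholder.

```python
from typing import List


def grant_vertex_ai_user_command(project_number: str, service_account_id: str) -> List[str]:
    """Build the gcloud command that grants roles/aiplatform.user to the connection's service account."""
    return [
        "gcloud", "projects", "add-iam-policy-binding", project_number,
        f"--member=serviceAccount:{service_account_id}",
        "--role=roles/aiplatform.user",
        "--condition=None",  # add the binding without an IAM condition
    ]


cmd = grant_vertex_ai_user_command(
    "123456789012",  # placeholder project number
    "connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com",
)
print(cmd)
```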

Create a BigQuery ML remote model

In the Google Cloud console, go to the BigQuery page.

Using the SQL editor, create a remote model:

CREATE OR REPLACE MODEL `PROJECT_ID.DATASET_ID.MODEL_NAME`
  REMOTE WITH CONNECTION {DEFAULT | `PROJECT_ID.REGION.CONNECTION_ID`}
  OPTIONS (ENDPOINT = 'ENDPOINT');

Replace the following:

- PROJECT_ID: your project ID
- DATASET_ID: the ID of the dataset to contain the model. This dataset must be in the same location as the connection that you are using.
- MODEL_NAME: the name of the model
- REGION: the region used by the connection
- CONNECTION_ID: the ID of your BigQuery connection

  When you view the connection details in the Google Cloud console, this is the value in the last section of the fully qualified connection ID that is shown in Connection ID. For example, in projects/myproject/locations/connection_location/connections/myconnection, the connection ID is myconnection.
- ENDPOINT: the name of the Gemini model to use. For supported Gemini models, you can specify the global endpoint to improve availability. For more information, see ENDPOINT.
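If you create several remote models, a small template function can keep the statement consistent. The following minimal Python sketch renders the CREATE OR REPLACE MODEL statement, using DEFAULT when no connection is given. The function name is hypothetical, and the sketch assumes identifiers and endpoint names that need no further quoting or escaping.

```python
from typing import Optional


def create_model_sql(
    project_id: str,
    dataset_id: str,
    model_name: str,
    endpoint: str,
    region: Optional[str] = None,
    connection_id: Optional[str] = None,
) -> str:
    """Render CREATE OR REPLACE MODEL for a Gemini remote model.

    Without region and connection_id, the statement uses DEFAULT, which needs a
    configured default connection, or BigQuery Admin so that one can be created.
    """
    if (region is None) != (connection_id is None):
        raise ValueError("pass both region and connection_id, or neither")
    connection = (
        "DEFAULT" if connection_id is None
        else f"`{project_id}.{region}.{connection_id}`"
    )
    return (
        f"CREATE OR REPLACE MODEL `{project_id}.{dataset_id}.{model_name}`\n"
        f"  REMOTE WITH CONNECTION {connection}\n"
        f"  OPTIONS (ENDPOINT = '{endpoint}');"
    )


print(create_model_sql("myproject", "mydataset", "gemini_model", "gemini-2.5-flash"))
```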

Generate structured data

Generate structured data by using the AI.GENERATE_TABLE function with a remote model and prompt data from a table column or a query:

SELECT *
FROM AI.GENERATE_TABLE(
  MODEL `PROJECT_ID.DATASET_ID.MODEL_NAME`,
  {TABLE `PROJECT_ID.DATASET_ID.TABLE_NAME` | (PROMPT_QUERY)},
  STRUCT(
    TOKENS AS max_output_tokens,
    TEMPERATURE AS temperature,
    TOP_P AS top_p,
    STOP_SEQUENCES AS stop_sequences,
    SAFETY_SETTINGS AS safety_settings,
    OUTPUT_SCHEMA AS output_schema)
);

Replace the following:

- PROJECT_ID: your project ID.
- DATASET_ID: the ID of the dataset that contains the model.
- MODEL_NAME: the name of the model.
- TABLE_NAME: the name of the table that contains the prompt. This table must have a column named prompt, or you can use an alias to use a differently named column.
- PROMPT_QUERY: the GoogleSQL query that generates the prompt data. The prompt value itself can be pulled from a column, or you can specify it as a struct value with an arbitrary number of string and column name subfields. For example, SELECT ('Analyze the sentiment in ', feedback_column, ' using the following categories: positive, negative, neutral') AS prompt.
- TOKENS: an INT64 value that sets the maximum number of tokens that can be generated in the response. This value must be in the range [1,8192]. Specify a lower value for shorter responses and a higher value for longer responses. The default is 128.
- TEMPERATURE: a FLOAT64 value in the range [0.0,2.0] that controls the degree of randomness in token selection. The default is 1.0.

  Lower values for temperature are good for prompts that require a more deterministic and less open-ended or creative response, while higher values for temperature can lead to more diverse or creative results. A value of 0 for temperature is deterministic, meaning that the highest probability response is always selected.
- TOP_P: a FLOAT64 value in the range [0.0,1.0] that helps determine the probability of the tokens selected. Specify a lower value for less random responses and a higher value for more random responses. The default is 0.95.
- STOP_SEQUENCES: an ARRAY<STRING> value that removes the specified strings if they are included in responses from the model. Strings are matched exactly, including capitalization. The default is an empty array.
- SAFETY_SETTINGS: an ARRAY<STRUCT<STRING AS category, STRING AS threshold>> value that configures content safety thresholds to filter responses. The first element in the struct specifies a harm category, and the second element specifies a corresponding blocking threshold. The model filters out content that violates these settings. You can only specify each category once. For example, you can't specify both STRUCT('HARM_CATEGORY_DANGEROUS_CONTENT' AS category, 'BLOCK_MEDIUM_AND_ABOVE' AS threshold) and STRUCT('HARM_CATEGORY_DANGEROUS_CONTENT' AS category, 'BLOCK_ONLY_HIGH' AS threshold). If there is no safety setting for a given category, the BLOCK_MEDIUM_AND_ABOVE safety setting is used.

  Supported categories are as follows:

  - HARM_CATEGORY_HATE_SPEECH
  - HARM_CATEGORY_DANGEROUS_CONTENT
  - HARM_CATEGORY_HARASSMENT
  - HARM_CATEGORY_SEXUALLY_EXPLICIT

  Supported thresholds are as follows:

  - BLOCK_NONE (Restricted)
  - BLOCK_LOW_AND_ABOVE
  - BLOCK_MEDIUM_AND_ABOVE (Default)
  - BLOCK_ONLY_HIGH
  - HARM_BLOCK_THRESHOLD_UNSPECIFIED

  For more information, see Harm categories and How to configure content filters.
- OUTPUT_SCHEMA: a STRING value that specifies the format for the model's response. The output_schema value must be a SQL schema definition, similar to that used in the CREATE TABLE statement. The following data types are supported:

  - INT64
  - FLOAT64
  - BOOL
  - STRING
  - ARRAY
  - STRUCT

When you use the output_schema argument to generate structured data based on prompts from a table, it's important to understand the prompt data so that you can specify an appropriate schema.

For example, say you are analyzing movie review content from a table that has the following fields:

- movie_id
- review
- prompt

Then you might create prompt text by running a query similar to the following:

UPDATE mydataset.movie_review
SET prompt = CONCAT('Extract the key words and key sentiment from the text below: ', review)
WHERE review IS NOT NULL;

And you might specify an output_schema value similar to "keywords ARRAY<STRING>, sentiment STRING" AS output_schema.
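One way to keep the output_schema string in sync with downstream code is to generate it from a nested description of the fields. The following minimal Python sketch is a hypothetical helper, not part of BigQuery: a string is a scalar type, a one-element list is an ARRAY, and a dict is a STRUCT. It accepts only the data types listed for OUTPUT_SCHEMA and rejects arrays of arrays, which GoogleSQL doesn't allow.

```python
from typing import Dict, List, Union

# A field type: a scalar name, [element_type] for ARRAY, or {name: type} for STRUCT.
FieldType = Union[str, List["FieldType"], Dict[str, "FieldType"]]

SCALAR_TYPES = {"INT64", "FLOAT64", "BOOL", "STRING"}


def render_type(t: FieldType) -> str:
    """Render one field type as GoogleSQL type syntax."""
    if isinstance(t, str):
        if t not in SCALAR_TYPES:
            raise ValueError(f"unsupported type: {t}")
        return t
    if isinstance(t, list):
        if len(t) != 1:
            raise ValueError("an ARRAY needs exactly one element type")
        if isinstance(t[0], list):
            raise ValueError("GoogleSQL does not allow an ARRAY of ARRAYs")
        return f"ARRAY<{render_type(t[0])}>"
    if isinstance(t, dict):
        if not t:
            raise ValueError("a STRUCT needs at least one field")
        return f"STRUCT<{render_fields(t)}>"
    raise TypeError(f"cannot render {t!r}")


def render_fields(fields: Dict[str, FieldType]) -> str:
    """Render top-level fields as 'name TYPE, name TYPE, ...'."""
    return ", ".join(f"{name} {render_type(t)}" for name, t in fields.items())


print(render_fields({"keywords": ["STRING"], "sentiment": "STRING"}))
```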

Examples

The following example shows a request that takes prompt data from a table and provides a SQL schema to format the model's response:

SELECT *
FROM AI.GENERATE_TABLE(
  MODEL `mydataset.gemini_model`,
  TABLE `mydataset.mytable`,
  STRUCT("keywords ARRAY<STRING>, sentiment STRING" AS output_schema));

The following example shows a request that takes prompt data from a query and provides a SQL schema to format the model's response:

SELECT *
FROM AI.GENERATE_TABLE(
  MODEL `mydataset.gemini_model`,
  (
    SELECT
      'John Smith is a 20-year old single man living at 1234 NW 45th St, Kirkland WA, 98033. He has two phone numbers 123-123-1234, and 234-234-2345. He is 200.5 pounds.'
        AS prompt
  ),
  STRUCT(
    "address STRUCT<street_address STRING, city STRING, state STRING, zip_code STRING>, age INT64, is_married BOOL, name STRING, phone_number ARRAY<STRING>, weight_in_pounds FLOAT64"
      AS output_schema,
    8192 AS max_output_tokens));
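The parameter ranges and the one-setting-per-category rule described earlier can be checked before a query is sent. The following minimal Python sketch renders the STRUCT argument of AI.GENERATE_TABLE and raises ValueError for out-of-range or duplicate values. The function name is hypothetical, and unlike the examples above it always writes max_output_tokens, temperature, and top_p explicitly, using the documented defaults when you don't pass them.

```python
from typing import List, Optional, Tuple

HARM_CATEGORIES = {
    "HARM_CATEGORY_HATE_SPEECH",
    "HARM_CATEGORY_DANGEROUS_CONTENT",
    "HARM_CATEGORY_HARASSMENT",
    "HARM_CATEGORY_SEXUALLY_EXPLICIT",
}
THRESHOLDS = {
    "BLOCK_NONE",
    "BLOCK_LOW_AND_ABOVE",
    "BLOCK_MEDIUM_AND_ABOVE",
    "BLOCK_ONLY_HIGH",
    "HARM_BLOCK_THRESHOLD_UNSPECIFIED",
}


def sql_string(s: str) -> str:
    """Quote s as a double-quoted GoogleSQL string literal."""
    return '"' + s.replace("\\", "\\\\").replace('"', '\\"') + '"'


def generate_table_options(
    output_schema: str,
    max_output_tokens: int = 128,
    temperature: float = 1.0,
    top_p: float = 0.95,
    stop_sequences: Optional[List[str]] = None,
    safety_settings: Optional[List[Tuple[str, str]]] = None,
) -> str:
    """Render the STRUCT argument of AI.GENERATE_TABLE after range checks."""
    if not 1 <= max_output_tokens <= 8192:
        raise ValueError("max_output_tokens must be in [1, 8192]")
    if not 0.0 <= temperature <= 2.0:
        raise ValueError("temperature must be in [0.0, 2.0]")
    if not 0.0 <= top_p <= 1.0:
        raise ValueError("top_p must be in [0.0, 1.0]")
    parts = [
        f"{int(max_output_tokens)} AS max_output_tokens",
        f"{float(temperature)} AS temperature",  # float() keeps FLOAT64 literals, e.g. 0.0
        f"{float(top_p)} AS top_p",
    ]
    if stop_sequences:
        parts.append("[" + ", ".join(map(sql_string, stop_sequences)) + "] AS stop_sequences")
    if safety_settings:
        seen = set()
        structs = []
        for category, threshold in safety_settings:
            if category not in HARM_CATEGORIES or threshold not in THRESHOLDS:
                raise ValueError(f"unsupported safety setting: {category}, {threshold}")
            if category in seen:  # each category may appear only once
                raise ValueError(f"duplicate category: {category}")
            seen.add(category)
            structs.append(f"STRUCT('{category}' AS category, '{threshold}' AS threshold)")
        parts.append("[" + ", ".join(structs) + "] AS safety_settings")
    parts.append(f"{sql_string(output_schema)} AS output_schema")
    return "STRUCT(" + ", ".join(parts) + ")"


print(generate_table_options("keywords ARRAY<STRING>, sentiment STRING", max_output_tokens=8192))
```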