This page shows you how to enable Cloud Trace for your agent and how to view traces to analyze query response times and the operations that were executed.

Trace

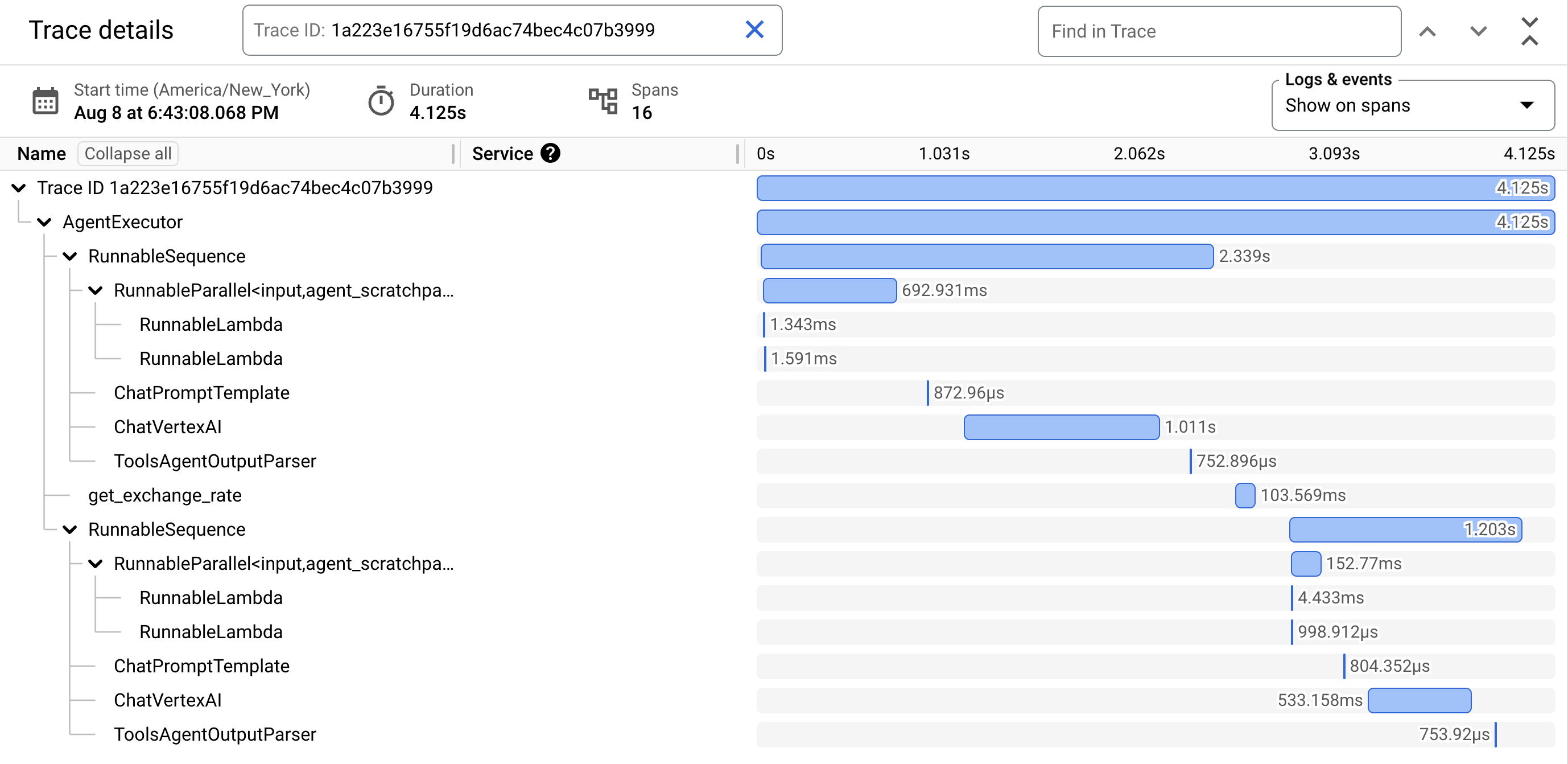

adalah linimasa permintaan saat agen Anda merespons setiap kueri. Misalnya, diagram Gantt berikut menunjukkan contoh rekaman aktivitas dari LangchainAgent:

The first row in the Gantt chart is for the trace. A trace is composed of individual spans, each representing a single unit of work, such as a function call or an interaction with an LLM. The first span represents the overall request. Each span provides details about a specific operation within the request, such as the operation's name, its start and end times, and any relevant attributes. For example, the following JSON shows a single span that represents a call to a large language model (LLM):

{
  "name": "llm",
  "context": {
    "trace_id": "ed7b336d-e71a-46f0-a334-5f2e87cb6cfc",
    "span_id": "ad67332a-38bd-428e-9f62-538ba2fa90d4"
  },
  "span_kind": "LLM",
  "parent_id": "f89ebb7c-10f6-4bf8-8a74-57324d2556ef",
  "start_time": "2023-09-07T12:54:47.597121-06:00",
  "end_time": "2023-09-07T12:54:49.321811-06:00",
  "status_code": "OK",
  "status_message": "",
  "attributes": {
    "llm.input_messages": [
      {
        "message.role": "system",
        "message.content": "You are an expert Q&A system that is trusted around the world.\nAlways answer the query using the provided context information, and not prior knowledge.\nSome rules to follow:\n1. Never directly reference the given context in your answer.\n2. Avoid statements like 'Based on the context, ...' or 'The context information ...' or anything along those lines."
      },
      {
        "message.role": "user",
        "message.content": "Hello?"
      }
    ],
    "output.value": "assistant: Yes I am here",
    "output.mime_type": "text/plain"
  },
  "events": []
}
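The span fields can be made concrete with a short Python sketch. It parses an abbreviated copy of the example span and computes the span's duration from start_time and end_time. It uses only the standard library. The helper name span_duration_seconds is hypothetical and is not part of any Google Cloud API.

```python
import json
from datetime import datetime

# The example span, abbreviated to the fields this sketch uses.
SPAN_JSON = """
{
  "name": "llm",
  "context": {
    "trace_id": "ed7b336d-e71a-46f0-a334-5f2e87cb6cfc",
    "span_id": "ad67332a-38bd-428e-9f62-538ba2fa90d4"
  },
  "span_kind": "LLM",
  "parent_id": "f89ebb7c-10f6-4bf8-8a74-57324d2556ef",
  "start_time": "2023-09-07T12:54:47.597121-06:00",
  "end_time": "2023-09-07T12:54:49.321811-06:00",
  "status_code": "OK"
}
"""


def span_duration_seconds(span: dict) -> float:
    """Return the wall-clock duration of a span in seconds (hypothetical helper)."""
    # fromisoformat accepts the microseconds and the -06:00 UTC offset used here.
    start = datetime.fromisoformat(span["start_time"])
    end = datetime.fromisoformat(span["end_time"])
    return (end - start).total_seconds()


span = json.loads(SPAN_JSON)
duration = span_duration_seconds(span)
print(f"{span['name']} ({span['span_kind']}): {duration:.3f} s, status {span['status_code']}")
```

This span has a parent_id, so it is a child of another span in the same trace. It is not the span that represents the overall request.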

For details, see the Cloud Trace documentation about Traces and spans and Trace context.
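Every span other than the first one points to its parent through parent_id. The spans of one trace therefore form a tree, and the Gantt chart lays that tree out against time. The sketch below rebuilds the tree from spans that are assumed to be already exported as simple records. It then computes each span's offset from the start of the request and its duration. The Span class and all span IDs, names, and timings are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List, Optional, Tuple


@dataclass
class Span:
    """Hypothetical, simplified span record (not a Cloud Trace type)."""
    span_id: str
    parent_id: Optional[str]  # None marks the root span for the whole request.
    name: str
    start_time: str
    end_time: str


# Illustrative spans from a single trace.
SPANS = [
    Span("a", None, "agent.query", "2023-09-07T12:54:47.500000-06:00", "2023-09-07T12:54:50.000000-06:00"),
    Span("b", "a", "llm", "2023-09-07T12:54:47.600000-06:00", "2023-09-07T12:54:49.300000-06:00"),
    Span("c", "a", "tool.get_exchange_rate", "2023-09-07T12:54:49.400000-06:00", "2023-09-07T12:54:49.900000-06:00"),
]


def timeline(spans: List[Span]) -> List[Tuple[int, str, float, float]]:
    """Return (depth, name, offset_s, duration_s) rows, each parent before its children."""
    children: Dict[Optional[str], List[Span]] = {}
    for s in spans:
        children.setdefault(s.parent_id, []).append(s)
    roots = children.get(None, [])
    if len(roots) != 1:
        raise ValueError("expected exactly one root span")
    t0 = datetime.fromisoformat(roots[0].start_time)
    rows: List[Tuple[int, str, float, float]] = []

    def visit(span: Span, depth: int) -> None:
        start = datetime.fromisoformat(span.start_time)
        end = datetime.fromisoformat(span.end_time)
        rows.append((depth, span.name, (start - t0).total_seconds(), (end - start).total_seconds()))
        # Visit children in chronological order, like rows in a Gantt chart.
        for child in sorted(children.get(span.span_id, []), key=lambda c: datetime.fromisoformat(c.start_time)):
            visit(child, depth + 1)

    visit(roots[0], 0)
    return rows


for depth, name, offset, dur in timeline(SPANS):
    print(f"{'  ' * depth}{name:<26} +{offset:.1f}s  {dur:.1f}s")
```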

Write traces for an agent

To write traces for an agent:

ADK

To enable tracing for AdkApp, specify enable_tracing=True when you develop an Agent Development Kit agent.

For example:

from vertexai.agent_engines import AdkApp
from google.adk.agents import Agent

agent = Agent(
    model=model,
    name=agent_name,
    tools=[get_exchange_rate],
)

app = AdkApp(
    agent=agent,  # Required.
    enable_tracing=True,  # Optional.
)

LangchainAgent

To enable tracing for LangchainAgent, specify enable_tracing=True when you develop a LangChain agent.

For example:

from vertexai.agent_engines import LangchainAgent

agent = LangchainAgent(
    model=model,  # Required.
    tools=[get_exchange_rate],  # Optional.
    enable_tracing=True,  # [New] Optional.
)

LanggraphAgent

To enable tracing for LanggraphAgent, specify enable_tracing=True when you develop a LangGraph agent.

For example:

from vertexai.agent_engines import LanggraphAgent

agent = LanggraphAgent(
    model=model,  # Required.
    tools=[get_exchange_rate],  # Optional.
    enable_tracing=True,  # [New] Optional.
)

LlamaIndex

To enable tracing for LlamaIndexQueryPipelineAgent, specify enable_tracing=True when you develop a LlamaIndex agent.

For example:

from vertexai.preview import reasoning_engines

def runnable_with_tools_builder(model, runnable_kwargs=None, **kwargs):
    from llama_index.core.query_pipeline import QueryPipeline
    from llama_index.core.tools import FunctionTool
    from llama_index.core.agent import ReActAgent

    # Guard against the default runnable_kwargs=None and a missing "tools" key.
    runnable_kwargs = runnable_kwargs or {}

    # Wrap each plain Python function as a LlamaIndex tool.
    llama_index_tools = []
    for tool in runnable_kwargs.get("tools", []):
        llama_index_tools.append(FunctionTool.from_defaults(tool))

    agent = ReActAgent.from_tools(llama_index_tools, llm=model, verbose=True)
    return QueryPipeline(modules={"agent": agent})

agent = reasoning_engines.LlamaIndexQueryPipelineAgent(
    model="gemini-2.0-flash",
    runnable_kwargs={"tools": [get_exchange_rate]},
    runnable_builder=runnable_with_tools_builder,
    enable_tracing=True,  # Optional.
)

Custom

To enable tracing for a custom agent, see Tracing using OpenTelemetry for details.

Enabling tracing exports traces to Cloud Trace in the project that you configured in Set up your Google Cloud project.

View traces for an agent

You can view traces by using Trace Explorer:

To get the permissions that you need to view trace data in the Google Cloud console or to select a trace scope, ask your administrator to grant you the Cloud Trace User (roles/cloudtrace.user) IAM role on your project.

Go to Trace Explorer in the Google Cloud console.

Select your Google Cloud project (corresponding to PROJECT_ID) at the top of the page.

To learn more, see the Cloud Trace documentation.

Quotas and limits

Some attribute values might be truncated when they reach quota limits. For more information, see Cloud Trace quotas.
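If you attach long values such as full prompts or model outputs as span attributes, you may prefer to trim them yourself so that you control what is kept. A minimal standard-library sketch follows. MAX_ATTRIBUTE_BYTES is a placeholder chosen for illustration, not the actual Cloud Trace limit; take the real value from the Cloud Trace quotas page. The helper names are hypothetical.

```python
from typing import Any, Dict


def truncate_utf8(value: str, max_bytes: int) -> str:
    """Trim value so that its UTF-8 encoding fits in max_bytes without splitting a character."""
    encoded = value.encode("utf-8")
    if len(encoded) <= max_bytes:
        return value
    # errors="ignore" drops a trailing partial multi-byte sequence left by the slice.
    return encoded[:max_bytes].decode("utf-8", errors="ignore")


def truncate_attributes(attributes: Dict[str, Any], max_bytes: int) -> Dict[str, Any]:
    """Return a copy of attributes with every string value trimmed to max_bytes."""
    return {
        key: truncate_utf8(val, max_bytes) if isinstance(val, str) else val
        for key, val in attributes.items()
    }


# Hypothetical limit for illustration only; not the real Cloud Trace quota.
MAX_ATTRIBUTE_BYTES = 16
attrs = {"output.value": "assistant: Yes I am here", "output.mime_type": "text/plain", "retries": 2}
print(truncate_attributes(attrs, MAX_ATTRIBUTE_BYTES))
```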

Pricing

Cloud Trace has a free tier. For more information, see Cloud Trace pricing.