File storage, also known as network-attached storage (NAS), provides file-level access to applications to read and update information that can be shared across multiple machines. Some on-premises file storage solutions have a scale-up architecture and simply add storage to a fixed amount of compute resources. Other file storage solutions have a scale-out architecture where capacity and compute (performance) can be incrementally added to an existing file system as needed. In both storage architectures, one or multiple virtual machines (VMs) can access the storage.

Although some file systems use a native POSIX client, many storage systems use a protocol that enables client machines to mount a file system and access the files as if they were hosted locally. The most common protocols for exporting file shares are Network File System (NFS) for Linux (and in some cases Windows) and Server Message Block (SMB) for Windows.

This document describes the following options for sharing files:

- Google Cloud Hyperdisk, Persistent Disk, or Local SSD

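- Managed solutions: Filestore Basic, Filestore Zonal, Filestore Regional, Managed Lustre, and NetApp Volumes

- Partner solutions in Google Cloud Marketplace: NetApp Cloud Volumes ONTAP, DDN Infinia, Nasuni Cloud File Storage, and Sycomp Intelligent Data Storage Platform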

An underlying factor in the performance and predictability of all of the Google Cloud services is the network stack that Google evolved over many years. With the Jupiter Fabric, Google built a robust, scalable, and stable networking stack that can continue to evolve without affecting your workloads. As Google improves and bolsters its network abilities internally, your file-sharing solution benefits from the added performance.

One feature of Google Cloud that can help you get the most out of your investment is the ability to specify Custom VM types. When choosing the size of your filer, you can pick exactly the right mix of memory and CPU, so that your filer is operating at optimal performance without being oversubscribed.

Note that Cloud Storage is also a great way to store petabytes or exabytes of data with high levels of redundancy at a low cost, but Cloud Storage has a different performance profile and API than the file servers discussed here.

Summary of file-server solutions

The following table summarizes the file-server solutions and features:

| Solution | Optimal dataset size | Throughput | Managed support | Export protocols |
|---|---|---|---|---|
| Filestore Basic | 1 TiB to 64 TiB | Up to 1.2 GiB/s | Fully managed by Google | NFSv3 |
| Filestore Zonal | 1 TiB to 100 TiB | Up to 26 GiB/s | Fully managed by Google | NFSv3, NFSv4.1 |
| Filestore Regional | 1 TiB to 100 TiB | Up to 26 GiB/s | Fully managed by Google | NFSv3, NFSv4.1 |
| Managed Lustre | 18 TiB to 8 PiB | Up to 1 TB/s | Fully managed by Google | POSIX |
| NetApp Volumes | 1 GiB to 1 PiB | 1 MB/s to 30 GiB/s | Fully managed by Google | NFSv3, NFSv4.1, SMB3 |
| Read-only Persistent Disk | < 64 TB | 240 to 1,200 MB/s | No | Direct attachment |
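As a rough illustration of how the table can be used for a first-pass shortlist, the following Python sketch encodes the capacity ranges and export protocols of the managed solutions and filters them by a required capacity and protocol. The `Solution` class and `candidates` function are hypothetical names introduced here, the read-only Persistent Disk row is omitted because it isn't a network file share, and throughput, cost, and availability are deliberately ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Solution:
    name: str
    min_tib: float        # smallest dataset size from the table, in TiB
    max_tib: float        # largest dataset size from the table, in TiB
    protocols: frozenset  # export protocols from the table


# Values copied from the summary table (1 PiB = 1024 TiB, 1 GiB = 1/1024 TiB).
SOLUTIONS = [
    Solution("Filestore Basic", 1, 64, frozenset({"NFSv3"})),
    Solution("Filestore Zonal", 1, 100, frozenset({"NFSv3", "NFSv4.1"})),
    Solution("Filestore Regional", 1, 100, frozenset({"NFSv3", "NFSv4.1"})),
    Solution("Managed Lustre", 18, 8 * 1024, frozenset({"POSIX"})),
    Solution("NetApp Volumes", 1 / 1024, 1024,
             frozenset({"NFSv3", "NFSv4.1", "SMB3"})),
]


def candidates(capacity_tib, protocol):
    """Return the names of solutions whose range and protocols fit."""
    return [
        s.name
        for s in SOLUTIONS
        if s.min_tib <= capacity_tib <= s.max_tib and protocol in s.protocols
    ]


if __name__ == "__main__":
    print(candidates(50, "NFSv4.1"))
    print(candidates(200, "SMB3"))
```

A shortlist produced this way is only a starting point; the considerations described later in this document (manageability, durability, availability, and location) still apply.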

Durable disks and Local SSD

If you have data that only needs to be accessed by a single VM or doesn't change over time, you can avoid a file server altogether by using the durable disks offered by Compute Engine: Hyperdisk or Persistent Disk. You can format Hyperdisk and Persistent Disk volumes with a file system such as Ext4 or XFS and attach them to VMs in either read-write or read-only mode. This means that you can first attach a volume to an instance, load it with the data you need, and then attach it as a read-only disk to hundreds of VMs simultaneously. Employing read-only disks doesn't work for all use cases, but it can greatly reduce complexity compared to using a file server.
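The read-only fan-out step can be sketched as follows. This minimal Python example only builds the `gcloud compute instances attach-disk` command lines (with `--mode=ro`) for a list of VMs; it doesn't run them. The disk, zone, and instance names are hypothetical placeholders, and the sketch assumes that the disk has already been loaded with data and detached from the VM that wrote it.

```python
import shlex


def attach_read_only_commands(disk, zone, instances):
    """Build one gcloud command (as an argv list) per VM."""
    return [
        [
            "gcloud", "compute", "instances", "attach-disk", instance,
            f"--disk={disk}",
            "--mode=ro",          # read-only attachment
            f"--zone={zone}",
        ]
        for instance in instances
    ]


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    vms = [f"render-node-{i}" for i in range(3)]
    for argv in attach_read_only_commands("shared-assets", "us-central1-a", vms):
        print(shlex.join(argv))
```

Generating the commands separately from running them makes it easy to review the list, or to pass each argv list to `subprocess.run`, before you change any VM.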

Durable disks deliver consistent performance. All Persistent Disk volumes of the same size (and for SSD Persistent Disk, the same number of vCPUs) that you attach to your instance have the same performance characteristics. You don't need to pre-warm or test your disks before using them in production.

The cost of persistent disks is simple to determine because there are no I/O costs to consider after provisioning your volume. Persistent disks can also be resized when required. This lets you start with a low-cost and low-capacity volume, and you need not create additional instances or disks to scale your capacity.

If total storage capacity is the main requirement, you can use low-cost standard persistent disks. For the best performance while continuing to be durable, you can use SSD persistent disks.

Furthermore, it's important that you choose the correct Compute Engine persistent disk capacity and number of vCPUs to ensure that your file server's storage devices receive the required storage bandwidth, IOPS, and network bandwidth. The network bandwidth for VMs depends on the machine type that you choose. For example, A4 VMs have a maximum network bandwidth of up to 3,600 Gbps. For more information, see Machine families resource and comparison guide. For information about tuning persistent disks, see About Persistent Disk performance.
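To make the sizing reasoning concrete, the following Python sketch converts a network bandwidth figure from Gbps to GB/s and takes the smaller of the storage and network limits as a rough ceiling on what a file server can deliver to clients. This is a simplified model under stated assumptions: it ignores protocol overhead, IOPS limits, and any network bandwidth consumed by the disks themselves. The function names and the 10 Gbps and 2.4 GB/s figures are hypothetical.

```python
def gbps_to_gb_per_s(gbps):
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbps / 8


def effective_throughput(storage_gb_per_s, network_gbps):
    """Rough ceiling: the smaller of the storage and network limits, in GB/s."""
    return min(storage_gb_per_s, gbps_to_gb_per_s(network_gbps))


if __name__ == "__main__":
    # The 3,600 Gbps figure quoted above for A4 VMs, expressed in GB/s.
    print(gbps_to_gb_per_s(3600))
    # Hypothetical VM with 10 Gbps networking and 2.4 GB/s of disk throughput:
    # the network, not the disk, is the limit.
    print(effective_throughput(2.4, 10))
```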

If your data is ephemeral and requires sub-millisecond latency and high I/O operations per second (IOPS), you can take advantage of up to 9 TB of Local SSDs for extreme performance. Local SSDs provide GB/s of bandwidth and millions of IOPS without using up your instances' allotted network bandwidth. Remember, though, that Local SSDs have trade-offs in availability, durability, and flexibility.

For more information about the storage options for Compute Engine, see Design an optimal storage strategy for your cloud workload.

Considerations when choosing a file storage solution

Choosing a file storage solution requires you to make tradeoffs regarding manageability, cost, performance, and scalability. Making the decision is easier if you have a well-defined workload, which isn't often the case. Where workloads evolve over time or are highly variable, it's prudent to trade cost savings for flexibility and elasticity, so that you can grow into your solution. On the other hand, if you have a temporary and well-known workload, you can create a purpose-built file storage architecture that you can tear down and rebuild to meet your immediate storage needs.

One of the first decisions to make is whether you want to pay for a managed storage service, a solution that includes product support, or an unsupported solution.

- Managed file storage services are the easiest to operate, because either Google or a partner is handling all operations. These services might even provide a service level agreement (SLA) for availability like most other Google Cloud services.

- Unmanaged, yet supported, solutions provide additional flexibility. Partners can help with any issues, but the day-to-day operation of the storage solution is left to the user.

- Unsupported solutions require the most effort to deploy and maintain, leaving all issues to the user. These solutions are not covered in this document.

Your next decision involves determining the solution's durability and availability requirements. Most file solutions are zonal and don't provide protection by default if the zone fails. Therefore, consider whether you need a disaster recovery (DR) solution that protects against zonal failures. It's also important to understand the application requirements for durability and availability. For example, the choice of local SSDs or persistent disks in your deployment has a big impact, as does the configuration of the file solution software. Each solution requires careful planning to achieve high durability, availability, and even protection against zonal and regional failures.

Finally, consider the locations (that is, zones, regions, on-premises data centers) where you need to access the data. The locations of the compute farms that access your data influence your choice of filer solution because only some solutions allow hybrid on-premises and in-cloud access.

Managed file storage solutions

This section describes the Google-managed solutions for file storage.

Filestore Basic

Filestore Basic instances are suitable for file sharing, software development, and GKE workloads. You can choose either HDD or SSD for storing data. SSD provides better performance. With either option, capacity scales up incrementally, and you can protect the data by using backups.

Filestore Zonal

Filestore Zonal simplifies enterprise storage and data management on Google Cloud and across hybrid clouds. Filestore Zonal delivers cost-effective, high-performance parallel access to global data while maintaining strict consistency powered by a dynamically scalable, distributed file system. With Filestore Zonal, existing NFS applications and NAS workflows can run in the cloud without requiring refactoring, yet retain the benefits of enterprise data services (for example, snapshots and backups). The Filestore CSI driver allows seamless data persistence, portability, and sharing for containerized workloads.

You can scale Filestore Zonal instances on demand. This lets you create and expand file system infrastructure when required, ensuring that storage performance and capacity always align with your dynamic workflow requirements. As a Filestore Zonal cluster expands, both metadata and I/O performance scale linearly. This scaling lets you enhance and accelerate a broad range of data-intensive workflows, including high performance computing, analytics, cross-site data aggregation, DevOps, and many more. As a result, Filestore Zonal is a great fit for use in data-centric industries such as life sciences (for example, genome sequencing), financial services, and media and entertainment.

To further protect critical data, Filestore Zonal also lets you take and keep periodic snapshots, create backups, and replicate to another region. With Filestore, you can recover an individual file or an entire file system in less than 10 minutes from any of the prior recovery points.

Filestore Regional

Filestore Regional is a fully managed cloud-native NFS solution that lets you deploy critical file-based applications in Google Cloud, backed by an SLA that delivers 99.99% regional availability. With a 99.99% regional-availability SLA, Filestore Regional is designed for applications that demand high availability. With a few mouse clicks (or a few gcloud commands or API calls), you can provision NFS shares that are synchronously replicated across three zones within a region. If any zone within the region becomes unavailable, Filestore Regional continues to transparently serve data to the application with no operational intervention.
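To put the 99.99% figure in perspective, the following Python sketch converts an availability percentage into the downtime that it permits over a period. It's plain arithmetic that assumes a 30-day month by default; the actual SLA terms define how availability is measured, and the function name is hypothetical.

```python
def allowed_downtime_minutes(availability_pct, days=30):
    """Minutes of downtime permitted over `days` at the given availability."""
    total_minutes = days * 24 * 60
    return (1 - availability_pct / 100) * total_minutes


if __name__ == "__main__":
    # At 99.99%, roughly 4.3 minutes of downtime fit in a 30-day month.
    print(round(allowed_downtime_minutes(99.99), 2))
```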

To further protect critical data, Filestore Regional also lets you take and keep periodic snapshots, create backups, and replicate to another region. With Filestore, you can recover an individual file or an entire file system in less than 10 minutes from any of the prior recovery points.


For critical applications like SAP, both the database and application tiers need to be highly available. To satisfy this requirement, you can deploy the SAP database tier to Google Cloud Hyperdisk Extreme, in multiple zones using built-in database high availability. Similarly, the NetWeaver application tier, which requires shared executables across many VMs, can be deployed to Filestore Regional, which replicates the NetWeaver data across multiple zones within a region. The end result is a highly available three-tier mission-critical application architecture.

IT organizations are also increasingly deploying stateful applications in containers on Google Kubernetes Engine (GKE). This often causes them to rethink which storage infrastructure to use to support those applications. You can use block storage (Hyperdisk or Persistent Disk), file storage (Filestore Basic, Zonal, or Regional), or object storage (Cloud Storage). Filestore Basic HDD for GKE and Filestore multishares for GKE, combined with the Filestore CSI driver, let organizations give multiple GKE Pods shared file access, which provides an increased level of availability for mission-critical workloads.

Managed Lustre

Managed Lustre is a Google-managed service that provides high-throughput, low-latency storage for tightly coupled HPC workloads. By giving workloads fast access to massive datasets, it significantly accelerates HPC workloads and AI training and inference. For information about using Managed Lustre for AI and ML workloads, see Design storage for AI and ML workloads in Google Cloud. Managed Lustre distributes data across multiple storage nodes, which enables concurrent access by many VMs. This parallel access eliminates bottlenecks that occur with conventional file systems, and it enables workloads to rapidly ingest and process the vast amounts of data required.
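The idea of distributing a file across storage nodes can be illustrated with a conceptual round-robin striping model, which is the general approach that parallel file systems use. The following Python sketch is not the layout logic of the Managed Lustre service: the function names, the 1 MiB stripe size, and the four storage targets are assumptions chosen for illustration.

```python
def stripe_target(offset, stripe_size, stripe_count):
    """Index of the storage target that holds the byte at `offset`."""
    return (offset // stripe_size) % stripe_count


def split_read(offset, length, stripe_size, stripe_count):
    """Split a byte range into (target, offset, length) chunks, one per stripe."""
    chunks = []
    end = offset + length
    while offset < end:
        # End of the stripe that contains the current offset.
        stripe_end = (offset // stripe_size + 1) * stripe_size
        chunk_len = min(end, stripe_end) - offset
        chunks.append(
            (stripe_target(offset, stripe_size, stripe_count), offset, chunk_len)
        )
        offset += chunk_len
    return chunks


if __name__ == "__main__":
    MIB = 1024 * 1024
    # A 4 MiB read over 4 targets touches every target once, so the four
    # chunks can be fetched in parallel.
    for chunk in split_read(0, 4 * MIB, MIB, 4):
        print(chunk)
```

Because consecutive stripes land on different targets, a large sequential read or many concurrent clients spread their load across all targets instead of queuing on one server.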

NetApp Volumes

NetApp Volumes is a fully managed Google service that lets you quickly mount shared file storage to your Google Cloud compute instances. NetApp Volumes supports SMB, NFS, and multi-protocol access. NetApp Volumes delivers high performance to your applications at low latency, with robust data-protection capabilities: snapshots, copies, cross-region replication, and backup. The service is suitable for applications requiring both sequential and random workloads, which can scale across hundreds or thousands of Compute Engine instances. In seconds, volumes that range in size from GiBs to a PiB can be provisioned and protected with robust data protection capabilities. With multiple service levels (Flex, Standard, Premium, and Extreme), NetApp Volumes delivers the appropriate performance for your workload, without affecting availability.

Partner solutions in Cloud Marketplace

The following partner-provided solutions are available in Cloud Marketplace.

NetApp Cloud Volumes ONTAP

NetApp Cloud Volumes ONTAP (NetApp CVO) is a customer-managed, cloud-based solution that brings the full feature set of ONTAP, NetApp's leading data management operating system, to Google Cloud. NetApp CVO is deployed within your VPC, with billing and support from Google. The ONTAP software runs on a Compute Engine VM, and uses a combination of persistent disks and Cloud Storage buckets (if tiering is enabled) to store the NAS data. The built-in filer accommodates the NAS volumes using thin provisioning so that you pay only for the storage you use. As the data grows, additional persistent disks are added to the aggregate capacity pool.

NetApp CVO abstracts the underlying infrastructure and lets you create virtual data volumes carved out of the aggregate pool that are consistent with all other ONTAP volumes on any cloud or on-premises environment. The data volumes you create support all versions of NFS, SMB, multi-protocol NFS/SMB, and iSCSI. They support a broad range of file-based workloads, including web and rich media content, used across many industries such as electronic design automation (EDA) and media and entertainment.

NetApp CVO supports instant, space-saving point-in-time snapshots; built-in, block-level, incremental-forever backup to Cloud Storage; and cross-region asynchronous replication for disaster recovery. The option to select the type of Compute Engine instance and persistent disks lets you achieve the performance you want for your workloads. Even when operating in a high-performance configuration, NetApp CVO implements storage efficiencies such as deduplication, compaction, and compression, and it auto-tiers infrequently used data to the Cloud Storage bucket. These efficiencies let you store petabytes of data while significantly reducing overall storage costs.

DDN Infinia

If you need advanced AI data orchestration, you can use DDN Infinia, which is available in Google Cloud Marketplace. Infinia provides an AI-focused data intelligence solution that's optimized for inference, training, and real-time analytics. It enables ultra-fast data ingestion, metadata-rich indexing, and seamless integration with AI frameworks like TensorFlow and PyTorch.

The following are the key features of DDN Infinia:

- High performance: Delivers sub-millisecond latency and multiple TB/s throughput.

- Scalability: Supports scaling from terabytes to exabytes and can accommodate up to 100,000+ GPUs and one million simultaneous clients in a single deployment.

- Multi-tenancy with predictable quality of service (QoS): Offers secure, isolated environments for multiple tenants with predictable QoS for consistent performance across workloads.

- Unified data access: Enables seamless integration with existing applications and workflows through built-in multi-protocol support, including Amazon S3-compatible access, CSI, and Cinder.

- Advanced security: Features built-in encryption, fault-domain-aware erasure coding, and snapshots that help to ensure data protection and compliance.

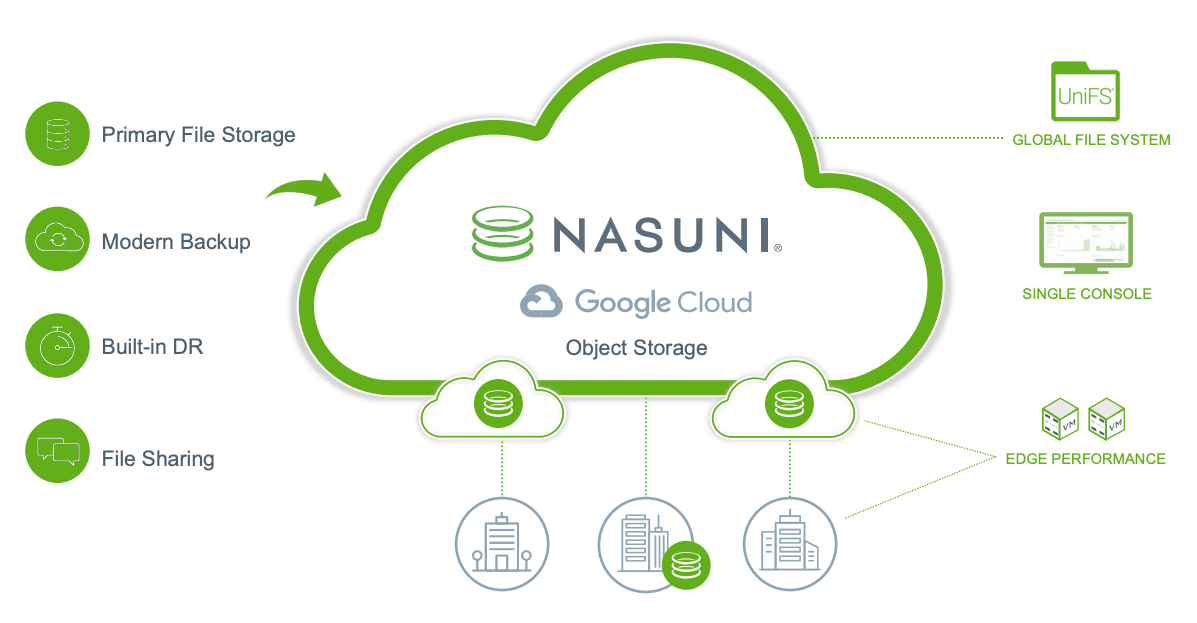

Nasuni Cloud File Storage

Nasuni replaces enterprise file servers and NAS devices and all associated infrastructures, including backup and DR hardware, with a simpler, low-cost cloud alternative. Nasuni uses Google Cloud object storage to deliver a more efficient software-as-a-service (SaaS) storage solution that scales to handle rapid, unstructured file data growth. Nasuni is designed to handle department, project, and organizational file shares and application workflows for every employee, wherever they work.

Nasuni offers three packages, with pricing for companies and organizations of all sizes so they can grow and expand as needed.

Its benefits include the following:

- Cloud-based primary file storage for up to 70% less: Nasuni's architecture takes advantage of built-in object lifecycle management policies. These policies allow complete flexibility for use with Cloud Storage classes, including Standard, Nearline, Coldline, and Archive. By using the immediate-access Archive class for primary storage with Nasuni, you can realize cost savings of up to 70%.

- Departmental and organizational file shares in the cloud: Nasuni's cloud-based architecture offers a single global namespace across Google Cloud regions, with no limits on the number of files, file sizes, or snapshots, letting you store files directly from your desktop into Google Cloud through standard NAS (SMB) drive-mapping protocols.

- Built-in backup and disaster recovery: Nasuni's "set-it and forget-it" operations make it simple to manage global file storage. Backup and DR are included, and a single management console lets you oversee and control the environment anywhere, anytime.

- Replaces aging file servers: Nasuni makes it simple to migrate Microsoft Windows file servers and other existing file storage systems to Google Cloud, reducing costs and management complexity of these environments.

For more information, see the following:

- Nasuni guided tour

- Nasuni and Google Cloud partnership

- Nasuni Enterprise File Storage for Google Cloud solution brief (PDF)

- Nasuni Cloud File Storage in Cloud Marketplace

- Nasuni and Google Cloud blog

Sycomp Intelligent Data Storage Platform

Sycomp Intelligent Data Storage Platform, which is available in Google Cloud Marketplace, lets you run your high performance computing (HPC), AI and ML, and big data workloads in Google Cloud. With Sycomp Storage, you can concurrently access data from thousands of VMs, reduce costs by automatically managing tiers of storage, and run your application on-premises or in Google Cloud. Sycomp Storage can be deployed quickly, and it supports access to your data through NFS and the IBM Storage Scale client.

IBM Storage Scale is a parallel file system that helps to securely manage large volumes (PBs) of data. Sycomp Storage Scale is a parallel file system that's well suited for HPC, AI, ML, big data, and other applications that require a POSIX-compliant shared file system. With adaptable storage capacity and performance scaling, Sycomp Storage can support small to large HPC, AI, and ML workloads.

After you deploy a cluster in Google Cloud, you decide how you want to use it. Choose whether you want to use the cluster only in the cloud or in hybrid mode by connecting to existing on-premises IBM Storage Scale clusters, third-party NFS NAS solutions, or other object-based storage solutions.

Contributors

Author: Sean Derrington | Group Product Manager, Storage

Other contributors:

- Dean Hildebrand | Technical Director, Office of the CTO

- Kumar Dhanagopal | Cross-Product Solution Developer