Create a data pipeline

This quickstart shows you how to do the following:

- Create a Cloud Data Fusion instance.

- Deploy a sample pipeline that's provided with your Cloud Data Fusion instance. The pipeline does the following:

  - Reads a JSON file containing NYT bestseller data from Cloud Storage.

  - Runs transformations on the file to parse and clean the data.

  - Loads the top-rated books added in the last week that cost less than $25 into BigQuery.
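The three pipeline stages (read, parse and clean, filter and load) can be pictured with a small in-memory sketch. The field names (`title`, `price`, `rating`, `added`), the 4.0 rating threshold, and the sample records are assumptions made for illustration; the actual schema and transformation logic are defined in the sample pipeline itself.

```python
import json
from datetime import date, timedelta

# Hypothetical input: the real file's field names and values are not shown
# in this quickstart, so these records are illustrative only.
RAW_JSON = """
[
  {"title": " Book A ", "price": "19.99", "rating": 4.5, "added": "2024-01-10"},
  {"title": "Book B",   "price": "29.99", "rating": 4.8, "added": "2024-01-11"},
  {"title": "Book C",   "price": "12.50", "rating": 3.1, "added": "2024-01-12"},
  {"title": "Book D",   "price": "9.99",  "rating": 4.9, "added": "2023-12-01"}
]
"""


def parse_and_clean(raw):
    """Parse the JSON text and normalize each record's types."""
    return [
        {
            "title": rec["title"].strip(),
            "price": float(rec["price"]),
            "rating": float(rec["rating"]),
            "added": date.fromisoformat(rec["added"]),
        }
        for rec in json.loads(raw)
    ]


def select_books(books, today, max_price=25.0, min_rating=4.0, days=7):
    """Keep highly rated books under max_price added within `days` of `today`."""
    cutoff = today - timedelta(days=days)
    return [
        b
        for b in books
        if b["price"] < max_price
        and b["rating"] >= min_rating  # assumed meaning of "top-rated"
        and b["added"] >= cutoff
    ]


rows = select_books(parse_and_clean(RAW_JSON), today=date(2024, 1, 14))
print([r["title"] for r in rows])
```

In the real pipeline, the final step writes the selected rows to a BigQuery table rather than printing them.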

Before you begin

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role; you can select any project that you've been granted a role on.

  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Enable the Cloud Data Fusion API.

  Roles required to enable APIs

  To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.
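As an alternative to the console, the API can be enabled with a call to the Service Usage REST API. The sketch below only builds the request and does not send it. It assumes the Cloud Data Fusion service name is `datafusion.googleapis.com` and that you already hold an OAuth 2.0 access token; `my-project` and `ACCESS_TOKEN` are placeholders, and `build_enable_request` is a hypothetical helper name.

```python
import json
import urllib.request


def build_enable_request(project_id, access_token, service="datafusion.googleapis.com"):
    """Build (but do not send) a Service Usage 'enable' request."""
    url = (
        "https://serviceusage.googleapis.com/v1/"
        f"projects/{project_id}/services/{service}:enable"
    )
    return urllib.request.Request(
        url,
        data=json.dumps({}).encode("utf-8"),  # the call takes an empty JSON body
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


# Placeholder values; to send the request, pass it to urllib.request.urlopen().
req = build_enable_request("my-project", "ACCESS_TOKEN")
print(req.get_method(), req.full_url)
```

The caller needs the serviceusage.services.enable permission described above for the request to succeed.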

Create a Cloud Data Fusion instance

- Click Create an instance.

- Enter an Instance name.

- Enter a Description for your instance.

- Enter the Region in which to create the instance.

- Choose the Cloud Data Fusion Version to use.

- Choose the Cloud Data Fusion Edition.

- For Cloud Data Fusion versions 6.2.3 and later, in the Authorization field, choose the Dataproc service account to use for running your Cloud Data Fusion pipeline in Dataproc. The default value, Compute Engine account, is pre-selected.

- Click Create. It takes up to 30 minutes for the instance creation process to complete. While Cloud Data Fusion creates your instance, a progress wheel displays next to the instance name on the Instances page. After completion, it turns into a green check mark and indicates that you can start using the instance.
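The same steps can be approximated against the Cloud Data Fusion REST API. The following is a minimal sketch under stated assumptions: the `instances` endpoint with an `instanceId` query parameter, the `type` field for the edition, and the `ACTIVE` and `FAILED` state names reflect my reading of the v1 API and should be checked against the API reference. The helper names, project, region, and instance ID are hypothetical. The polling loop takes a caller-supplied `get_state` function, so the sketch itself makes no network calls.

```python
import json
import time
import urllib.parse

API_ROOT = "https://datafusion.googleapis.com/v1"


def create_instance_request(project_id, region, instance_id, edition="BASIC", description=""):
    """Return the URL and JSON body for an instance-creation POST."""
    parent = f"projects/{project_id}/locations/{region}"
    query = urllib.parse.urlencode({"instanceId": instance_id})
    body = {"type": edition, "description": description}
    return f"{API_ROOT}/{parent}/instances?{query}", json.dumps(body)


def wait_until_active(get_state, timeout_s=30 * 60, interval_s=30, sleep=time.sleep):
    """Poll get_state() until it reports ACTIVE or FAILED, or the timeout passes.

    The default timeout mirrors the "up to 30 minutes" noted above.
    """
    waited = 0
    while True:
        state = get_state()
        if state == "ACTIVE":
            return True
        if state == "FAILED":
            raise RuntimeError("instance creation failed")
        if waited >= timeout_s:
            return False
        sleep(interval_s)
        waited += interval_s


url, body = create_instance_request("my-project", "us-central1", "quickstart-instance")
print(url)
print(body)
```

Passing `sleep` as a parameter keeps the loop easy to test: a no-op function can stand in for `time.sleep`.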

Navigate the Cloud Data Fusion web interface

When using Cloud Data Fusion, you use both the Google Cloud console and the separate Cloud Data Fusion web interface.

In the Google Cloud console, you can do the following:

- Create a Google Cloud project

- Create and delete Cloud Data Fusion instances

- View the Cloud Data Fusion instance details

In the Cloud Data Fusion web interface, you work with Cloud Data Fusion functionality through pages such as Studio and Wrangler.

To navigate the Cloud Data Fusion interface, follow these steps:

- In the Google Cloud console, open the Instances page.

- In the instance Actions column, click the View Instance link.

- In the Cloud Data Fusion web interface, use the left navigation panel to navigate to the page you need.

Deploy a sample pipeline

Sample pipelines are available through the Cloud Data Fusion Hub, which lets you share reusable Cloud Data Fusion pipelines, plugins, and solutions.

- In the Cloud Data Fusion web interface, click Hub.

- In the left panel, click Pipelines.

- Click the Cloud Data Fusion Quickstart pipeline.

- Click Create.

- In the Cloud Data Fusion Quickstart configuration panel, click Finish.

- Click Customize Pipeline.

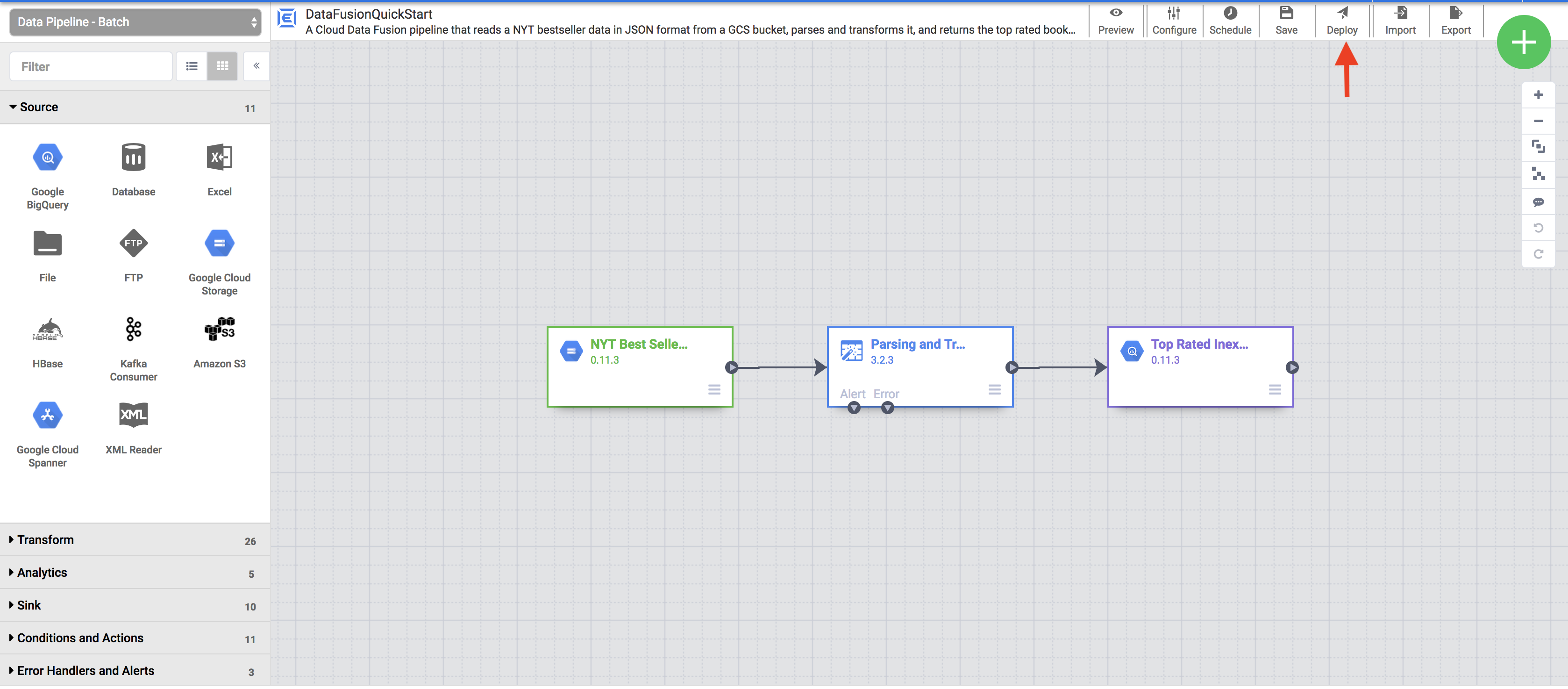

A visual representation of your pipeline appears on the Studio page, which is a graphical interface for developing data integration pipelines. Available pipeline plugins are listed on the left, and your pipeline is displayed on the main canvas area. You can explore your pipeline by holding the pointer over each pipeline node and clicking Properties. The properties menu for each node lets you view the objects and operations associated with the node.

- In the top-right menu, click Deploy. This step submits the pipeline to Cloud Data Fusion. You will execute the pipeline in the next section of this quickstart.

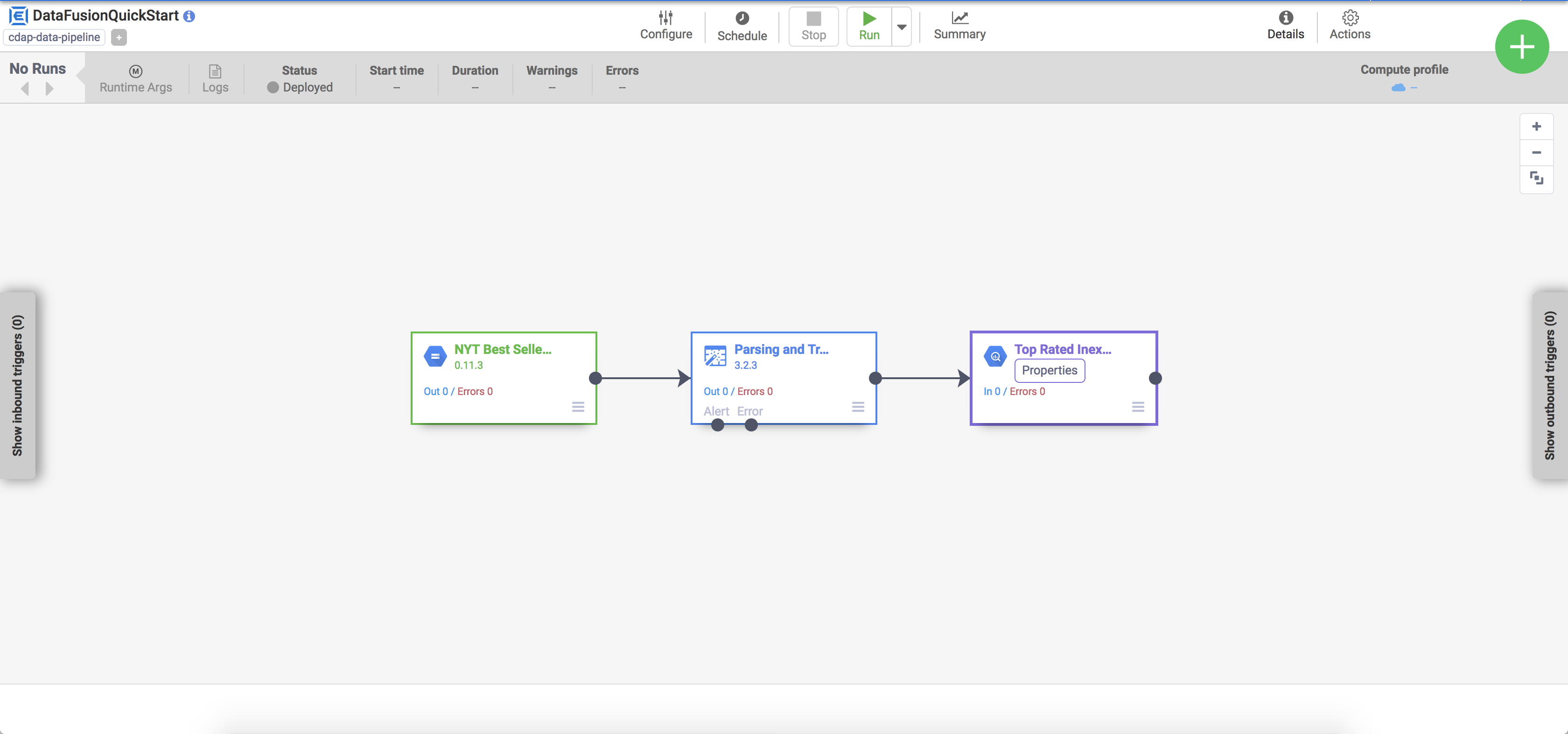

View your pipeline

The deployed pipeline appears in the pipeline details view, where you can do the following:

- View the structure and configuration of the pipeline.

- Run the pipeline manually or set up a schedule or a trigger.

- View a summary of historical runs of the pipeline, including execution times, logs, and metrics.

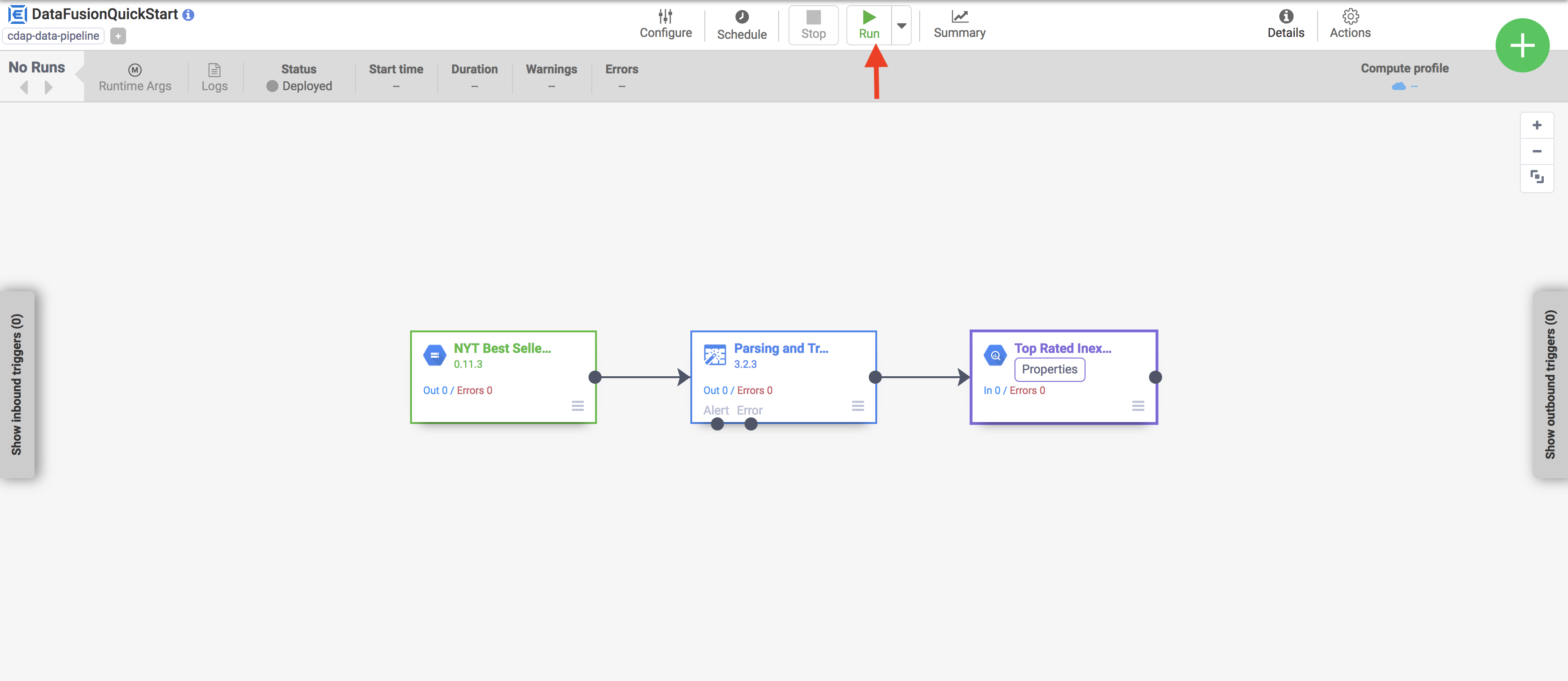

Execute your pipeline

In the pipeline details view, click Run to execute your pipeline.

When executing a pipeline, Cloud Data Fusion does the following:

- Provisions an ephemeral Dataproc cluster

- Executes the pipeline on the cluster using Apache Spark

- Deletes the cluster
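Clicking Run has a programmatic counterpart in the CDAP REST API that Cloud Data Fusion exposes. The sketch below builds, but does not send, the request that starts a batch pipeline. It assumes the `DataPipelineWorkflow` start path used for batch pipelines; the endpoint URL, the pipeline name `DataFusionQuickstart`, the token, and the helper name are placeholders, so substitute your instance's API endpoint and the name your pipeline was deployed under.

```python
import urllib.parse
import urllib.request


def build_start_request(cdap_endpoint, pipeline_name, access_token, namespace="default"):
    """Build (but do not send) the POST that starts a batch pipeline run."""
    url = (
        f"{cdap_endpoint.rstrip('/')}"
        f"/v3/namespaces/{urllib.parse.quote(namespace)}"
        f"/apps/{urllib.parse.quote(pipeline_name)}"
        "/workflows/DataPipelineWorkflow/start"
    )
    return urllib.request.Request(
        url,
        data=b"",  # no runtime arguments in this sketch
        method="POST",
        headers={"Authorization": f"Bearer {access_token}"},
    )


# Hypothetical endpoint and pipeline name, for illustration only.
req = build_start_request(
    "https://example-instance.invalid/api", "DataFusionQuickstart", "ACCESS_TOKEN"
)
print(req.get_method(), req.full_url)
```

Provisioning and deleting the Dataproc cluster remain Cloud Data Fusion's responsibility; the request only triggers the run.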

View the results

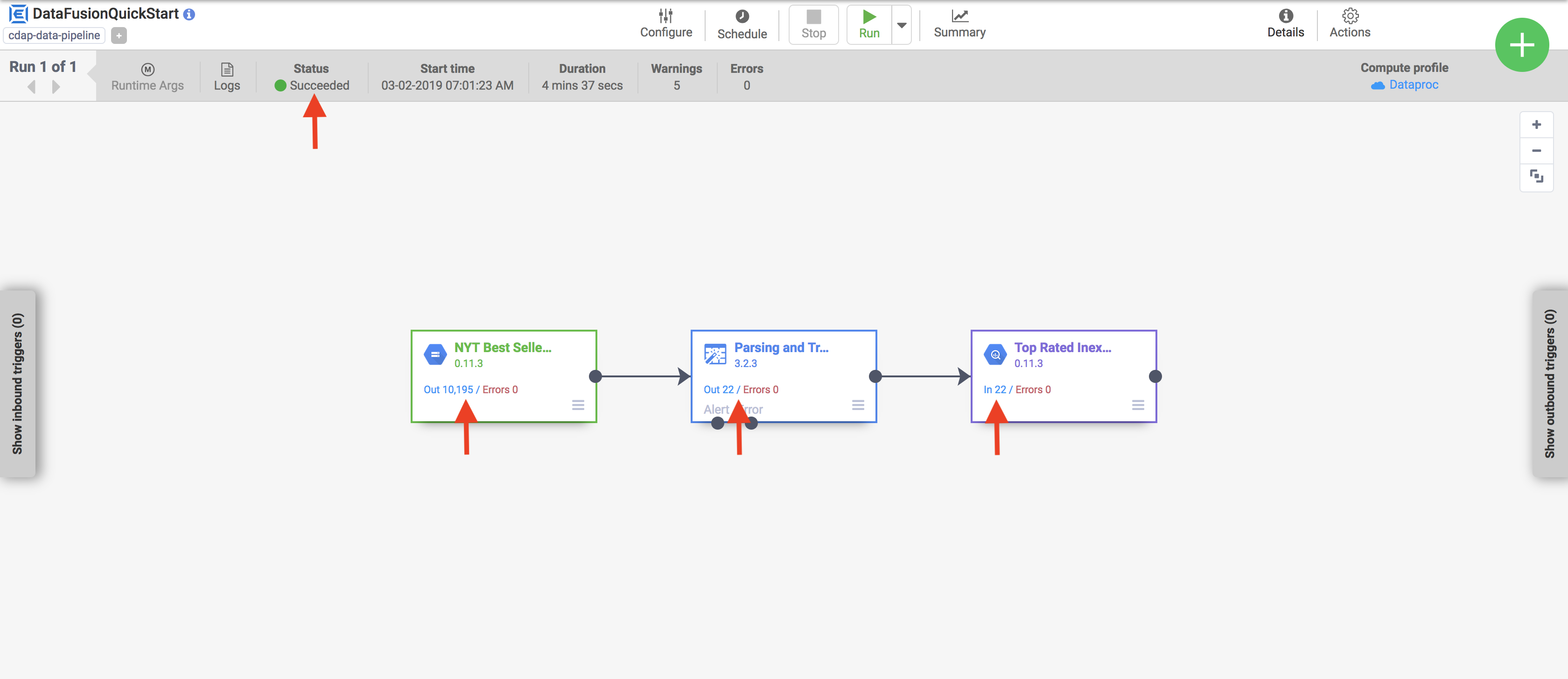

After a few minutes, the pipeline finishes. The pipeline status changes to Succeeded and the number of records processed by each node is displayed.

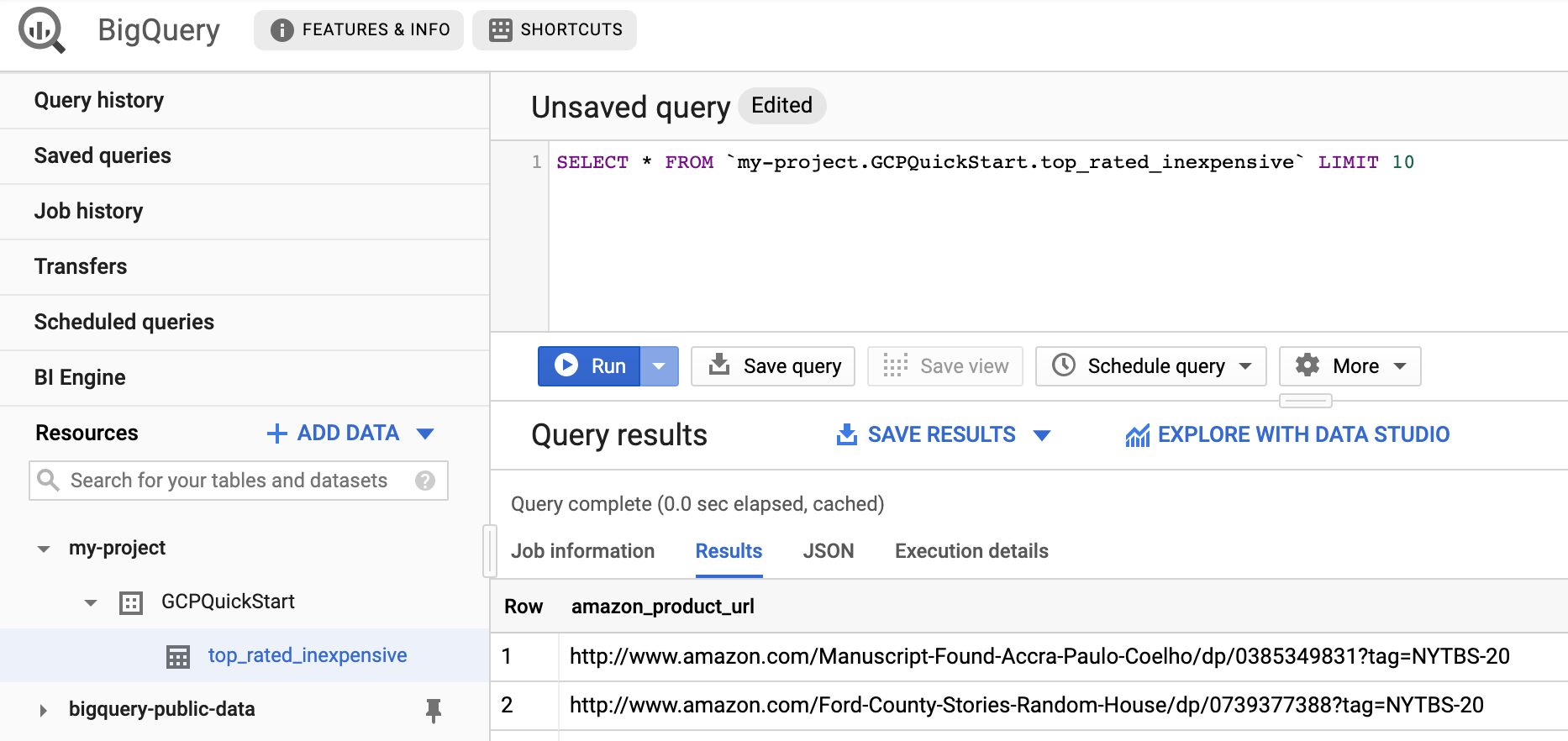

- Go to the BigQuery web interface.

- To view a sample of the results, go to the DataFusionQuickstart dataset in your project, click the top_rated_inexpensive table, and then run a simple query. For example:

  SELECT * FROM PROJECT_ID.GCPQuickStart.top_rated_inexpensive LIMIT 10

  Replace PROJECT_ID with your project ID.
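The same query can be run from Python. This is a minimal sketch that assumes the `google-cloud-bigquery` client library is installed and that application default credentials are configured. The helper names are hypothetical. The dataset defaults to `GCPQuickStart`, as in the query above; because the text also refers to a DataFusionQuickstart dataset, pass whichever dataset name actually appears in your project.

```python
def build_sample_query(project_id, dataset="GCPQuickStart",
                       table="top_rated_inexpensive", limit=10):
    """Return the sample SELECT statement with a fully qualified table name."""
    # Backticks let BigQuery accept project IDs that contain hyphens.
    return f"SELECT * FROM `{project_id}.{dataset}.{table}` LIMIT {int(limit)}"


def run_sample_query(project_id, **kwargs):
    """Run the sample query and return the rows as plain dictionaries."""
    # Imported lazily so build_sample_query works without the library installed.
    from google.cloud import bigquery

    client = bigquery.Client(project=project_id)
    job = client.query(build_sample_query(project_id, **kwargs))
    return [dict(row.items()) for row in job.result()]


print(build_sample_query("my-project"))
```

Call `run_sample_query("PROJECT_ID")` with your own project ID to fetch the rows.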

Clean up

To avoid incurring charges to your Google Cloud account for the resources used on this page, follow these steps.

- Delete the BigQuery dataset that your pipeline wrote to in this quickstart.

- Optional: Delete the project.

  - In the Google Cloud console, go to the Manage resources page.

  - In the project list, select the project that you want to delete, and then click Delete.

  - In the dialog, type the project ID, and then click Shut down to delete the project.
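The dataset cleanup step can also be scripted. The sketch below assumes the `google-cloud-bigquery` client library and uses its `delete_dataset` method; the dataset name `GCPQuickStart` is taken from the sample query earlier and may differ in your project. The helper and the `_RecordingClient` stand-in are hypothetical, and the stand-in exists only so the sketch can be dry-run without credentials.

```python
def delete_quickstart_dataset(client, project_id, dataset="GCPQuickStart"):
    """Delete the dataset and its tables; do nothing if it is already gone."""
    dataset_id = f"{project_id}.{dataset}"
    client.delete_dataset(dataset_id, delete_contents=True, not_found_ok=True)
    return dataset_id


class _RecordingClient:
    """Dry-run stand-in that records calls instead of contacting BigQuery."""

    def __init__(self):
        self.calls = []

    def delete_dataset(self, dataset, **options):
        self.calls.append((dataset, options))


# Dry run with the stand-in. For a real run, pass
# google.cloud.bigquery.Client(project="PROJECT_ID") instead.
dry_run = _RecordingClient()
deleted = delete_quickstart_dataset(dry_run, "my-project")
print(deleted, dry_run.calls)
```

Deleting a dataset with `delete_contents=True` removes its tables permanently, so confirm the dataset name before running this against a real project.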