For streaming pipelines, a straggler is defined as a work item with the following characteristics:

- It prevents the watermark from advancing for a significant length of time (on the order of minutes).

- It takes a long time to process relative to other work items in the same stage.

Stragglers hold back the watermark and add latency to the job. If the lag is acceptable for your use case, you don't need to take any action. If you want to reduce a job's latency, start by addressing any stragglers.

View streaming stragglers in the Google Cloud console

After you start a Dataflow job, you can use the Google Cloud console to view any detected stragglers.

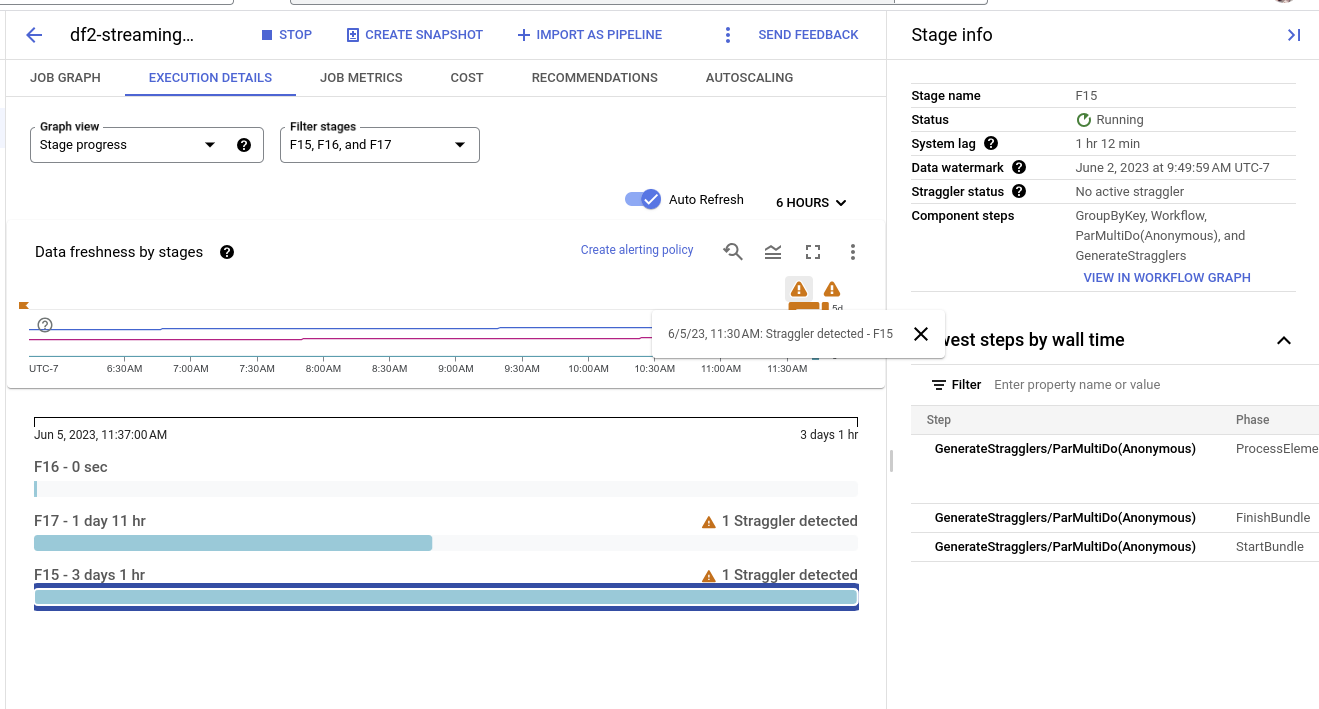

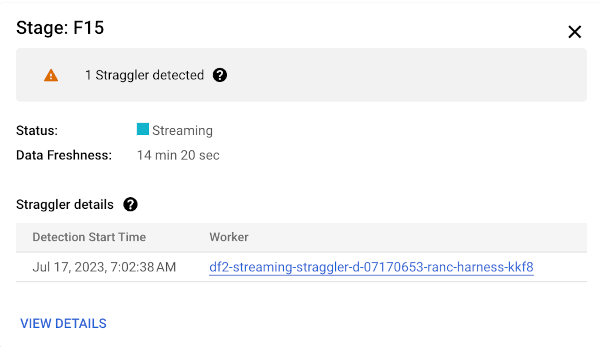

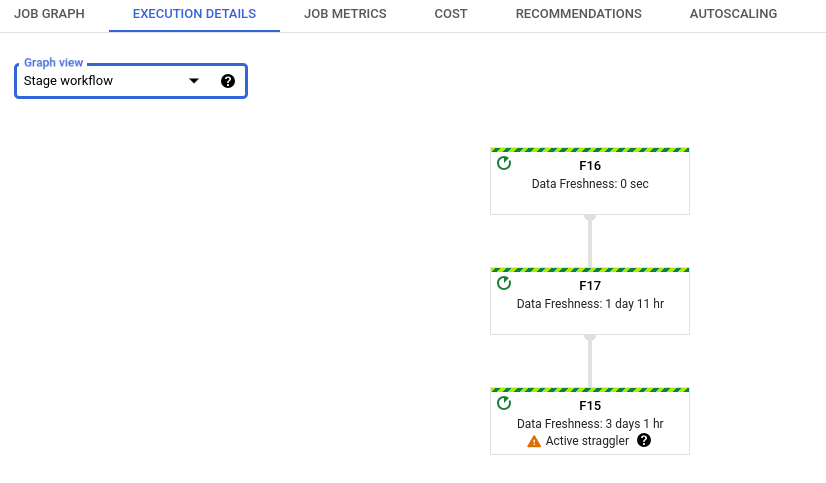

You can view streaming stragglers in the stage progress view or the stage workflow view.

View stragglers by stage progress

To view stragglers by stage progress:

In the Google Cloud console, go to the Dataflow Jobs page.

Click the name of the job.

On the Job details page, click the Execution details tab.

In the Graph view list, select Stage progress. The progress graph shows aggregated counts of all stragglers detected within each stage.

To see details for a stage, hold the pointer over the bar for that stage. The details pane includes a link to the worker logs. Clicking this link opens Cloud Logging scoped to the worker and the time range when the straggler was detected.
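If you want to reproduce that scoping by hand in the Logs Explorer, the following Python sketch builds a Logging query filter for one worker and a time window around the detection time. The function name `straggler_log_filter`, the 10-minute default window, and the use of the `compute.googleapis.com/resource_name` label to identify the worker are assumptions made for illustration. Compare the result with the filter that the console link actually generates before relying on it.

```python
from datetime import datetime, timedelta, timezone


def straggler_log_filter(
    job_id: str,
    worker_name: str,
    detected_at: datetime,
    window: timedelta = timedelta(minutes=10),
) -> str:
    """Build a Cloud Logging filter for one worker around a detection time.

    detected_at should be timezone-aware; a naive datetime would be
    interpreted as local time by astimezone().
    """
    start = (detected_at - window).astimezone(timezone.utc)
    end = (detected_at + window).astimezone(timezone.utc)
    fmt = "%Y-%m-%dT%H:%M:%SZ"  # RFC 3339 timestamps in UTC
    clauses = [
        'resource.type="dataflow_step"',
        f'resource.labels.job_id="{job_id}"',
        # Assumption: the worker VM name is carried in this entry label.
        f'labels."compute.googleapis.com/resource_name"="{worker_name}"',
        f'timestamp>="{start.strftime(fmt)}"',
        f'timestamp<="{end.strftime(fmt)}"',
    ]
    return " AND ".join(clauses)


# Example with placeholder identifiers (not real job or worker names).
query = straggler_log_filter(
    "example-job-id",
    "example-worker-name",
    datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc),
)
print(query)
```

Widening the window can help when the slow operation started well before the straggler was flagged.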

View stragglers by stage workflow

To view stragglers by stage workflow:

In the Google Cloud console, go to the Dataflow Jobs page.

Click the name of the job.

On the Job details page, click the Execution details tab.

In the Graph view list, select Stage workflow. The stage workflow shows the execution stages of the job, represented as a workflow graph.

Troubleshoot streaming stragglers

If a straggler is detected, an operation in your pipeline has been running for an unusually long time.

To troubleshoot the issue, first check whether Dataflow insights pinpoints any problems.

If you still can't determine the cause, check the worker logs for the stage that reported the straggler. To see the relevant worker logs, view the straggler details in the stage progress view, and then click the link for the worker. This link opens Cloud Logging, scoped to the worker and the time range when the straggler was detected. Look for problems that might be slowing down the stage, such as:

- Bugs in DoFn code or stuck DoFns. Look for stack traces in the logs, near the timestamp when the straggler was detected.

- Calls to external services that take a long time to complete. To mitigate this issue, batch calls to external services and set timeouts on RPCs.

- Quota limits in sinks. If your pipeline writes output to a Google Cloud service, you might be able to raise the quota. For more information, see the Cloud Quotas documentation. Also consult the documentation for the particular service for optimization strategies, as well as the documentation for the I/O connector.

- DoFns that perform large read or write operations on persistent state. Consider refactoring your code to perform smaller reads or writes on persistent state.
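As an illustration of the advice on external services, here is a minimal Python sketch that groups keys into batches and passes an explicit timeout to every call. The names `batched`, `lookup_in_batches`, and the `call_service(batch, timeout_s)` signature are hypothetical and stand in for whatever client your pipeline uses. The defaults of 100 items and 5 seconds are placeholders, not recommendations.

```python
from typing import Callable, Iterable, Iterator, List

# Hypothetical client signature: takes a batch of keys and a timeout in
# seconds, and returns one result per key. Real RPC clients expose their
# own timeout or deadline option.
ServiceCall = Callable[[List[str], float], List[str]]


def batched(items: Iterable[str], batch_size: int) -> Iterator[List[str]]:
    """Yield lists of at most batch_size items, preserving order."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: List[str] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # Flush the final, possibly smaller, batch.
        yield batch


def lookup_in_batches(
    keys: Iterable[str],
    call_service: ServiceCall,
    batch_size: int = 100,
    timeout_s: float = 5.0,
) -> List[str]:
    """Make one call per batch instead of one call per key."""
    results: List[str] = []
    for batch in batched(keys, batch_size):
        # The timeout bounds how long a single slow call can hold up the stage.
        results.extend(call_service(batch, timeout_s))
    return results
```

In a Beam pipeline, the grouping itself is often delegated to a batching transform such as `BatchElements` or `GroupIntoBatches` rather than written by hand. The sketch only shows the shape of the call pattern.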

You can also use the Side info panel to find the slowest steps in the stage. One of these steps might be causing the straggler. Click the step name to view the worker logs for that step.

After you determine the cause, update your pipeline with new code and monitor the result.

What's next

- Learn to use the Dataflow monitoring interface.

- Understand the Execution details tab in the monitoring interface.