Use the Bigtable change stream to BigQuery template

In this quickstart, you learn how to set up a Bigtable table with a change stream enabled, run a change stream pipeline, make changes to your table, and then see the changes streamed.

Before you begin

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role. You can select any project that you've been granted a role on.

  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.

- Enable the Dataflow, Cloud Bigtable, Cloud Bigtable Admin, and BigQuery APIs.

  Roles required to enable APIs

  To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.

- In the Google Cloud console, activate Cloud Shell.
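If you prefer the command line, the APIs listed above could also be enabled from Cloud Shell. The following is a minimal sketch, not part of the original steps. PROJECT_ID is a placeholder, and the run helper is a hypothetical wrapper that only prints each command unless you set DRY_RUN=0.

```shell
# Placeholder: replace with your project ID before running for real.
PROJECT_ID="PROJECT_ID"

# Hypothetical dry-run helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Enable the four APIs that this quickstart uses.
run gcloud services enable \
  dataflow.googleapis.com \
  bigtable.googleapis.com \
  bigtableadmin.googleapis.com \
  bigquery.googleapis.com \
  --project="$PROJECT_ID"
```

Review the printed command first, then rerun with DRY_RUN=0 to execute it.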

Create a BigQuery dataset

Use the Google Cloud console to create a dataset that stores the data.

In the Google Cloud console, go to the BigQuery page.

In the Explorer pane, click your project name.

Expand the Actions option and click Create dataset.

On the Create dataset page, do the following:

- For Dataset ID, enter bigtable_bigquery_quickstart.

- Leave the remaining default settings as they are, and click Create dataset.
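As an alternative to the console steps above, the dataset could be created with the bq command-line tool in Cloud Shell. This is a sketch under the assumption that the default dataset location is acceptable. PROJECT_ID is a placeholder and run is a hypothetical dry-run wrapper.

```shell
# Placeholder: replace with your project ID before running for real.
PROJECT_ID="PROJECT_ID"
DATASET="bigtable_bigquery_quickstart"

# Hypothetical dry-run helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Create the dataset that receives the change log table.
run bq mk --dataset "${PROJECT_ID}:${DATASET}"
```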

Create a table with a change stream enabled

In the Google Cloud console, go to the Bigtable Instances page.

Click the ID of the instance that you are using for this quickstart.

If you don't have an instance available, create an instance with the default configurations in a region near you.

In the left navigation pane, click Tables.

Click Create a table.

Name the table bigquery-changestream-quickstart.

Add a column family named cf.

Select Enable change stream.

Click Create.
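The table creation steps above could also be approximated with the gcloud CLI. This sketch assumes a change stream retention period of 7 days, which is an illustrative value and not something the console steps specify. PROJECT_ID and BIGTABLE_INSTANCE_ID are placeholders, and run is a hypothetical dry-run wrapper.

```shell
# Placeholders: replace with your own values before running for real.
PROJECT_ID="PROJECT_ID"
BIGTABLE_INSTANCE_ID="BIGTABLE_INSTANCE_ID"
TABLE_ID="bigquery-changestream-quickstart"
RETENTION="7d"  # assumed retention period; adjust as needed

# Hypothetical dry-run helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Create the table with one column family and a change stream enabled.
run gcloud bigtable instances tables create "$TABLE_ID" \
  --project="$PROJECT_ID" \
  --instance="$BIGTABLE_INSTANCE_ID" \
  --column-families=cf \
  --change-stream-retention-period="$RETENTION"
```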

On the Bigtable Tables page, find your table bigquery-changestream-quickstart.

In the Change stream column, click Connect.

In the dialog, select BigQuery.

Click Create Dataflow job.

In the provided parameter fields, enter your parameter values. You don't need to provide any optional parameters.

- Set the Bigtable application profile ID to default.

- Set the BigQuery dataset to bigtable_bigquery_quickstart.

Click Run job.

Wait until the job status is Starting or Running before proceeding. It takes around 5 minutes once the job is queued.

Keep the job open in a tab, so you can stop the job when cleaning up your resources.
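For reference, a roughly equivalent job might be launched from Cloud Shell with gcloud dataflow flex-template run. Treat this as a sketch: the template path and the parameter names are assumptions recalled from the template's reference documentation, so verify them before use. The region and job name are hypothetical, and run is a hypothetical dry-run wrapper.

```shell
# Placeholders and assumed values: replace before running for real.
PROJECT_ID="PROJECT_ID"
BIGTABLE_INSTANCE_ID="BIGTABLE_INSTANCE_ID"
REGION="us-central1"                      # hypothetical region
JOB_NAME="bigtable-bigquery-quickstart"   # hypothetical job name

# Assumed template location; check the template reference for the exact path.
TEMPLATE="gs://dataflow-templates-${REGION}/latest/flex/Bigtable_Change_Streams_to_BigQuery"

# Assumed parameter names, mirroring the values entered in the console form.
PARAMS="bigtableReadInstanceId=${BIGTABLE_INSTANCE_ID}"
PARAMS="${PARAMS},bigtableReadTableId=bigquery-changestream-quickstart"
PARAMS="${PARAMS},bigtableChangeStreamAppProfile=default"
PARAMS="${PARAMS},bigQueryDataset=bigtable_bigquery_quickstart"

# Hypothetical dry-run helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

run gcloud dataflow flex-template run "$JOB_NAME" \
  --project="$PROJECT_ID" \
  --region="$REGION" \
  --template-file-gcs-location="$TEMPLATE" \
  --parameters="$PARAMS"
```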

Write some data to Bigtable

In Cloud Shell, write a few rows to Bigtable so the change log can write some data to BigQuery. As long as you write the data after the job is created, the changes appear. You don't have to wait for the job status to become Running.

    cbt -instance=BIGTABLE_INSTANCE_ID -project=PROJECT_ID \
      set bigquery-changestream-quickstart user123 cf:col1=abc
    cbt -instance=BIGTABLE_INSTANCE_ID -project=PROJECT_ID \
      set bigquery-changestream-quickstart user546 cf:col1=def
    cbt -instance=BIGTABLE_INSTANCE_ID -project=PROJECT_ID \
      set bigquery-changestream-quickstart user789 cf:col1=ghi

Replace the following:

- PROJECT_ID: the ID of the project that you are using

- BIGTABLE_INSTANCE_ID: the ID of the instance that contains the bigquery-changestream-quickstart table

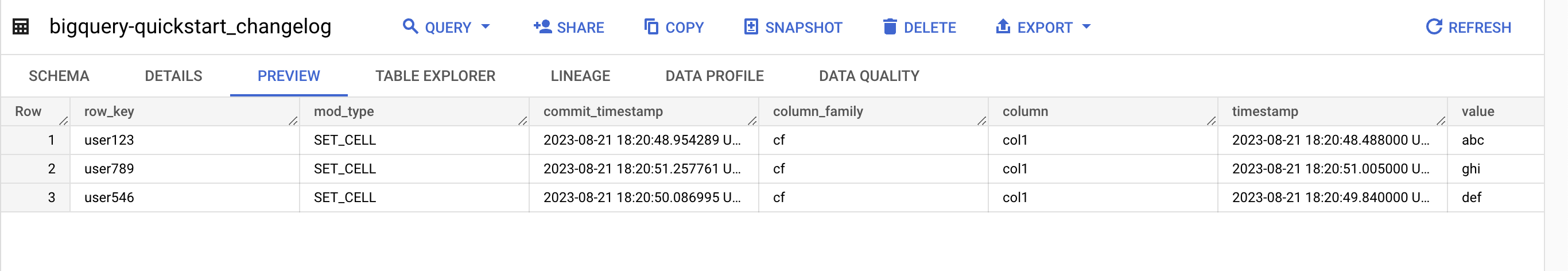

View the change logs in BigQuery

In the Google Cloud console, go to the BigQuery page.

In the Explorer pane, expand your project and the dataset bigtable_bigquery_quickstart.

Click the table bigquery-changestream-quickstart_changelog.

To see the change log, click Preview.
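The same change log could also be inspected from Cloud Shell with a query. This sketch selects all columns rather than assuming specific column names. The table name is quoted with backticks because it contains hyphens. PROJECT_ID is a placeholder and run is a hypothetical dry-run wrapper.

```shell
# Placeholder: replace with your project ID before running for real.
PROJECT_ID="PROJECT_ID"
DATASET="bigtable_bigquery_quickstart"
TABLE="${PROJECT_ID}.${DATASET}.bigquery-changestream-quickstart_changelog"

# Backticks are escaped so the shell passes them through to BigQuery.
QUERY="SELECT * FROM \`${TABLE}\` LIMIT 10"

# Hypothetical dry-run helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Show up to 10 change log rows using GoogleSQL.
run bq query --use_legacy_sql=false "$QUERY"
```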

Clean up

To avoid incurring charges to your Google Cloud account for the resources used on this page, follow these steps.

Disable the change stream on the table:

    gcloud bigtable instances tables update bigquery-changestream-quickstart \
      --project=PROJECT_ID --instance=BIGTABLE_INSTANCE_ID \
      --clear-change-stream-retention-period

Delete the table bigquery-changestream-quickstart:

    cbt --instance=BIGTABLE_INSTANCE_ID --project=PROJECT_ID deletetable bigquery-changestream-quickstart

Stop the change stream pipeline:

In the Google Cloud console, go to the Dataflow Jobs page.

Select your streaming job from the job list.

In the navigation, click Stop.

In the Stop job dialog, select Cancel, and then click Stop job.
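The console steps above have a command-line counterpart. As a sketch, you might list the active jobs to find the job ID and then cancel that job. REGION and JOB_ID are placeholders you need to fill in, and run is a hypothetical dry-run wrapper.

```shell
# Placeholders: replace with your own values before running for real.
PROJECT_ID="PROJECT_ID"
REGION="REGION"   # the region the job was launched in
JOB_ID="JOB_ID"   # copy this from the output of the list command

# Hypothetical dry-run helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Find the streaming job, then cancel it.
run gcloud dataflow jobs list --project="$PROJECT_ID" --region="$REGION" --status=active
run gcloud dataflow jobs cancel "$JOB_ID" --project="$PROJECT_ID" --region="$REGION"
```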

Delete the BigQuery dataset:

In the Google Cloud console, go to the BigQuery page.

In the Explorer pane, find the dataset bigtable_bigquery_quickstart and click it.

Click Delete, type delete, and then click Delete to confirm.
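The dataset could also be removed with the bq tool. In this sketch, -r deletes the tables inside the dataset and -f skips the confirmation prompt, so check the dataset name carefully. PROJECT_ID is a placeholder and run is a hypothetical dry-run wrapper.

```shell
# Placeholder: replace with your project ID before running for real.
PROJECT_ID="PROJECT_ID"
DATASET="bigtable_bigquery_quickstart"

# Hypothetical dry-run helper: prints the command unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Delete the dataset and every table in it without prompting.
run bq rm -r -f --dataset "${PROJECT_ID}:${DATASET}"
```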

Optional: Delete the instance if you created a new one for this quickstart:

cbt deleteinstance BIGTABLE_INSTANCE_ID