The following example creates and uses a Kerberos-enabled Dataproc cluster with the Ranger and Solr components to control user access to Hadoop, YARN, and Hive resources.

Notes:

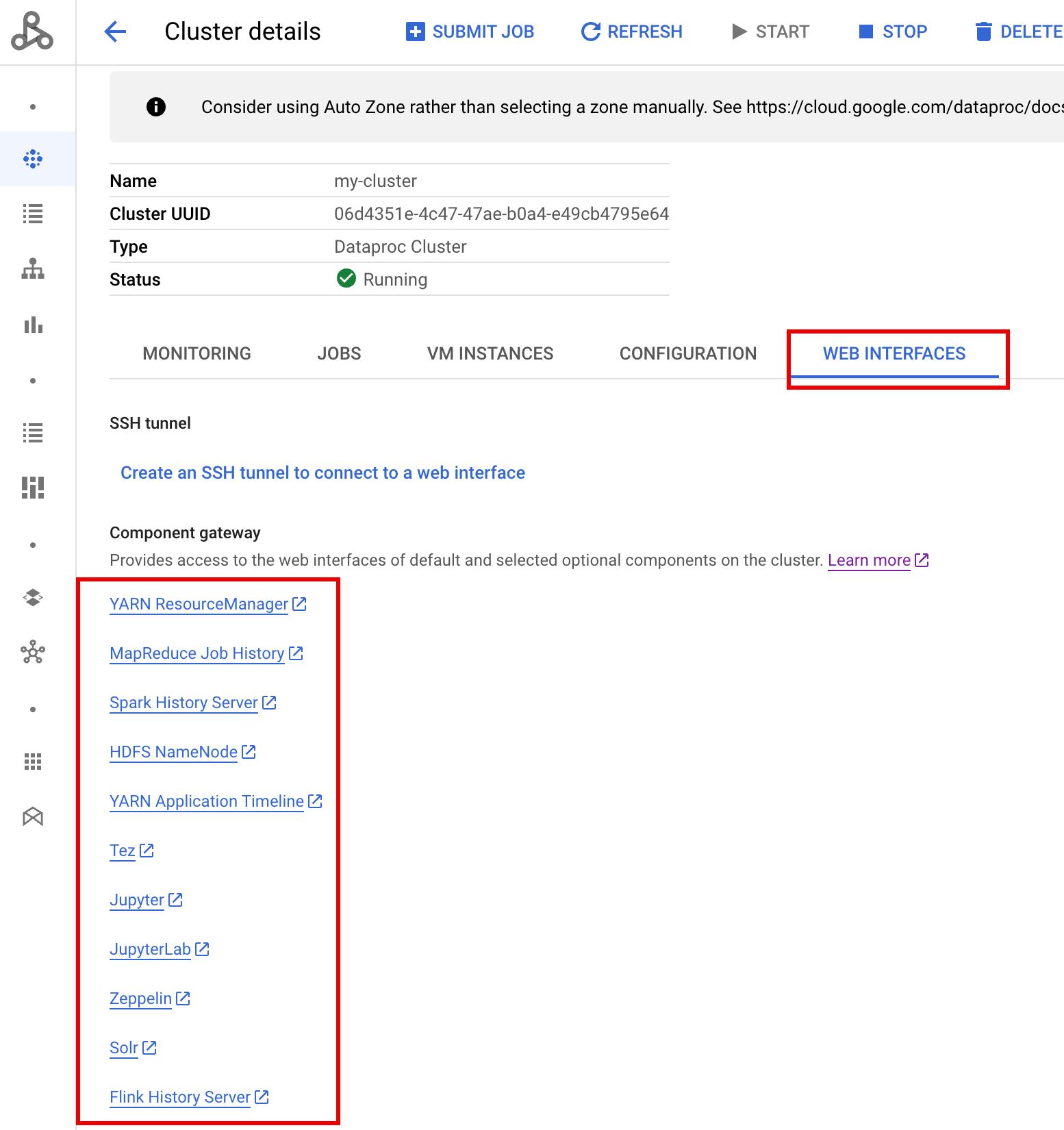

- The Ranger web UI can be accessed through the Component Gateway.
- In a Ranger cluster with Kerberos, Dataproc maps a Kerberos user to a system user by stripping the realm and instance from the Kerberos principal. For example, the Kerberos principal user1/cluster-m@MY.REALM is mapped to the system user user1, and Ranger policies are defined to allow or deny permissions for user1.
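The mapping rule described above can be sketched in a few lines of Python. This is only an illustration of the stated rule (drop the realm after "@" and the instance after "/"), not the code Dataproc or Hadoop actually runs; the function name `kerberos_to_system_user` is hypothetical.

```python
def kerberos_to_system_user(principal: str) -> str:
    """Return the primary component of a Kerberos principal.

    Illustrative only: strips the realm (everything after '@') and the
    instance (everything after '/'), as the note above describes.
    """
    without_realm = principal.split("@", 1)[0]
    return without_realm.split("/", 1)[0]


print(kerberos_to_system_user("user1/cluster-m@MY.REALM"))  # user1
```

Because only the primary component survives, Ranger policies name the short user (here user1) rather than the full principal.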

Create the cluster. The following gcloud command can be run in a local terminal window or from the project's Cloud Shell:

    gcloud dataproc clusters create cluster-name \
        --region=region \
        --optional-components=SOLR,RANGER \
        --enable-component-gateway \
        --properties="dataproc:ranger.kms.key.uri=projects/project-id/locations/global/keyRings/keyring/cryptoKeys/key,dataproc:ranger.admin.password.uri=gs://bucket/admin-password.encrypted" \
        --kerberos-root-principal-password-uri=gs://bucket/kerberos-root-principal-password.encrypted \
        --kerberos-kms-key=projects/project-id/locations/global/keyRings/keyring/cryptoKeys/key
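When the cluster is created from a script, the same flags can be assembled programmatically. The sketch below only builds the argument list. The helper name `build_create_command` is hypothetical, and it assumes, as in the example command, that one KMS key is used for both the Ranger admin password and the Kerberos root principal password.

```python
def build_create_command(cluster, region, kms_key, admin_pw_uri, kerberos_pw_uri):
    """Assemble the gcloud arguments shown above as a list of strings."""
    # The two dataproc: properties are passed as one comma-separated value.
    properties = ",".join([
        f"dataproc:ranger.kms.key.uri={kms_key}",
        f"dataproc:ranger.admin.password.uri={admin_pw_uri}",
    ])
    return [
        "gcloud", "dataproc", "clusters", "create", cluster,
        f"--region={region}",
        "--optional-components=SOLR,RANGER",
        "--enable-component-gateway",
        f"--properties={properties}",
        f"--kerberos-root-principal-password-uri={kerberos_pw_uri}",
        f"--kerberos-kms-key={kms_key}",
    ]


# Placeholder values copied from the command above.
key = "projects/project-id/locations/global/keyRings/keyring/cryptoKeys/key"
command = build_create_command(
    "cluster-name",
    "region",
    key,
    "gs://bucket/admin-password.encrypted",
    "gs://bucket/kerberos-root-principal-password.encrypted",
)
print(" ".join(command))
```

The list form can be handed to `subprocess.run(command, check=True)` without shell quoting concerns.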

After the cluster is running, go to the Dataproc Clusters page in the Google Cloud console, then select the cluster's name to open the Cluster details page. Click the Web Interfaces tab to display a list of Component Gateway links to the web interfaces of the default and optional components installed on the cluster. Click the Ranger link.

Sign in to Ranger by entering the "admin" username and the Ranger admin password.

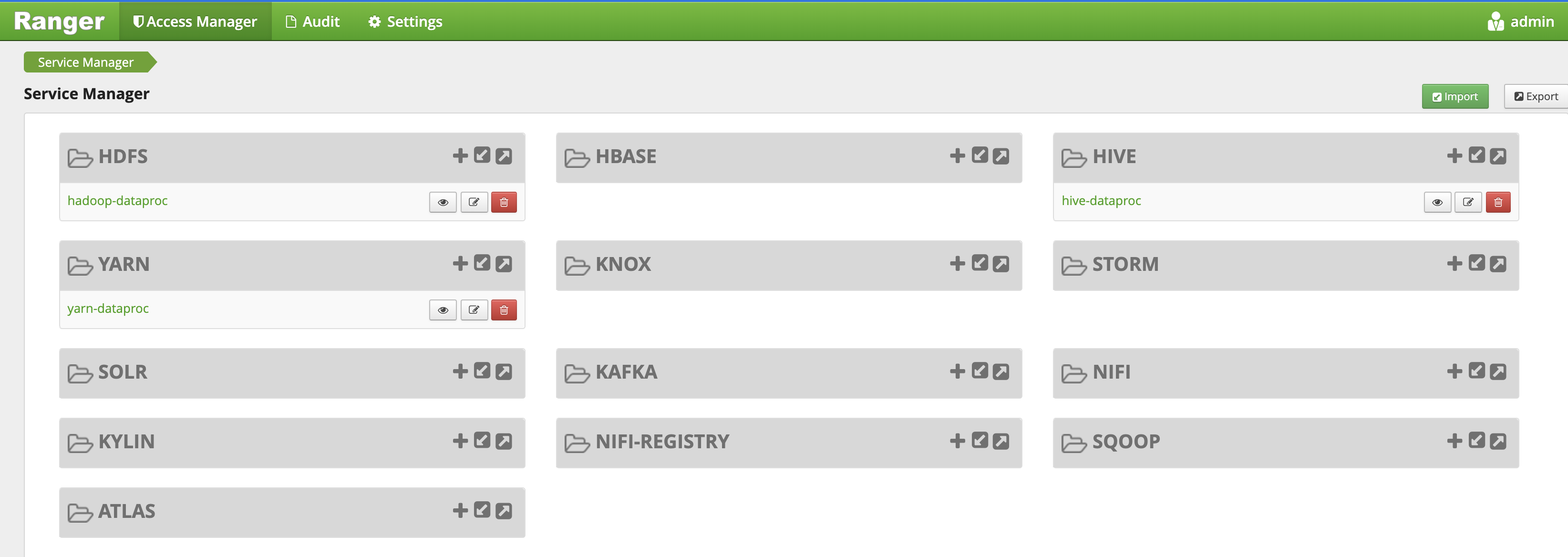

The Ranger admin UI opens in a local browser.

YARN access policy

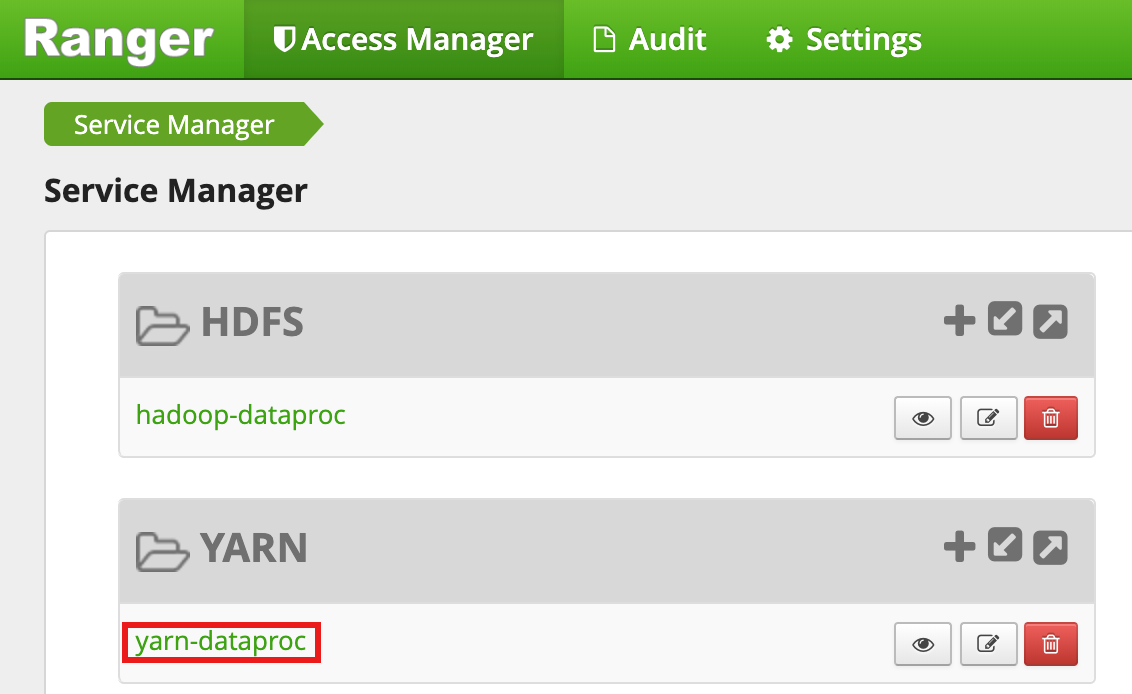

This example creates a Ranger policy to allow and deny user access to the YARN root.default queue.

Select yarn-dataproc from the Ranger Admin UI.

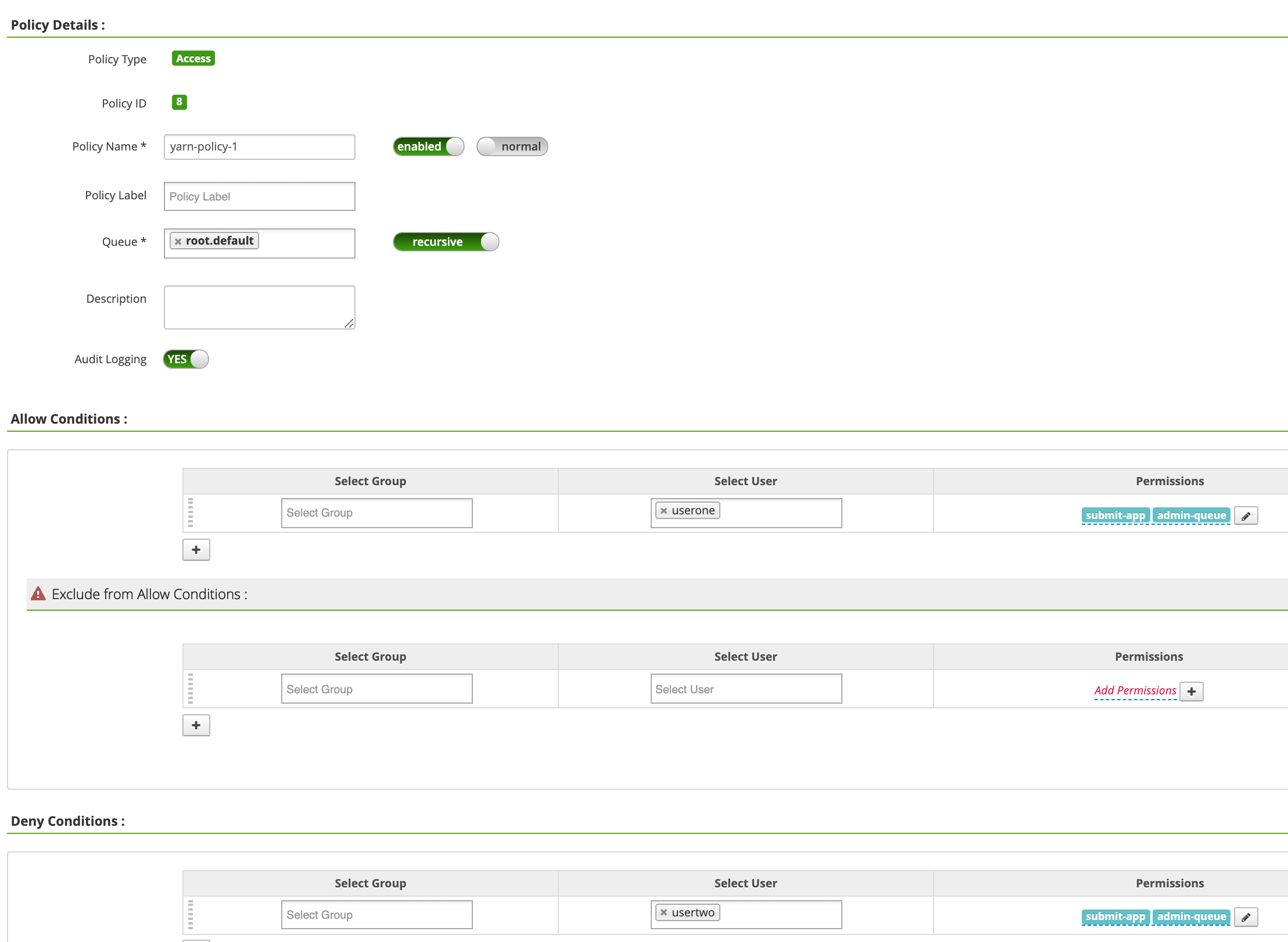

On the yarn-dataproc Policies page, click Add New Policy. On the Create Policy page, the following fields are entered or selected:

- Policy Name: "yarn-policy-1"
- Queue: "root.default"
- Audit Logging: "Yes"
- Allow Conditions:
  - Select User: "userone"
  - Permissions: "Select All" to grant all permissions
- Deny Conditions:
  - Select User: "usertwo"
  - Permissions: "Select All" to deny all permissions

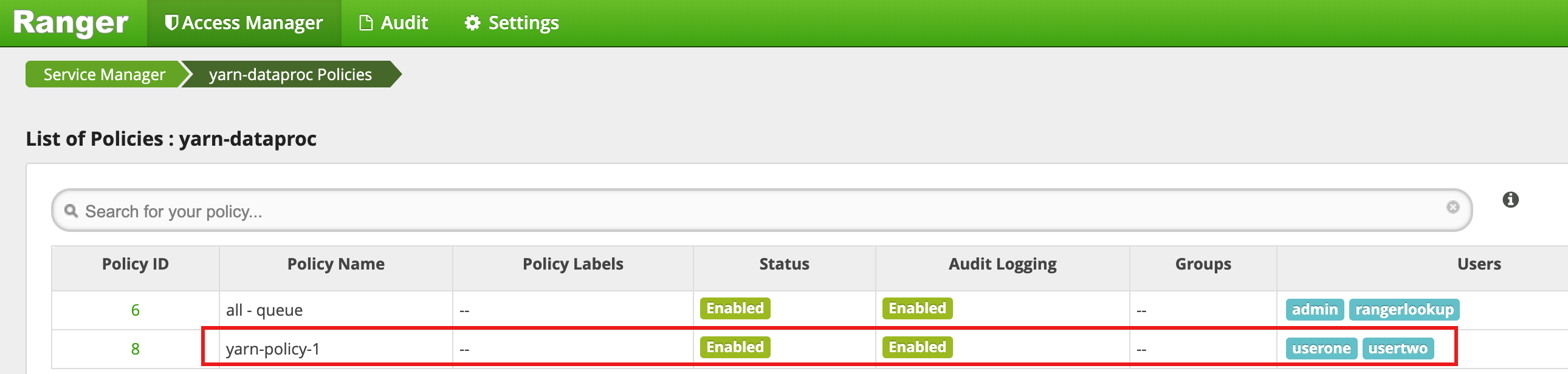

Click Add to save the policy. The policy is listed on the yarn-dataproc Policies page.
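For readers who prefer to see the policy as data, the form fields above correspond roughly to the JSON document sketched below. The field names (`policyItems`, `denyPolicyItems`, `resources`) and the YARN access types (`submit-app`, `admin-queue`) follow the Apache Ranger policy model as I understand it and should be treated as assumptions to verify against the Ranger version on the cluster. The helper `yarn_policy` is hypothetical.

```python
import json


def yarn_policy(name, queue, allow_user, deny_user):
    """Build a Ranger-style policy document mirroring the form fields above."""
    # "Select All" in the UI is assumed to mean both YARN access types.
    all_access = [
        {"type": "submit-app", "isAllowed": True},
        {"type": "admin-queue", "isAllowed": True},
    ]
    return {
        "service": "yarn-dataproc",
        "name": name,
        "isAuditEnabled": True,
        "resources": {"queue": {"values": [queue]}},
        "policyItems": [{"users": [allow_user], "accesses": all_access}],
        "denyPolicyItems": [{"users": [deny_user], "accesses": all_access}],
    }


policy = yarn_policy("yarn-policy-1", "root.default", "userone", "usertwo")
print(json.dumps(policy, indent=2))
```

A document of this shape is what Ranger's public REST API accepts when policies are created outside the UI. This walkthrough uses the UI only.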

Run a Hadoop mapreduce job in the master SSH session window as userone:

    userone@example-cluster-m:~$ hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar pi 5 10

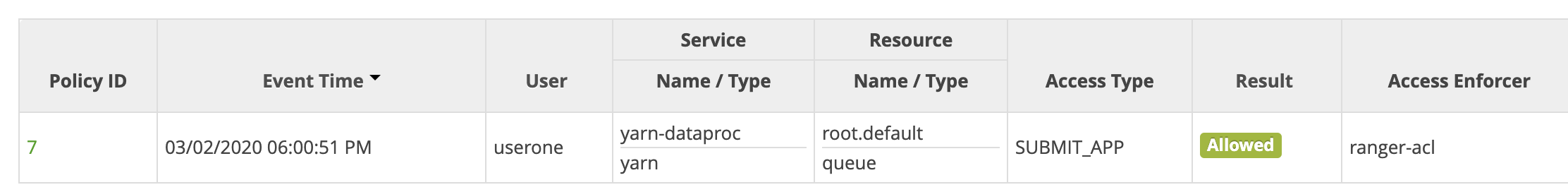

- The Ranger UI shows that userone was allowed to submit the job.

Run a Hadoop mapreduce job from the master VM SSH session window as usertwo:

    usertwo@example-cluster-m:~$ hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar pi 5 10

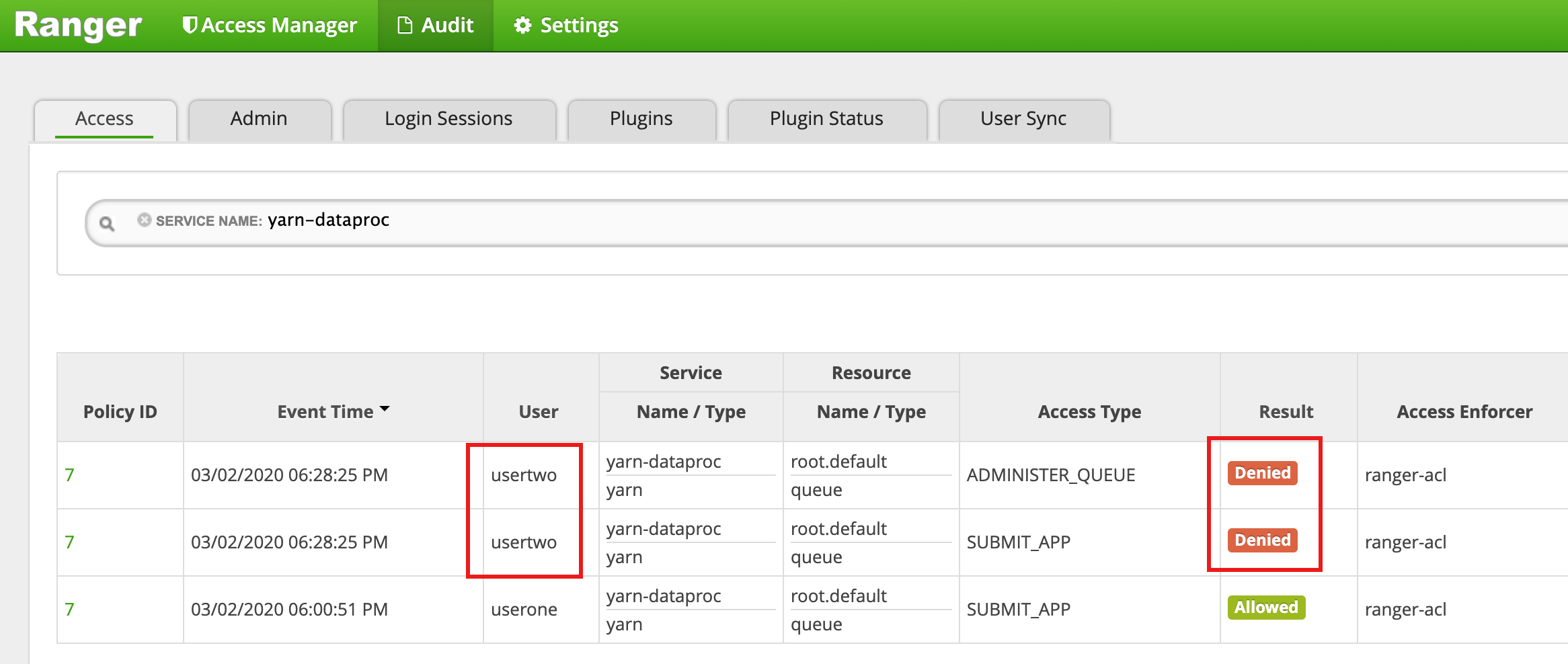

- The Ranger UI shows that usertwo was denied access to submit the job.
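The two job submissions above show how the allow and deny conditions of one policy resolve. The following is a deliberately simplified model of that decision, assuming deny conditions are checked before allow conditions. Real Ranger evaluation also considers groups, roles, exceptions, resource matching, and multiple policies. The function name and the `allow_users` and `deny_users` keys are hypothetical.

```python
def evaluate(policy: dict, user: str) -> str:
    """Simplified single-policy decision: deny is checked before allow."""
    if user in policy["deny_users"]:
        return "DENIED"
    if user in policy["allow_users"]:
        return "ALLOWED"
    # The policy says nothing about this user; other policies or the
    # service's native permissions would decide in a real deployment.
    return "NOT_DETERMINED"


yarn_policy_1 = {"allow_users": {"userone"}, "deny_users": {"usertwo"}}
for user in ("userone", "usertwo", "userthree"):
    print(user, evaluate(yarn_policy_1, user))
```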

HDFS access policy

This example creates a Ranger policy to allow and deny user access to the HDFS /tmp directory.

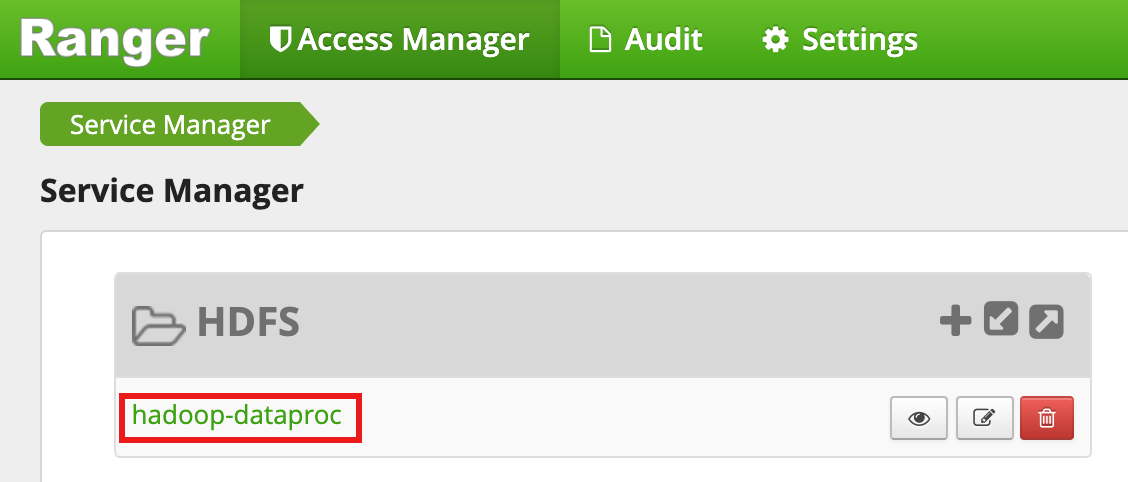

Select hadoop-dataproc from the Ranger Admin UI.

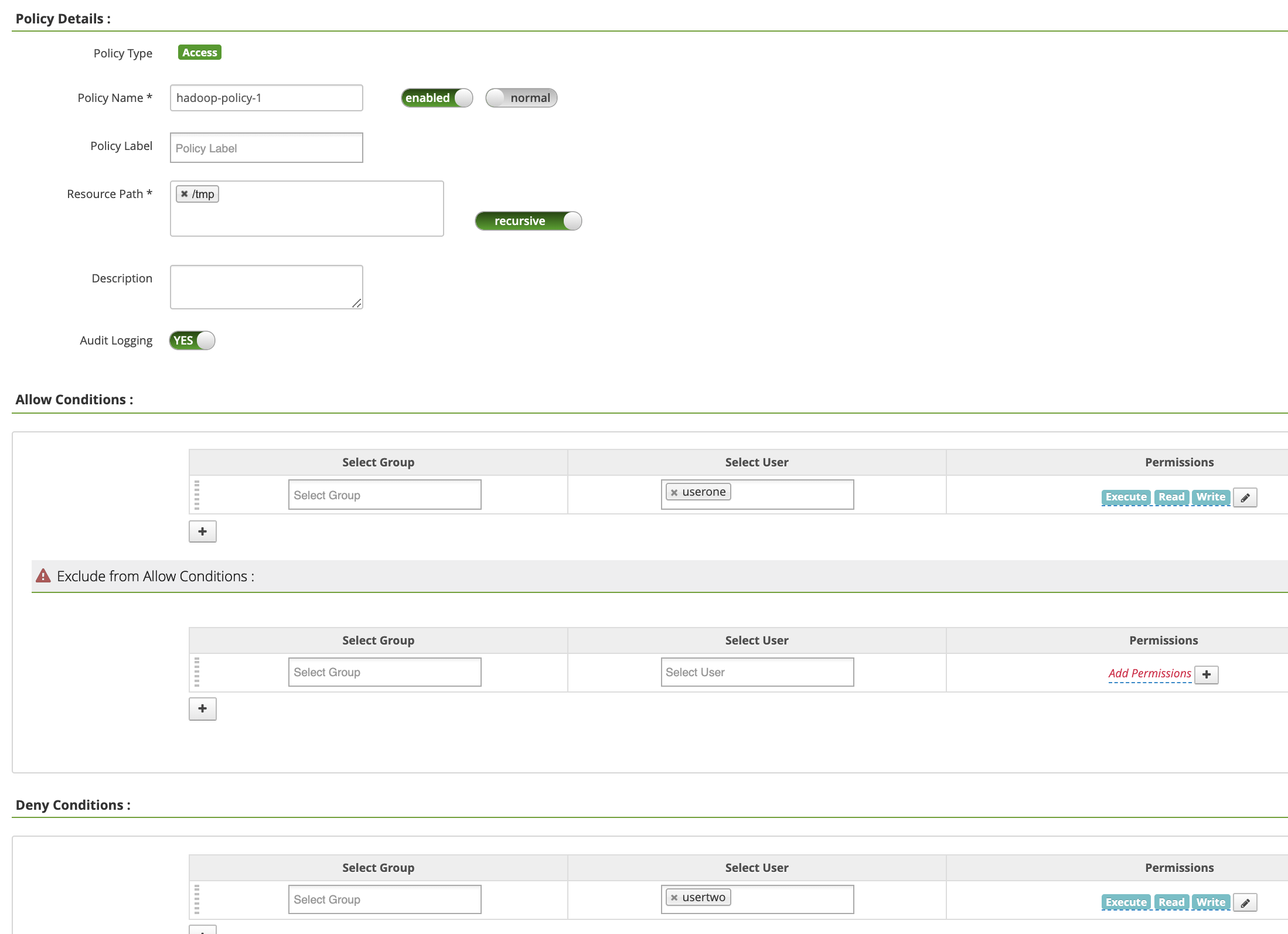

On the hadoop-dataproc Policies page, click Add New Policy. On the Create Policy page, the following fields are entered or selected:

- Policy Name: "hadoop-policy-1"
- Resource Path: "/tmp"
- Audit Logging: "Yes"
- Allow Conditions:
  - Select User: "userone"
  - Permissions: "Select All" to grant all permissions
- Deny Conditions:
  - Select User: "usertwo"
  - Permissions: "Select All" to deny all permissions

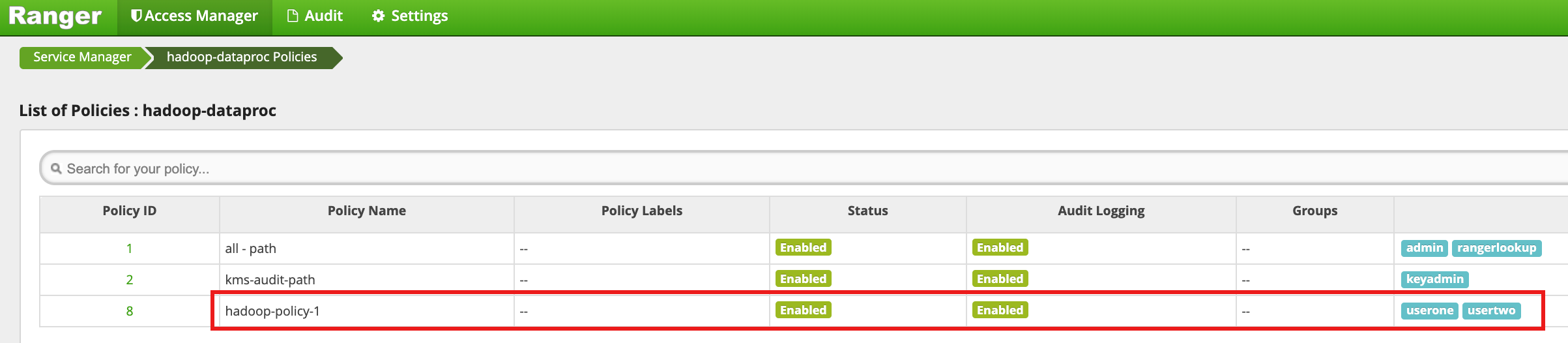

Click Add to save the policy. The policy is listed on the hadoop-dataproc Policies page.

Access the HDFS /tmp directory as userone:

    userone@example-cluster-m:~$ hadoop fs -ls /tmp

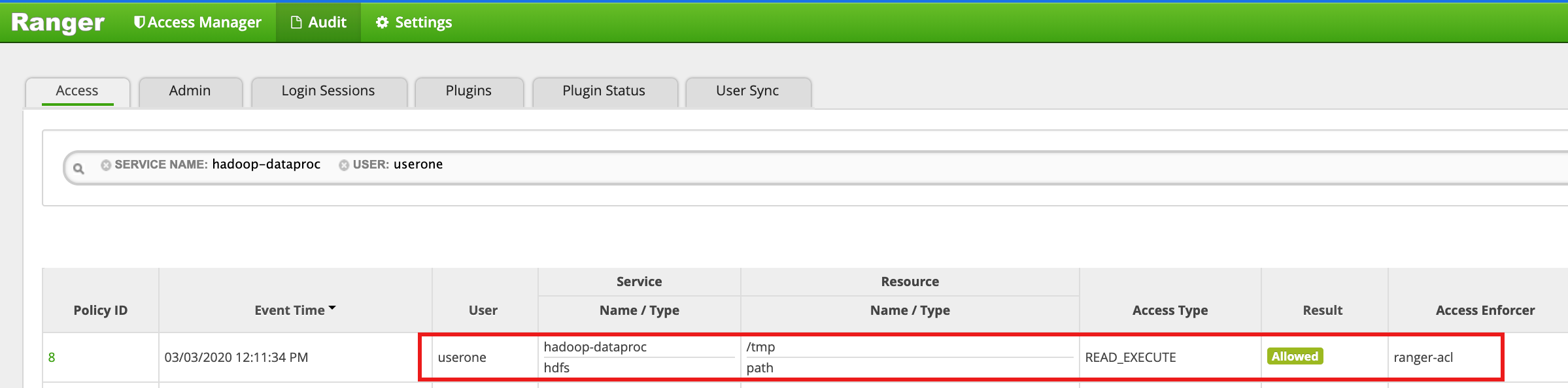

- The Ranger UI shows that userone was allowed access to the HDFS /tmp directory.

Access the HDFS /tmp directory as usertwo:

    usertwo@example-cluster-m:~$ hadoop fs -ls /tmp

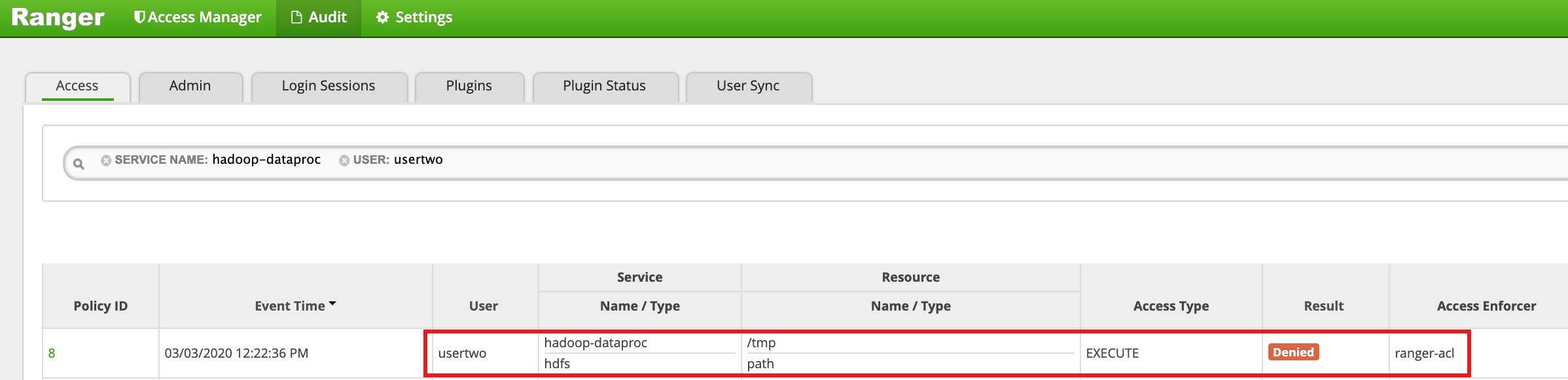

- The Ranger UI shows that usertwo was denied access to the HDFS /tmp directory.

Hive access policy

This example creates a Ranger policy to allow and deny user access to a Hive table.

Create a small employee table using the hive CLI on the master instance:

    hive> CREATE TABLE IF NOT EXISTS employee (eid int, name String);
    hive> INSERT INTO employee VALUES (1, 'bob'), (2, 'alice'), (3, 'john');

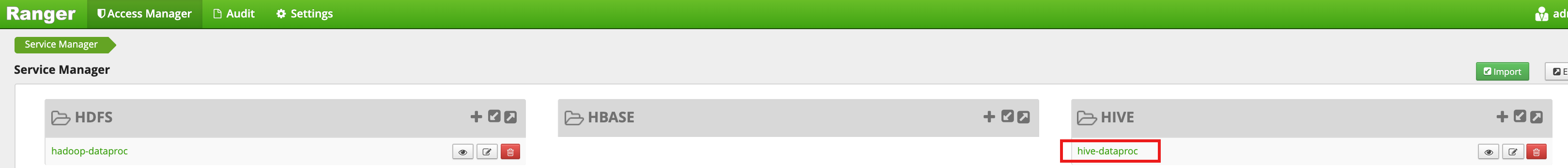

Select hive-dataproc from the Ranger Admin UI.

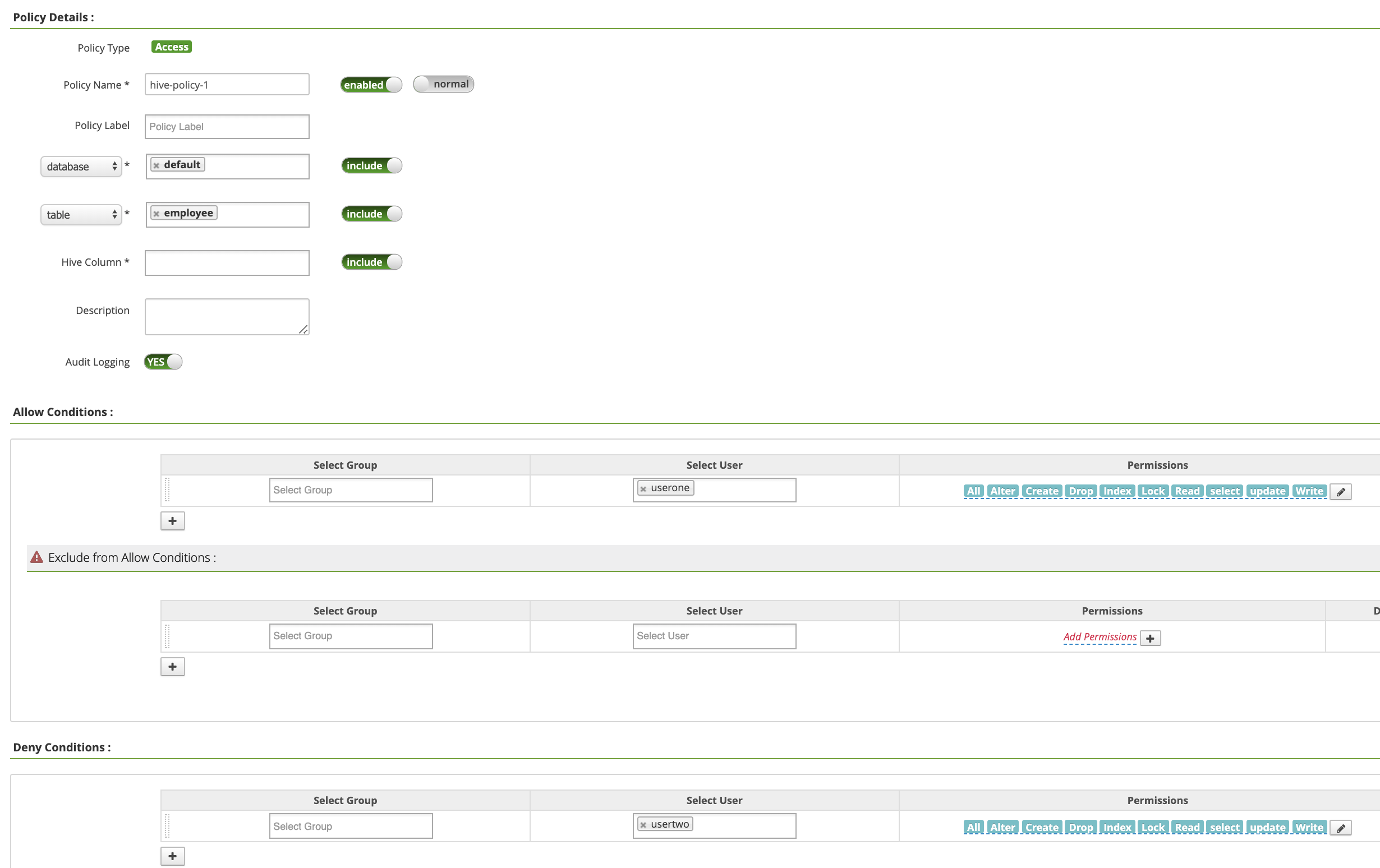

On the hive-dataproc Policies page, click Add New Policy. On the Create Policy page, the following fields are entered or selected:

- Policy Name: "hive-policy-1"
- database: "default"
- table: "employee"
- Hive Column: "*"
- Audit Logging: "Yes"
- Allow Conditions:
  - Select User: "userone"
  - Permissions: "Select All" to grant all permissions
- Deny Conditions:
  - Select User: "usertwo"
  - Permissions: "Select All" to deny all permissions

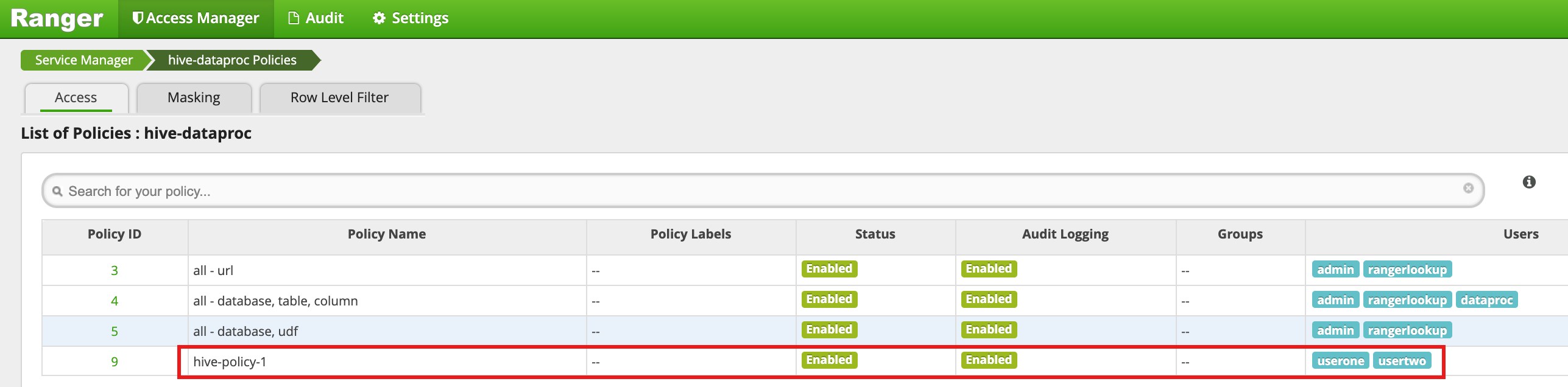

Click Add to save the policy. The policy is listed on the hive-dataproc Policies page.

Run a query from the master VM SSH session against the Hive employee table as userone:

    userone@example-cluster-m:~$ beeline -u "jdbc:hive2://$(hostname -f):10000/default;principal=hive/$(hostname -f)@REALM" -e "select * from employee;"

The userone query succeeds:

    Connected to: Apache Hive (version 2.3.6)
    Driver: Hive JDBC (version 2.3.6)
    Transaction isolation: TRANSACTION_REPEATABLE_READ
    +---------------+----------------+
    | employee.eid  | employee.name  |
    +---------------+----------------+
    | 1             | bob            |
    | 2             | alice          |
    | 3             | john           |
    +---------------+----------------+
    3 rows selected (2.033 seconds)

Run a query from the master VM SSH session against the Hive employee table as usertwo:

    usertwo@example-cluster-m:~$ beeline -u "jdbc:hive2://$(hostname -f):10000/default;principal=hive/$(hostname -f)@REALM" -e "select * from employee;"

usertwo is denied access to the table:

    Error: Could not open client transport with JDBC Uri: ... Permission denied: user=usertwo, access=EXECUTE, inode="/tmp/hive"
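The JDBC URL passed to beeline in both queries combines the master's fully qualified host name (from `hostname -f`) with the HiveServer2 service principal. REALM in those commands stands for the cluster's Kerberos realm. The sketch below shows how the URL is composed. The helper name `hive_jdbc_url` and the sample host and realm values are hypothetical.

```python
def hive_jdbc_url(host: str, realm: str, database: str = "default", port: int = 10000) -> str:
    """Compose a Kerberos-enabled HiveServer2 JDBC URL like the one used above."""
    return f"jdbc:hive2://{host}:{port}/{database};principal=hive/{host}@{realm}"


# Hypothetical host name and realm, for illustration only.
print(hive_jdbc_url("example-cluster-m.example.internal", "MY.REALM"))
```

The principal in the URL identifies the Hive service, not the person running the query. The querying user is taken from the Kerberos ticket held in the SSH session.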

Fine-grained Hive access

Ranger supports Masking and Row Level Filters on Hive. This example builds on the previous hive-policy-1 by adding masking and filter policies.

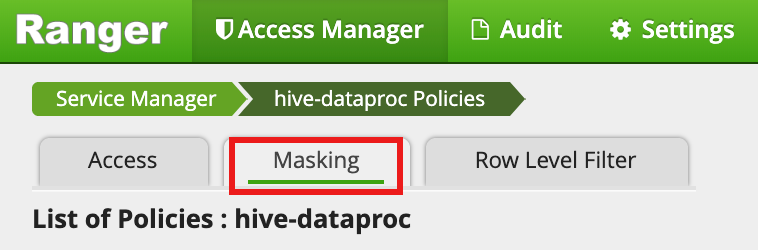

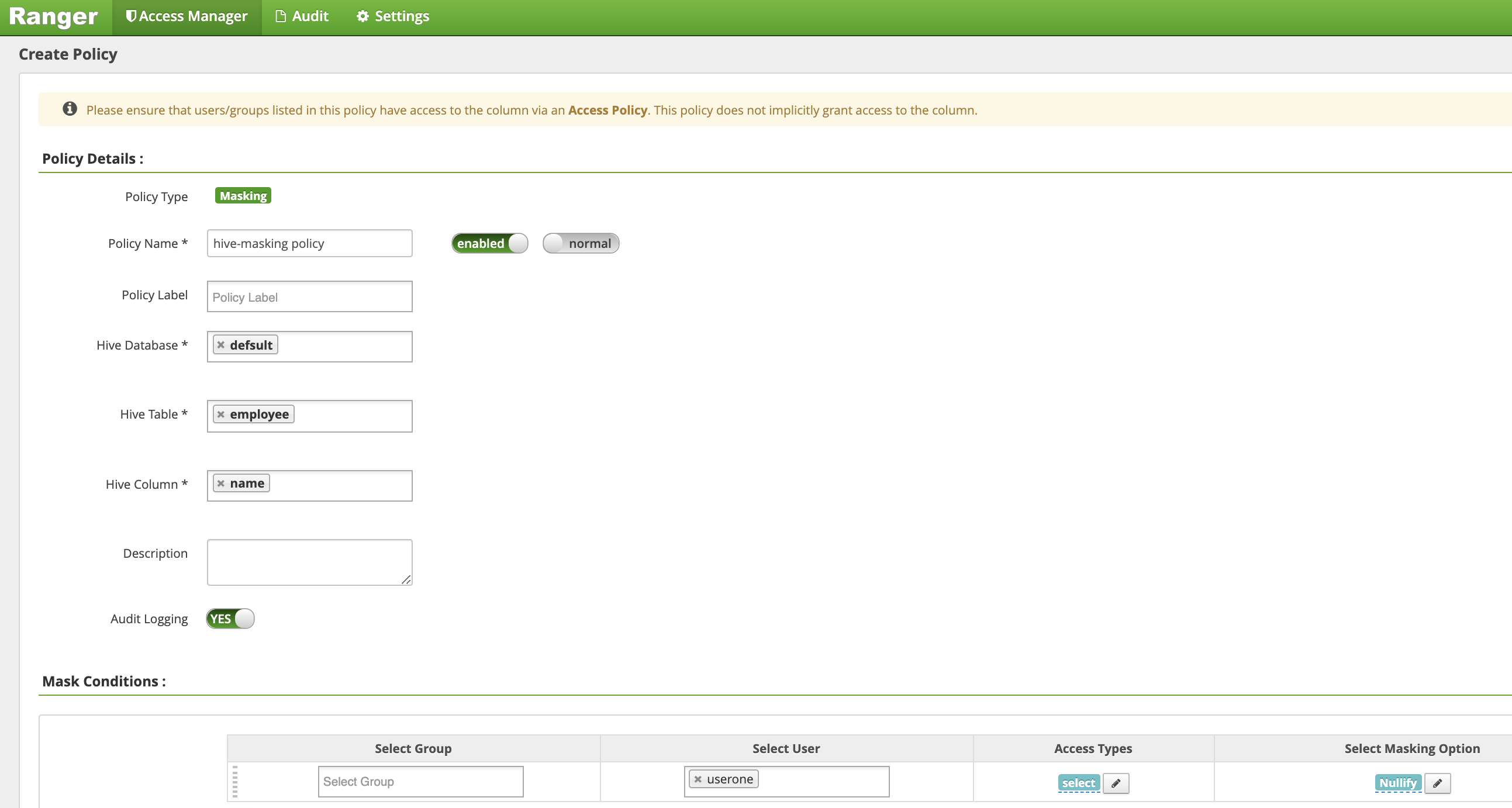

Select hive-dataproc from the Ranger Admin UI, then select the Masking tab and click Add New Policy.

On the Create Policy page, the following fields are entered or selected to create a policy that masks (nullifies) the employee name column:

- Policy Name: "hive-masking policy"
- database: "default"
- table: "employee"
- Hive Column: "name"
- Audit Logging: "Yes"
- Mask Conditions:
  - Select User: "userone"
  - Access Types: "select" add/edit permissions
  - Select Masking Option: "nullify"

Click Add to save the policy.

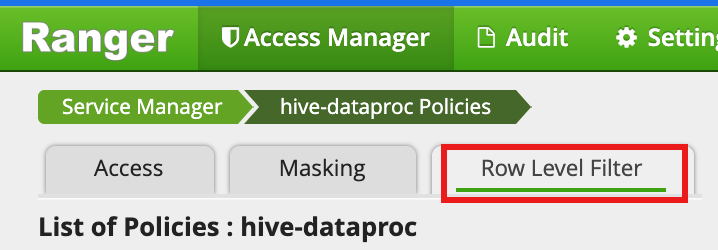

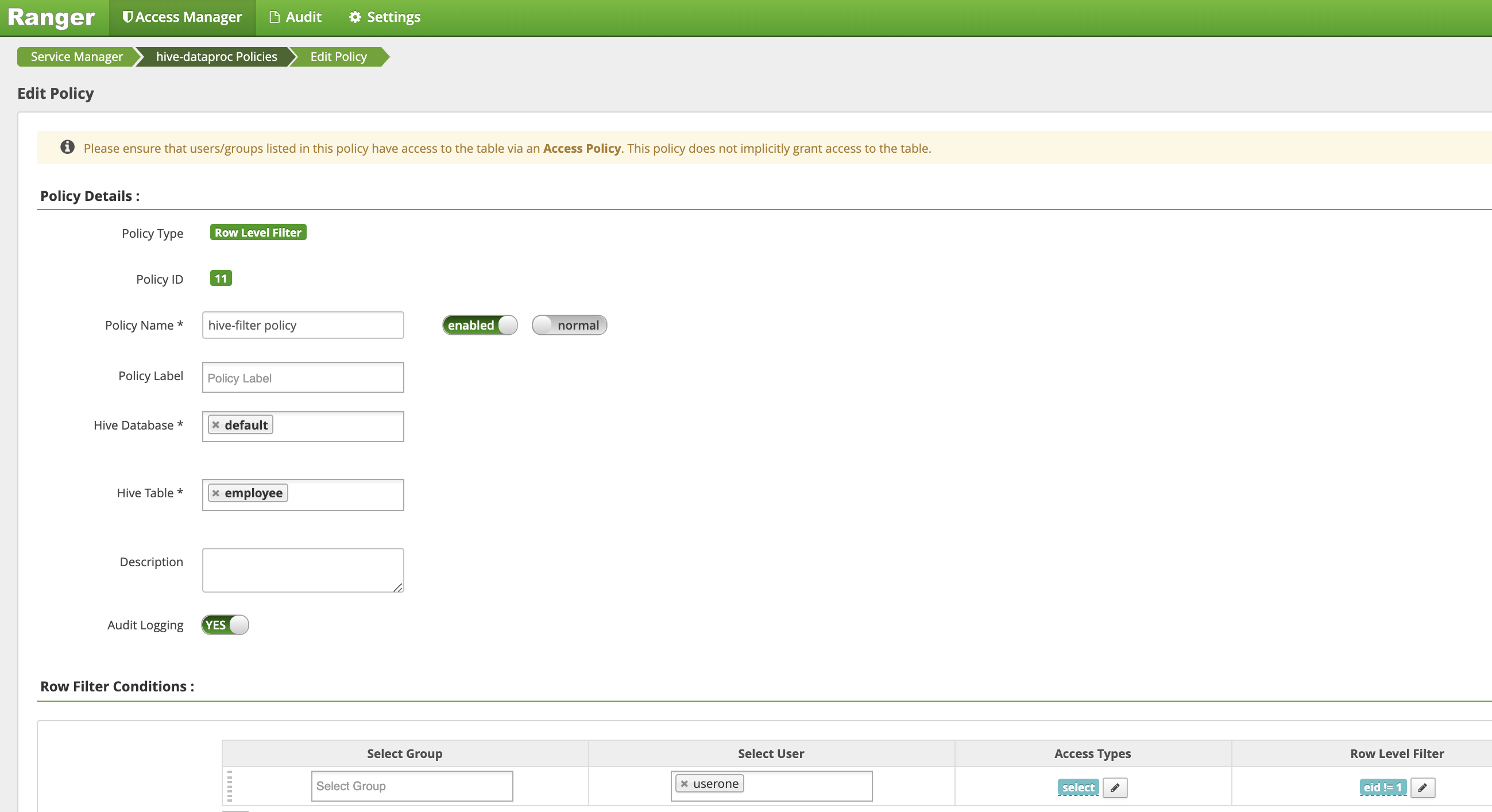

Select hive-dataproc from the Ranger Admin UI, then select the Row Level Filter tab and click Add New Policy.

On the Create Policy page, the following fields are entered or selected to create a policy that filters (returns) rows where eid is not equal to 1:

- Policy Name: "hive-filter policy"
- Hive Database: "default"
- Hive Table: "employee"
- Audit Logging: "Yes"
- Row Filter Conditions:
  - Select User: "userone"
  - Access Types: "select" add/edit permissions
  - Row Level Filter: "eid != 1" filter expression

Click Add to save the policy.
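As with the access policy, the masking and row-filter forms can be pictured as policy documents. The sketch below assumes the Apache Ranger policy model, in which `policyType` 1 is a data-masking policy and 2 is a row-filter policy, and in which `MASK_NULL` is the mask type behind the "nullify" option. Treat these field names and values as assumptions to check against the installed Ranger version. Both helper functions are hypothetical.

```python
SELECT_ACCESS = [{"type": "select", "isAllowed": True}]


def masking_policy(user: str) -> dict:
    """Data-masking policy that nullifies employee.name for one user."""
    return {
        "service": "hive-dataproc",
        "name": "hive-masking policy",
        "policyType": 1,  # assumed: 1 = data masking
        "isAuditEnabled": True,
        "resources": {
            "database": {"values": ["default"]},
            "table": {"values": ["employee"]},
            "column": {"values": ["name"]},
        },
        "dataMaskPolicyItems": [{
            "users": [user],
            "accesses": SELECT_ACCESS,
            "dataMaskInfo": {"dataMaskType": "MASK_NULL"},
        }],
    }


def row_filter_policy(user: str) -> dict:
    """Row-filter policy that returns only rows where eid != 1."""
    return {
        "service": "hive-dataproc",
        "name": "hive-filter policy",
        "policyType": 2,  # assumed: 2 = row-level filter
        "isAuditEnabled": True,
        "resources": {
            "database": {"values": ["default"]},
            "table": {"values": ["employee"]},
        },
        "rowFilterPolicyItems": [{
            "users": [user],
            "accesses": SELECT_ACCESS,
            "rowFilterInfo": {"filterExpr": "eid != 1"},
        }],
    }
```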

Repeat the previous query from the master VM SSH session against the Hive employee table as userone:

    userone@example-cluster-m:~$ beeline -u "jdbc:hive2://$(hostname -f):10000/default;principal=hive/$(hostname -f)@REALM" -e "select * from employee;"

The query returns with the name column masked out and bob (eid=1) filtered from the results:

    Transaction isolation: TRANSACTION_REPEATABLE_READ
    +---------------+----------------+
    | employee.eid  | employee.name  |
    +---------------+----------------+
    | 2             | NULL           |
    | 3             | NULL           |
    +---------------+----------------+
    2 rows selected (0.47 seconds)
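The combined effect of the two policies on the three-row employee table can be reproduced with a small in-memory model. This is only a sketch of the observable result, not of how Ranger or Hive rewrite the query. The function name `apply_policies` is hypothetical.

```python
# The rows inserted into the employee table earlier in this example.
rows = [(1, "bob"), (2, "alice"), (3, "john")]


def apply_policies(table):
    """Apply the row filter (eid != 1), then nullify the name column."""
    filtered = [row for row in table if row[0] != 1]
    return [(eid, None) for eid, _name in filtered]


print(apply_policies(rows))  # [(2, None), (3, None)]
```

Python's `None` plays the role of the NULL values shown in the beeline output.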