Langkah 1: Buat beban kerja

Halaman ini memandu Anda melalui langkah awal penyiapan fondasi data, inti dari Cortex Framework. Dibangun di atas penyimpanan BigQuery, fondasi data mengatur data masuk Anda dari berbagai sumber. Data yang teratur ini menyederhanakan analisis dan penerapannya dalam pengembangan AI.

Menyiapkan integrasi data

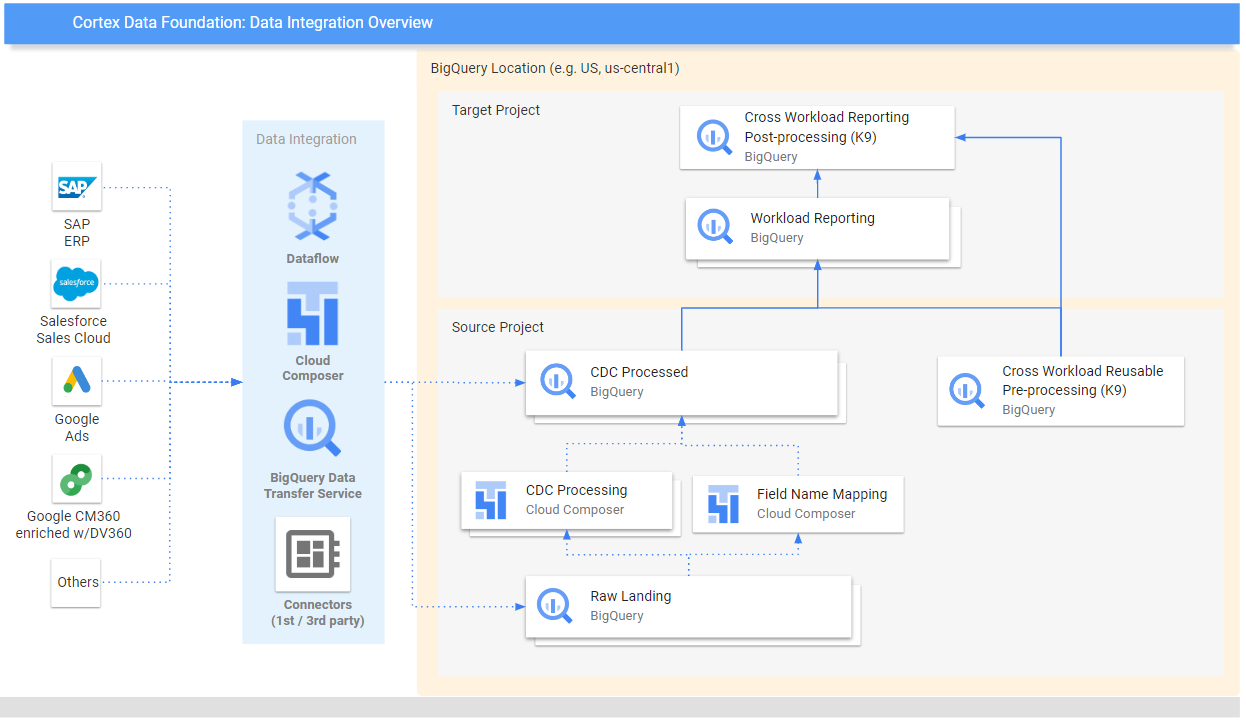

Mulai dengan menentukan beberapa parameter utama yang akan berfungsi sebagai cetak biru untuk mengatur dan menggunakan data Anda secara efisien dalam Cortex Framework. Ingat, parameter ini dapat bervariasi bergantung pada workload tertentu, alur data yang Anda pilih, dan mekanisme integrasi. Diagram berikut memberikan ringkasan integrasi data dalam Fondasi Data Cortex Framework:

Tentukan parameter berikut sebelum deployment untuk pemanfaatan data yang efisien dan efektif dalam Cortex Framework.

Project

- Project sumber: Project tempat data mentah Anda berada. Anda memerlukan setidaknya satu project untuk menyimpan data dan menjalankan proses deployment. Google Cloud

- Project target (opsional): Project tempat Cortex Framework Data Foundation menyimpan model data yang diprosesnya. Project ini dapat sama dengan project sumber, atau berbeda, bergantung pada kebutuhan Anda.

Jika Anda ingin memiliki kumpulan project dan set data terpisah untuk setiap beban kerja (misalnya, satu set project sumber dan target untuk SAP dan set project target dan sumber yang berbeda untuk Salesforce), jalankan deployment terpisah untuk setiap beban kerja. Untuk mengetahui informasi selengkapnya, lihat Menggunakan project yang berbeda untuk memisahkan akses di bagian langkah opsional.

Model data

- Deploy Model: Pilih apakah Anda perlu men-deploy model untuk semua beban kerja atau hanya satu set model (misalnya, SAP, Salesforce, dan Meta). Untuk mengetahui informasi selengkapnya, lihat Sumber data dan workload yang tersedia.

Set data BigQuery

- Set Data Sumber (Mentah): Set data BigQuery tempat data sumber direplikasi atau tempat data pengujian dibuat. Sebaiknya gunakan set data terpisah, satu untuk setiap sumber data. Misalnya, satu set data mentah untuk SAP dan satu set data mentah untuk Google Ads. Set data ini termasuk dalam project sumber.

- Set Data CDC: Set data BigQuery tempat data yang diproses CDC mendarat dengan data terbaru yang tersedia. Beberapa workload memungkinkan pemetaan nama kolom. Sebaiknya buat set data CDC terpisah untuk setiap sumber. Misalnya, satu set data CDC untuk SAP, dan satu set data CDC untuk Salesforce. Set data ini termasuk dalam project sumber.

- Set Data Pelaporan Target: Set data BigQuery tempat model data standar Data Foundation di-deploy. Sebaiknya buat set data pelaporan terpisah untuk setiap sumber. Misalnya, satu set data pelaporan untuk SAP dan satu set data pelaporan untuk Salesforce. Set data ini dibuat secara otomatis selama deployment jika belum ada. Set data ini termasuk dalam project Target.

- Memproses Kumpulan Data K9: Kumpulan data BigQuery tempat komponen DAG lintas beban kerja yang dapat digunakan kembali, seperti dimensi

time, dapat di-deploy. Beban kerja memiliki dependensi pada set data ini kecuali jika dimodifikasi. Set data ini dibuat secara otomatis selama deployment jika belum ada. Set data ini termasuk dalam project sumber. - Pemrosesan pasca-Kumpulan Data K9: Kumpulan data BigQuery tempat pelaporan lintas beban kerja dan DAG sumber eksternal tambahan (misalnya, penyerapan Google Trends) dapat di-deploy. Set data ini dibuat secara otomatis selama deployment jika belum ada. Set data ini termasuk dalam project Target.

Opsional: Buat contoh data

Cortex Framework dapat membuat contoh data dan tabel untuk Anda jika Anda tidak memiliki akses ke data Anda sendiri, atau alat replikasi untuk menyiapkan data, atau bahkan jika Anda hanya ingin melihat cara kerja Cortex Framework. Namun, Anda tetap perlu membuat dan mengidentifikasi set data CDC dan Raw terlebih dahulu.

Buat set data BigQuery untuk data mentah dan CDC per sumber data, dengan petunjuk berikut.

Konsol

Buka halaman BigQuery di konsol Google Cloud .

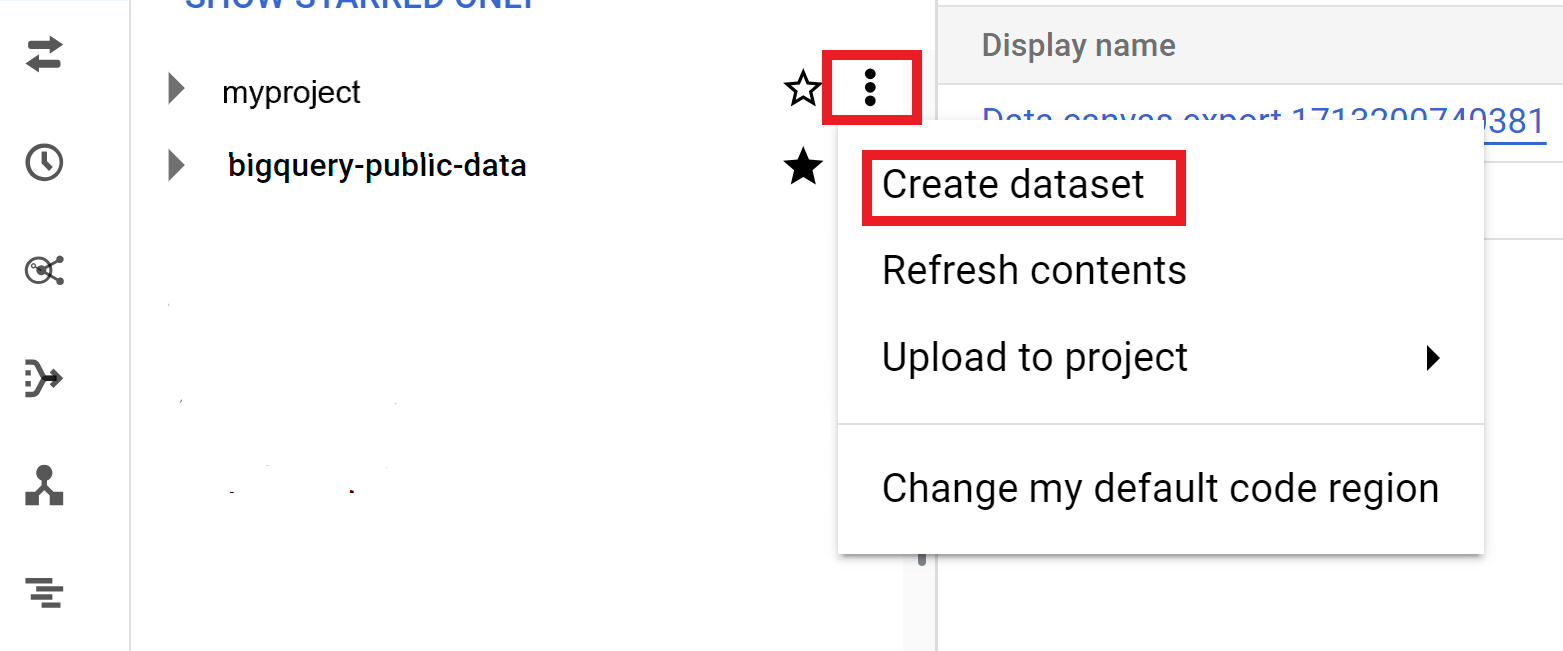

Di panel Explorer, pilih project tempat Anda ingin membuat set data.

Luaskan opsi Actions, lalu klik Create dataset:

Di halaman Create dataset:

- Untuk ID Set Data, masukkan nama set data yang unik.

Untuk Location type, pilih lokasi geografis untuk set data. Setelah set data dibuat, lokasi tidak dapat diubah.

Opsional. Untuk mengetahui detail penyesuaian selengkapnya untuk set data Anda, lihat Membuat set data: Konsol.

Klik Create dataset.

BigQuery

Buat set data baru untuk data mentah dengan menyalin perintah berikut:

bq --location= LOCATION mk -d SOURCE_PROJECT: DATASET_RAWGanti kode berikut:

LOCATIONdengan lokasi set data.SOURCE_PROJECTdengan ID project sumber Anda.DATASET_RAWdengan nama set data Anda untuk data mentah. Contoh,CORTEX_SFDC_RAW.

Buat set data baru untuk data CDC dengan menyalin perintah berikut:

bq --location=LOCATION mk -d SOURCE_PROJECT: DATASET_CDCGanti kode berikut:

LOCATIONdengan lokasi set data.SOURCE_PROJECTdengan ID project sumber Anda.DATASET_CDCdengan nama set data Anda untuk data CDC. Contoh,CORTEX_SFDC_CDC.

Pastikan set data telah dibuat dengan perintah berikut:

bq lsOpsional. Untuk mengetahui informasi selengkapnya tentang cara membuat set data, lihat Membuat set data.

Langkah berikutnya

Setelah Anda menyelesaikan langkah ini, lanjutkan ke langkah-langkah deployment berikut:

- Menetapkan workload (halaman ini).

- Clone repositori.

- Tentukan mekanisme integrasi.

- Menyiapkan komponen.

- Konfigurasi deployment.

- Jalankan deployment.