This page describes how to import into an AlloyDB database a DMP

file created by the pg_dump tool using the custom or directory format.

To import a file created by the pg_dump tool using the plain format, see

Import a SQL file.

The procedure to perform the import involves these tasks:

Upload the DMP file to a Cloud Storage bucket.

Prepare a client host to perform the import operation.

Import the DMP file into the database.

Clean up the resources created to perform the procedure.

Before you begin

- You must have the Owner (

roles/owner) or Editor (roles/editor) basic IAM role in the Google Cloud project you are using, or you must have these predefined IAM roles:- AlloyDB Admin (

roles/alloydb.admin) or AlloyDB Viewer (roles/alloydb.viewer) - Storage Admin (

roles/storage.admin) - Compute Instance Admin (v1) (

roles/compute.instanceAdmin.v1)

- AlloyDB Admin (

Upload the DMP file

To upload the DMP file, you create a Cloud Storage bucket and then upload the DMP file to that bucket.

Create a standard storage, regional storage bucket in the project and region where your AlloyDB database is located.

Upload the DMP file to the storage bucket you created.

Prepare a client host

To prepare a client host to perform the import operation, you create a

Compute Engine VM that can connect to the AlloyDB primary

instance where your database is located, and install the pg_restore tool and

the Google Cloud CLI on that VM.

Follow the instructions Connect a psql client to an instance to create a Compute Engine VM with the proper connectivity and the

pg_restoretool installed. When following these instructions, make sure to allocate enough local storage to the Compute Engine VM to accommodate the DMP file you are importing.Install the gcloud CLI to provide command-line access to the DMP file in the Cloud Storage bucket.

Import the DMP file

To import the DMP file, you get the IP address of the AlloyDB

primary instance where your database is located and then use the pg_restore

tool to import the file into the database.

- Get the IP address of the AlloyDB primary instance where your database is located by viewing its details.

- SSH into the Compute Engine VM.

Console

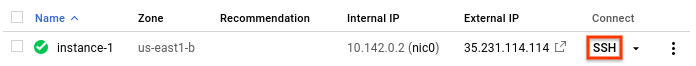

- In the Google Cloud console, go to the VM instances page.

- In the list of virtual machine instances, click SSH in

the row of the instance you created.

gcloud

Use the

gcloud compute sshcommand to connect to the instance you created.gcloud compute ssh --project=PROJECT_ID --zone=ZONE VM_NAME

Replace the following:

PROJECT_ID: The ID of the project that contains the instance.ZONE: The name of the zone in which the instance is located.VM_NAME: The name of the instance.

- Copy the DMP file to the client host's local file system:

gcloud storage cp gs://BUCKET_NAME/DMP_FILE_NAME .

- Run the following command to create a TOC file that comments out all

EXTENSIONstatements:pg_restore \ -l DMP_FILE_NAME | sed -E 's/(.* EXTENSION )/; \1/g' > TOC_FILE_NAME

DMP_FILE_NAME: The DMP file on the local file system.TOC_FILE_NAME: Provide a file name for the TOC file to create on the local file system.

- Import the DMP file:

pg_restore -h IP_ADDRESS -U postgres \ -d DB_NAME \ -L TOC_FILE_NAME \ DMP_FILE_NAME

IP_ADDRESS: The IP address of the primary instance.DB_NAME: The name of the database to import into.TOC_FILE_NAME: The TOC file you created in the previous step.DMP_FILE_NAME: The DMP file.

The

pg_restorecommand provides several additional options to control the data import operation.

Clean up resources

After successfully importing the DMP file, you can delete the Cloud Storage bucket and delete the Compute Engine VM you used during the import procedure.