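The Neural Architecture Search search space is key to achieving good performance.

It defines all the potential architectures or parameters to explore and search. Neural Architecture Search provides a set of default search spaces in the search_spaces.py file: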

- Mnasnet
- Efficientnet_v2
- Nasfpn
- Spinenet
- Spinenet_v2
- Spinenet_mbconv
- Spinenet_scaling
- Randaugment_detection
- Randaugment_segmentation
- AutoAugmentation_detection
- AutoAugmentation_segmentation

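In addition, we provide search space examples, including a Lidar search space for 3D point clouds and a PyTorch 3D medical image segmentation search space.

The Lidar notebook publishes its verification results in the notebook itself. The rest of the PyTorch search space code is meant as an example only, not for benchmarking.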

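Each search space has a specific use case:

- The MNasNet search space is used for image classification and object detection tasks and is based on the MobileNetV2 architecture.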

- The EfficientNetV2 search space is used for object detection tasks. EfficientNetV2 adds new operations such as Fused-MBConv. For details, see the EfficientNetV2 paper.

- The NAS-FPN search space is typically used for object detection. See the NAS-FPN search space section for a detailed description.

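The SpineNet family of search spaces includes spinenet, spinenet_v2, spinenet_mbconv, and spinenet_scaling. These are also typically used for object detection. See the SpineNet search space section for a detailed description.

- spinenet is the base search space in this family. It offers both residual and bottleneck block candidates during search.
- spinenet_v2 is a smaller version of spinenet that offers only bottleneck block candidates during search, which can help the search converge faster.
- spinenet_mbconv is a version of spinenet for mobile platforms. It uses mbconv block candidates during search.
- spinenet_scaling is typically used after a good architecture has been found with the spinenet search space, to scale that architecture up or down to meet latency requirements. This search is done over settings such as image size, number of filters, filter size, and number of block repeats.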

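The RandAugment and AutoAugment search spaces let you search for the best data augmentation operations for detection and for segmentation, respectively. Note: Data augmentation is typically used after a good model has already been searched. See the Data augmentation search space section for a detailed description.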

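The Lidar search space for 3D point clouds shows an end-to-end search over the featurizer, backbone, decoder, and detection head.

The PyTorch 3D medical image segmentation search space example shows a search over the UNet encoder and UNet decoder.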

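Most of the time, these default search spaces are sufficient. However, if needed, you can customize these existing search spaces or add a new one by using the PyGlove library. See the example code that specifies the NAS-FPN search space.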

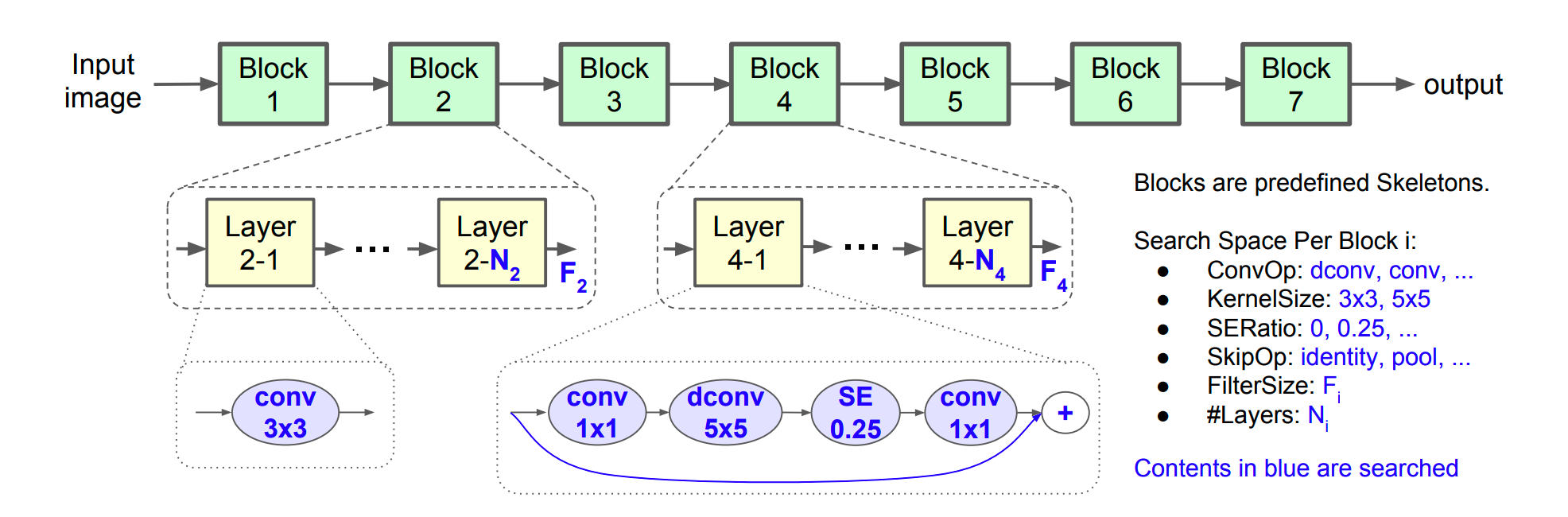

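MNasNet and EfficientNetV2 search space

The MNasNet and EfficientNetV2 search spaces define different backbone building options such as ConvOps, KernelSize, and ChannelSize. The backbone can be used for different tasks such as classification and detection.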

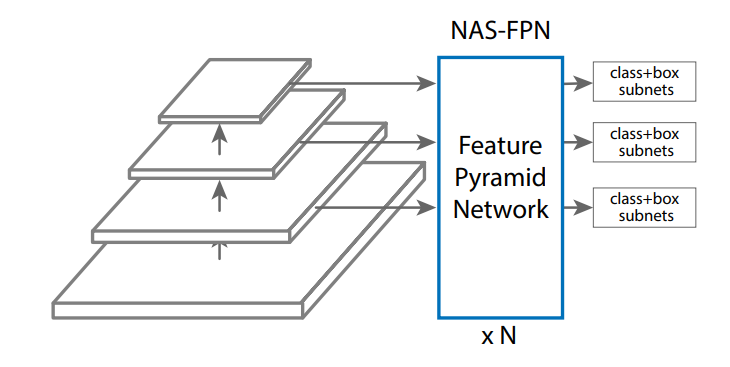
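
To make the idea of backbone building options more concrete, the following is a minimal sketch of a toy search space in plain Python. It is not the code in search_spaces.py: the dictionary keys, the candidate values, and the helper names `enumerate_block_choices` and `space_size` are all assumptions chosen for illustration. It only shows how a handful of per-block choices multiply into a large number of candidate backbones.

```python
import itertools

# Hypothetical candidate values for illustration only; the real search
# spaces define their own options for ConvOps, KernelSize, and ChannelSize.
SEARCH_SPACE = {
    "conv_op": ["mbconv", "fused_mbconv"],
    "kernel_size": [3, 5],
    "channel_size": [16, 24, 32],
}


def enumerate_block_choices(space):
    """Yield every combination of options for a single block as a dict."""
    keys = list(space)
    for values in itertools.product(*(space[key] for key in keys)):
        yield dict(zip(keys, values))


def space_size(space, num_blocks):
    """Count distinct backbones when each block picks its options independently."""
    per_block = 1
    for candidates in space.values():
        per_block *= len(candidates)
    return per_block ** num_blocks


choices = list(enumerate_block_choices(SEARCH_SPACE))
print(len(choices))                            # 12 options per block
print(space_size(SEARCH_SPACE, num_blocks=3))  # 1728 three-block backbones
```

Even this tiny example grows quickly with the number of blocks, which is why a search algorithm is used rather than exhaustive enumeration.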

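NAS-FPN search space

The NAS-FPN search space defines the search space in the FPN layers, which connect features at different levels for object detection, as shown in the following figure.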

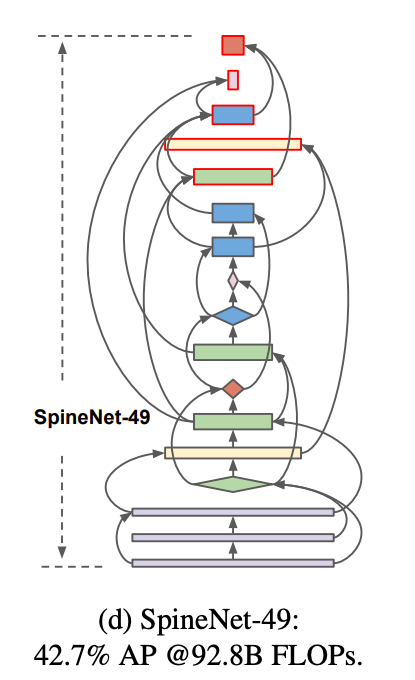

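SpineNet search space

The SpineNet search space lets you search for a backbone with scale-permuted intermediate features and cross-scale connections. It achieves state-of-the-art performance for one-stage object detectors on COCO with 60% less computation, and outperforms ResNet-FPN counterparts by 6% AP. The following are the connections of the backbone layers in the searched SpineNet-49 architecture.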

Data augmentation search space

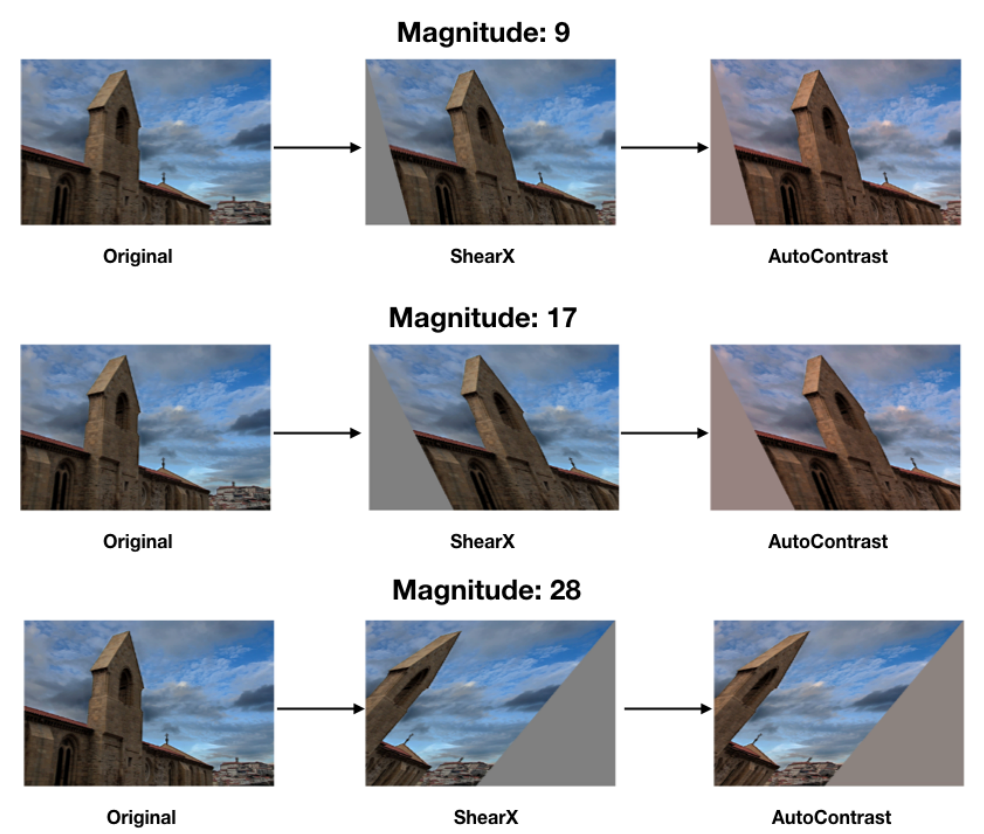

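After the best architecture has been searched, you can also search for the best data augmentation policy. Data augmentation can further improve the accuracy of the previously searched architecture.

The Neural Architecture Search platform provides RandAugment and AutoAugment augmentation search spaces for two tasks: (a) randaugment_detection for object detection and (b) randaugment_segmentation for segmentation. Internally, the platform chooses from a list of augmentation operations, such as auto-contrast, shear, or rotation, to apply to the training data.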

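RandAugment search space

The RandAugment search space is configured by two parameters: (a) N, the number of successive augmentation operations applied to an image, and (b) M, the magnitude of all of those operations. For example, the following image shows N=2 operations (Shear and Contrast) applied to an image with different values of the magnitude M.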

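For a given value of N, the list of operations is picked at random from the operation bank. The augmentation search finds the best values of N and M for the current training job. The search doesn't use a proxy task, so it runs the training jobs to completion.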
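
The role of N and M can be illustrated with a small, dependency-free sketch. This is not the platform's implementation: the operation bank, the per-operation magnitude scaling, and the function name `rand_augment` are assumptions made for illustration, and an image is represented as a plain list of pixel intensities in [0, 1].

```python
import random


# Hypothetical operation bank. Each operation takes (pixels, magnitude)
# and returns new pixels clamped to the range [0, 1].
def brightness(pixels, magnitude):
    return [min(1.0, p + 0.05 * magnitude) for p in pixels]


def contrast(pixels, magnitude):
    mean = sum(pixels) / len(pixels)
    factor = 1.0 + 0.1 * magnitude
    return [min(1.0, max(0.0, mean + factor * (p - mean))) for p in pixels]


def invert(pixels, magnitude):
    # Inversion ignores the magnitude; it is included to vary the bank.
    return [1.0 - p for p in pixels]


OPERATION_BANK = [brightness, contrast, invert]


def rand_augment(pixels, n, m, rng):
    """Apply n randomly chosen operations in sequence, all at magnitude m."""
    chosen = [rng.choice(OPERATION_BANK) for _ in range(n)]
    for operation in chosen:
        pixels = operation(pixels, m)
    return pixels, [operation.__name__ for operation in chosen]


rng = random.Random(0)  # fixed seed so repeated runs pick the same operations
augmented, applied = rand_augment([0.2, 0.5, 0.8], n=2, m=3, rng=rng)
print(applied, augmented)
```

In this sketch only two numbers, `n` and `m`, control the whole augmentation, which is what keeps the RandAugment search space small.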

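AutoAugment search space

The AutoAugment search space lets you search for the choice, magnitude, and probability of operations to optimize your model training. It also lets you search over the choices in the policy, which RandAugment doesn't support.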
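
The difference from RandAugment can be sketched as follows. This is a minimal illustration, assuming the commonly described AutoAugment formulation in which a policy is a list of sub-policies and each sub-policy is a sequence of (operation, probability, magnitude) entries. The operations, the example policy values, and the function name `apply_policy` are hypothetical and are not taken from the platform's code.

```python
import random


# Hypothetical operations on a list of pixel intensities in [0, 1].
def brightness(pixels, magnitude):
    return [min(1.0, p + 0.05 * magnitude) for p in pixels]


def invert(pixels, magnitude):
    # Inversion ignores the magnitude.
    return [1.0 - p for p in pixels]


OPERATIONS = {"brightness": brightness, "invert": invert}

# An illustrative policy: every entry is (operation name, probability, magnitude).
# In a search, these three values per entry are what gets tuned.
POLICY = [
    [("brightness", 0.8, 4), ("invert", 0.2, 0)],
    [("invert", 1.0, 0)],
]


def apply_policy(pixels, policy, rng):
    """Pick one sub-policy at random, then apply each entry with its probability."""
    sub_policy = rng.choice(policy)
    applied = []
    for name, probability, magnitude in sub_policy:
        if rng.random() < probability:
            pixels = OPERATIONS[name](pixels, magnitude)
            applied.append(name)
    return pixels, applied


rng = random.Random(0)
print(apply_policy([0.25, 0.75], POLICY, rng))
```

Here each operation carries its own probability and magnitude, so the space to search is much larger than the two-parameter (N, M) space in RandAugment.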