Step 3: Determine integration mechanism

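This page describes the third step of deploying Cortex Framework Data Foundation, the core of Cortex Framework. In this step, you configure the integration with your chosen data source. If you are using sample data, skip this step.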

Integration overview

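Cortex Framework helps you centralize data from various sources, along with data from other platforms, so that you have a single source of truth for your data. Cortex Data Foundation integrates with each data source differently, but most integrations follow a similar process: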

- Source to Raw layer: Ingest data from the data source into the raw dataset by using APIs. This is done with Dataflow pipelines triggered by Cloud Composer DAGs.

- Raw layer to CDC layer: Apply CDC processing to the raw dataset and store the output in the CDC dataset. This is done with Cloud Composer DAGs that run BigQuery SQL.

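- CDC layer to Reporting layer: Create the final reporting tables in the Reporting dataset from the CDC tables. Depending on how it is configured, this is done either by creating runtime views on top of the CDC tables or by running Cloud Composer DAGs that materialize the data in BigQuery tables. For more information about the configuration, see Customizing the reporting settings file.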
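
The Raw-to-CDC step can be illustrated with a small in-memory sketch. In Cortex Data Foundation this logic runs as BigQuery SQL inside Cloud Composer DAGs; the Python below only mimics the idea. It assumes that each raw record carries a primary key, a change timestamp (`recordstamp`), and an operation flag (`operation_flag`, where `D` marks a delete). These field names and the `apply_cdc` function are illustrative assumptions, not the framework's actual schema or code.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable


@dataclass
class RawRecord:
    key: str                # hypothetical primary key of the source row
    recordstamp: int        # hypothetical change timestamp (larger = newer)
    operation_flag: str     # hypothetical flag: "I" insert, "U" update, "D" delete
    payload: Dict[str, str] = field(default_factory=dict)  # column -> value


def apply_cdc(raw: Iterable[RawRecord]) -> Dict[str, RawRecord]:
    """Collapse a raw change log into the latest state per key.

    Keeps only the newest change for each key, then drops keys whose
    newest change is a delete.
    """
    latest: Dict[str, RawRecord] = {}
    for rec in raw:
        current = latest.get(rec.key)
        if current is None or rec.recordstamp > current.recordstamp:
            latest[rec.key] = rec
    # Keys whose most recent change is a delete disappear from the CDC table.
    return {k: r for k, r in latest.items() if r.operation_flag != "D"}


# Usage: two keys, one updated and one inserted then deleted.
raw_log = [
    RawRecord("1000", 1, "I", {"name": "Acme"}),
    RawRecord("1000", 2, "U", {"name": "Acme Corp"}),
    RawRecord("2000", 1, "I", {"name": "Globex"}),
    RawRecord("2000", 3, "D"),
]
cdc_table = apply_cdc(raw_log)
print({k: r.payload for k, r in cdc_table.items()})  # {'1000': {'name': 'Acme Corp'}}
```

In SQL, the same "keep the newest change per key, then apply deletes" idea is typically expressed as a deduplicating subquery feeding a `MERGE` into the CDC table.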

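The config.json file holds the settings required to connect to your data sources and transfer data from the various workloads. For the integration options available for each data source, see the following resources.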

- Operational:

- Marketing:

- Sustainability:
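
Because config.json determines which integrations are deployed, a quick sanity check of the file before deployment can catch mistakes early. The sketch below assumes a simplified layout with top-level boolean flags (`deploySAP`, `deploySFDC`, `deployMarketing`) and source and target project IDs. These keys are modeled loosely on the Data Foundation repository but should be treated as assumptions; check the config.json in your own checkout. The helpers `enabled_workloads` and `validate` are hypothetical.

```python
import json
from typing import List

# Hypothetical, trimmed-down config.json content. The real file contains
# many more settings; key names here are assumptions for illustration.
SAMPLE_CONFIG = """
{
  "testData": false,
  "deploySAP": true,
  "deploySFDC": false,
  "deployMarketing": true,
  "projectIdSource": "my-source-project",
  "projectIdTarget": "my-target-project"
}
"""

# Assumed mapping from deploy flag to workload name.
WORKLOAD_FLAGS = {
    "deploySAP": "SAP",
    "deploySFDC": "Salesforce",
    "deployMarketing": "Marketing",
}


def enabled_workloads(config: dict) -> List[str]:
    """Return the workloads whose deploy flag is explicitly true."""
    return [name for flag, name in WORKLOAD_FLAGS.items() if config.get(flag) is True]


def validate(config: dict) -> None:
    """Raise ValueError if a required project ID is missing or empty."""
    for key in ("projectIdSource", "projectIdTarget"):
        if not config.get(key):
            raise ValueError(f"config.json is missing '{key}'")


config = json.loads(SAMPLE_CONFIG)
validate(config)
print(enabled_workloads(config))  # ['SAP', 'Marketing']
```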

For more information about the entity-relationship diagrams supported by each data source, see the docs folder in the Cortex Framework Data Foundation repository.

K9 deployment

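The K9 deployer simplifies the integration of diverse data sources. The K9 deployer is a predefined dataset in the BigQuery environment that is responsible for ingesting, processing, and modeling components that are reusable across different data sources.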

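For example, the time dimension is reusable across all data sources whose tables might need analytical results based on the Gregorian calendar. The K9 deployer also combines external data, such as weather or Google Trends, with other data sources (for example, SAP, Salesforce, and Marketing). This enriched dataset enables deeper insights and more comprehensive analysis.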
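
To illustrate why a time dimension is reusable, here is a minimal sketch that generates one Gregorian-calendar row per day, which any workload could join to its own tables by date. The column names (`date_key`, `iso_week`, and so on) and the `build_date_dimension` function are hypothetical; the actual K9 time dimension is built in BigQuery and its schema may differ.

```python
from datetime import date, timedelta
from typing import Dict, List


def build_date_dimension(start: date, end: date) -> List[Dict[str, object]]:
    """Generate one row per calendar day in [start, end], inclusive."""
    rows: List[Dict[str, object]] = []
    day = start
    while day <= end:
        _, iso_week, iso_weekday = day.isocalendar()
        rows.append({
            "date_key": int(day.strftime("%Y%m%d")),  # e.g. 20240229
            "date": day,
            "year": day.year,
            "quarter": (day.month - 1) // 3 + 1,
            "month": day.month,
            "day_of_month": day.day,
            "iso_week": iso_week,
            "iso_weekday": iso_weekday,  # Monday = 1 ... Sunday = 7
            "is_weekend": iso_weekday >= 6,
        })
        day += timedelta(days=1)
    return rows


# Usage: a short range that crosses the 2024 leap day.
dim = build_date_dimension(date(2024, 2, 27), date(2024, 3, 2))
for row in dim:
    print(row["date_key"], row["quarter"], row["iso_week"], row["is_weekend"])
```

Computing calendar attributes once and sharing them avoids each workload reimplementing quarter, week, and weekend logic in slightly different ways.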

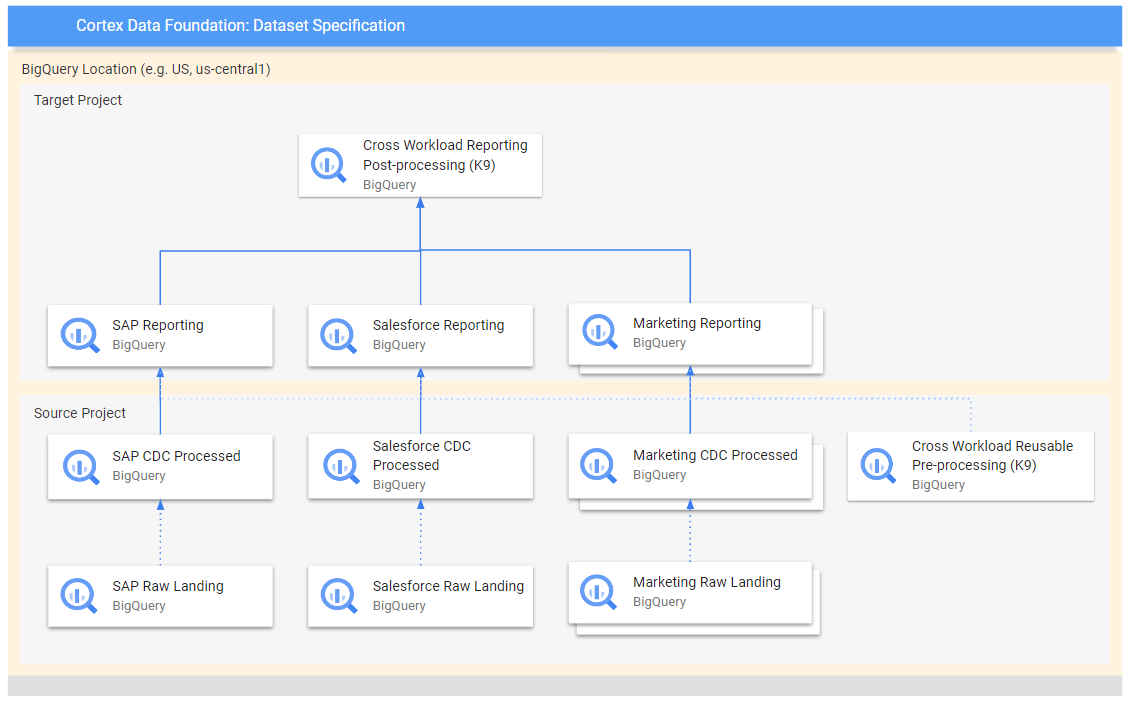

下图显示了数据从不同原始来源流向各个报告层的过程:

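In the diagram, the source project contains the raw data from the chosen data sources (SAP, Salesforce, and Marketing), while the target project contains processed data derived from the change data capture (CDC) process.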

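The pre-processing K9 step runs before any workload starts its deployment, so the reusable models are available while the workloads deploy. This step transforms data from various sources to create a consistent, reusable dataset.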

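The post-processing K9 step runs after all workloads have deployed their reporting models. It enables cross-workload reporting or augments models, finding the dependencies it needs in each individual reporting dataset.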
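
The ordering described above (pre-processing K9, then the workloads, then post-processing K9) can be sketched as a simple plan builder. The function name, step names, and plan format are hypothetical; the actual Cortex deployment is orchestrated by its own build scripts, not by this code. The sketch also checks that each post-processing step depends only on workloads that are actually deployed, mirroring the requirement that post-processing finds its dependencies in the reporting datasets.

```python
from typing import Dict, List, Set


def build_deployment_plan(
    pre_k9: List[str],
    workloads: List[str],
    post_k9: Dict[str, Set[str]],
) -> List[str]:
    """Return an ordered list of deployment steps.

    pre_k9:    K9 pre-processing steps, run before any workload.
    workloads: workload names; each is assumed to produce a reporting dataset.
    post_k9:   post-processing step -> workloads whose reporting datasets it needs.
    """
    deployed = set(workloads)
    for step, deps in post_k9.items():
        missing = deps - deployed
        if missing:
            raise ValueError(f"{step} depends on undeployed workloads: {sorted(missing)}")
    plan = [f"pre_k9:{s}" for s in pre_k9]
    plan += [f"workload:{w}" for w in workloads]
    plan += [f"post_k9:{s}" for s in post_k9]
    return plan


# Usage with hypothetical step names.
plan = build_deployment_plan(
    pre_k9=["time_dimension"],
    workloads=["SAP", "Marketing"],
    post_k9={"cross_workload_report": {"SAP", "Marketing"}},
)
print(plan)
```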

Configure the K9 deployment

Configure the directed acyclic graphs (DAGs) and models to be generated in the K9 manifest file.
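
As a rough illustration, you can think of the manifest as a list of K9 entries, each tagged with the stage it runs in. The sketch below represents a manifest as Python data, validates it, and groups the entries into pre-processing and post-processing stages. The field names (`id`, `stage`, `dag`), the entry IDs, and the validation rules are assumptions for illustration only; the real K9 manifest file in the Data Foundation repository has its own format, so consult it before relying on any field name.

```python
from typing import Dict, List

# Hypothetical manifest entries; field names and IDs are illustrative assumptions.
MANIFEST = [
    {"id": "time_dimension", "stage": "pre", "dag": True},
    {"id": "weather", "stage": "pre", "dag": True},
    {"id": "cross_media", "stage": "post", "dag": False},
]

VALID_STAGES = {"pre", "post"}


def split_manifest(entries: List[Dict[str, object]]) -> Dict[str, List[str]]:
    """Validate entries and group their IDs by stage."""
    seen = set()
    grouped: Dict[str, List[str]] = {stage: [] for stage in sorted(VALID_STAGES)}
    for entry in entries:
        entry_id = str(entry["id"])
        stage = str(entry["stage"])
        if entry_id in seen:
            raise ValueError(f"duplicate K9 id: {entry_id}")
        if stage not in VALID_STAGES:
            raise ValueError(f"{entry_id}: unknown stage {stage!r}")
        seen.add(entry_id)
        grouped[stage].append(entry_id)
    return grouped


def dags_to_generate(entries: List[Dict[str, object]]) -> List[str]:
    """Return the IDs of entries that request a generated DAG."""
    return [str(e["id"]) for e in entries if e.get("dag")]


print(split_manifest(MANIFEST))
print(dags_to_generate(MANIFEST))
```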

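The K9 pre-processing step is important because it ensures that all workloads in the data pipeline have access to consistently prepared data. This reduces redundancy and ensures data consistency.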

For more information about how to configure external datasets for K9, see Configure external datasets for K9.

Next steps

After you complete this step, move on to the following deployment steps: