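This page explains how to export metadata from Dataproc Metastore.

The export metadata feature lets you save your metadata in a portable storage format.

After you export your data, you can import the metadata into another Dataproc Metastore service or a self-managed Hive Metastore (HMS).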

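About exporting metadata

When you export metadata from Dataproc Metastore, the service stores the data in one of the following file formats:

- A set of Avro files stored in a folder.

- A single MySQL dump file stored in a Cloud Storage folder.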

Avro

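Avro-based exports are supported only for Hive versions 2.3.6 and 3.1.2. When you export Avro files, Dataproc Metastore creates a <table-name>.avro file for each table in your database.

To export Avro files, your Dataproc Metastore service can use either the MySQL or the Spanner database type.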

MySQL

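MySQL-based exports are supported for all Hive versions. When you export MySQL files, Dataproc Metastore creates a single SQL file that contains all of your table information.

To export MySQL files, your Dataproc Metastore service must use the MySQL database type. The Spanner database type doesn't support MySQL imports.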
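The format rules above can be summarized in a small helper. The following is a minimal sketch, assuming a hypothetical function named supported_dump_types and lowercase labels (mysql, spanner) chosen for illustration. It only encodes the constraints stated in this section and doesn't call any Google Cloud API.

```shell
# supported_dump_types HIVE_VERSION DATABASE_TYPE
# Prints the dump types that an export can use, separated by spaces.
# DATABASE_TYPE is "mysql" or "spanner" (labels assumed for this sketch).
supported_dump_types() {
  types=""

  # MySQL dumps work for every Hive version, but only on MySQL-backed services.
  if [ "$2" = "mysql" ]; then
    types="mysql"
  fi

  # Avro dumps work for both database types, but only on Hive 2.3.6 and 3.1.2.
  case "$1" in
    2.3.6|3.1.2) types="${types:+$types }avro" ;;
  esac

  echo "$types"
}
```

For example, calling supported_dump_types 3.1.2 spanner prints only avro, because a Spanner-backed service can't produce a MySQL dump.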

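Before you begin

Required roles

To get the permissions that you need to export metadata from Dataproc Metastore, ask your administrator to grant you the following IAM roles: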

- To export metadata, any one of the following:

  - Dataproc Metastore Editor (roles/metastore.editor) on the Dataproc Metastore service

  - Dataproc Metastore Administrator (roles/metastore.admin) on the Dataproc Metastore service

  - Dataproc Metastore Metadata Operator (roles/metastore.metadataOperator) on the Dataproc Metastore service

- For MySQL and Avro, to use the Cloud Storage object for export: grant your user account and the Dataproc Metastore service agent the Storage Object Creator role (roles/storage.objectCreator) on the Cloud Storage bucket

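For more information about granting roles, see Manage access to projects, folders, and organizations.

These predefined roles contain the permissions required to export metadata from Dataproc Metastore. To see the exact permissions that are required, expand the Required permissions section: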

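Required permissions

The following permissions are required to export metadata from Dataproc Metastore:

- To export metadata: metastore.services.export on the Dataproc Metastore service

- For MySQL and Avro, to use the Cloud Storage object for export, grant your user account and the Dataproc Metastore service agent: storage.objects.create on the Cloud Storage bucket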
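The role grants described in this section could be scripted roughly as follows. This is a sketch under stated assumptions, not an official procedure: the function names, the DRY_RUN switch, and all argument values are illustrative, and the service agent address format (service-PROJECT_NUMBER@gcp-sa-metastore.iam.gserviceaccount.com) is an assumption that you should confirm for your project before granting anything.

```shell
# run: execute a command, or only print it when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# grant_export_roles SERVICE LOCATION BUCKET USER_EMAIL PROJECT_NUMBER
grant_export_roles() {
  service="$1"; location="$2"; bucket="$3"; user="$4"; project_number="$5"

  # Assumed service agent address format; verify it for your project.
  agent="service-${project_number}@gcp-sa-metastore.iam.gserviceaccount.com"

  # Metadata Operator is one of the three roles that allow exports.
  run gcloud metastore services add-iam-policy-binding "$service" \
    --location="$location" \
    --member="user:${user}" \
    --role="roles/metastore.metadataOperator"

  # Both the user and the service agent need to create objects in the bucket.
  for member in "user:${user}" "serviceAccount:${agent}"; do
    run gcloud storage buckets add-iam-policy-binding "gs://${bucket}" \
      --member="$member" \
      --role="roles/storage.objectCreator"
  done
}
```

Running the function with DRY_RUN=1 prints the three gcloud commands without changing any IAM policy, which makes it easier to review the bindings first.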

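Export metadata

Before you export your metadata, note the following considerations:

- While an export is running, you can't update the Dataproc Metastore service, for example by changing its configuration settings. However, you can still use it for normal operations, such as accessing its metadata from attached Dataproc clusters or self-managed clusters.

- The metadata export feature exports only metadata. Data created by Apache Hive in internal tables isn't replicated in the export.

To export metadata from a Dataproc Metastore service, perform the following steps.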

控制台

在 Google Cloud 控制台中,打开 Dataproc Metastore 页面:

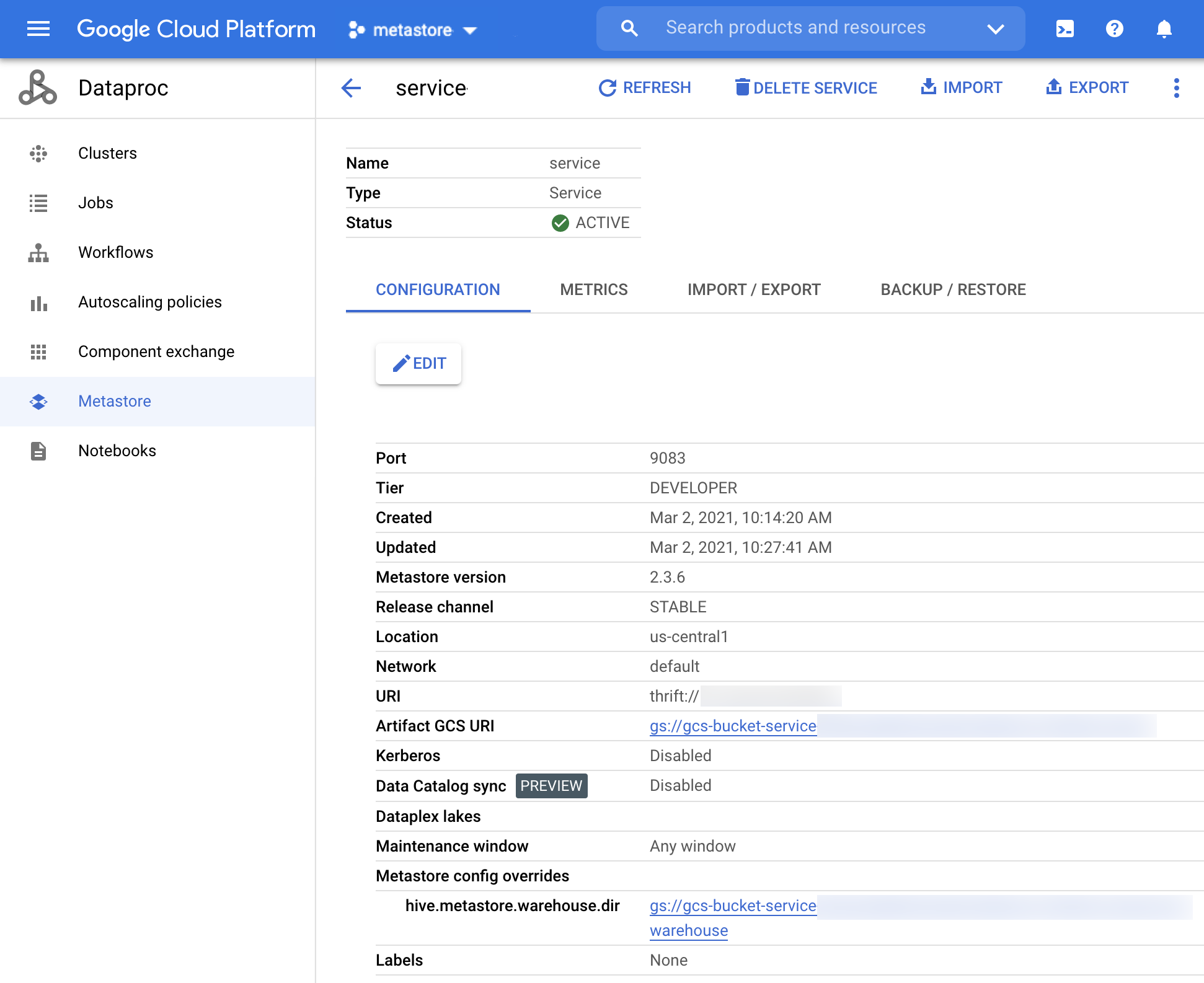

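On the Dataproc Metastore page, click the name of the service that you want to export metadata from.

The Service detail page opens.

In the navigation bar, click Export.

The Export metadata page opens.

In the Destination section, select either MySQL or Avro.

In the Destination URI field, click Browse and select the Cloud Storage URI that you want to export your files to.

You can also enter your bucket location in the provided text field. Use the following format: bucket/object or bucket/folder/object.

To start the export, click Submit.

When finished, your export appears in a table on the Import/Export tab of the Service detail page.

When the export completes, Dataproc Metastore automatically returns to the active state, regardless of whether the export succeeded.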

gcloud CLI

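To export metadata from a service, run the following gcloud metastore services export gcs command:

    gcloud metastore services export gcs SERVICE \
        --location=LOCATION \
        --destination-folder=gs://bucket-name/path/to/folder \
        --dump-type=DUMP_TYPE

Replace the following:

- SERVICE: the name of your Dataproc Metastore service.

- LOCATION: the Google Cloud region in which your Dataproc Metastore service resides.

- bucket-name/path/to/folder: the Cloud Storage destination folder where you want to store your export.

- DUMP_TYPE: the type of database dump generated by the export. Accepted values are mysql and avro. The default value is mysql.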

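Verify that the export was successful.

When the export completes, Dataproc Metastore automatically returns to the active state, regardless of whether the export succeeded.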
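If you run exports from a script, it can help to check the arguments before calling gcloud. The following wrapper is a minimal sketch: the function name export_metastore and the DRY_RUN switch are hypothetical, and the only rules it enforces are the ones stated above (the destination is a gs:// folder, and the dump type is mysql or avro with mysql as the default).

```shell
# export_metastore SERVICE LOCATION DESTINATION_FOLDER [DUMP_TYPE]
# Validates the arguments, then runs the export command.
# Set DRY_RUN=1 to print the command instead of running it.
export_metastore() {
  service="$1"; location="$2"; destination="$3"
  dump_type="${4:-mysql}"   # mysql is the documented default

  case "$dump_type" in
    mysql|avro) ;;
    *) echo "unsupported dump type: $dump_type" >&2; return 1 ;;
  esac

  case "$destination" in
    gs://*) ;;
    *) echo "destination must start with gs://" >&2; return 1 ;;
  esac

  # Assemble the command as positional parameters to keep quoting intact.
  set -- gcloud metastore services export gcs "$service" \
    --location="$location" \
    --destination-folder="$destination" \
    --dump-type="$dump_type"

  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}
```

For example, DRY_RUN=1 export_metastore my-service us-central1 gs://my-bucket/exports avro prints the full command without starting an export. The service, region, and bucket names here are placeholders.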

REST

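Follow the API instructions to export metadata from a service by using the APIs Explorer.

When the export completes, the service automatically returns to the active state, regardless of whether the export succeeded.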
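Outside the APIs Explorer, the same request can be sent with curl. The following is a sketch rather than a reference: the endpoint path (the v1 exportMetadata method) and the field names destinationGcsFolder and databaseDumpType are written from memory of the Dataproc Metastore API and should be checked against the API reference. The function names are hypothetical.

```shell
# export_request_body DESTINATION_FOLDER [DUMP_TYPE]
# Prints the JSON body for an export request.
export_request_body() {
  # The API enum values are assumed to be upper case (MYSQL, AVRO).
  dump_type="$(printf '%s' "${2:-mysql}" | tr '[:lower:]' '[:upper:]')"
  printf '{"destinationGcsFolder": "%s", "databaseDumpType": "%s"}\n' "$1" "$dump_type"
}

# export_via_rest PROJECT LOCATION SERVICE DESTINATION_FOLDER [DUMP_TYPE]
# Sends the request, authenticating with the active gcloud account.
export_via_rest() {
  url="https://metastore.googleapis.com/v1/projects/$1/locations/$2/services/$3:exportMetadata"
  curl --fail --silent --show-error -X POST \
    -H "Authorization: Bearer $(gcloud auth print-access-token)" \
    -H "Content-Type: application/json" \
    -d "$(export_request_body "$4" "$5")" \
    "$url"
}
```

Keeping the body builder separate from the network call lets you inspect the JSON before anything is sent.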

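View export history

To view the export history of a Dataproc Metastore service in the Google Cloud console, complete the following steps:

- In the Google Cloud console, open the Dataproc Metastore page.

- In the navigation bar, click Import/Export.

Your export history appears in the Export history table.

The history displays up to the last 25 exports.

Deleting a Dataproc Metastore service also deletes all associated export history.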

Troubleshoot common issues

For help resolving common issues, see Import and export error scenarios.