Change Data Capture (CDC) processing

This page guides you through Change Data Capture (CDC) in Google Cloud Cortex Framework for BigQuery. BigQuery is designed to store and analyze new data efficiently.

CDC process

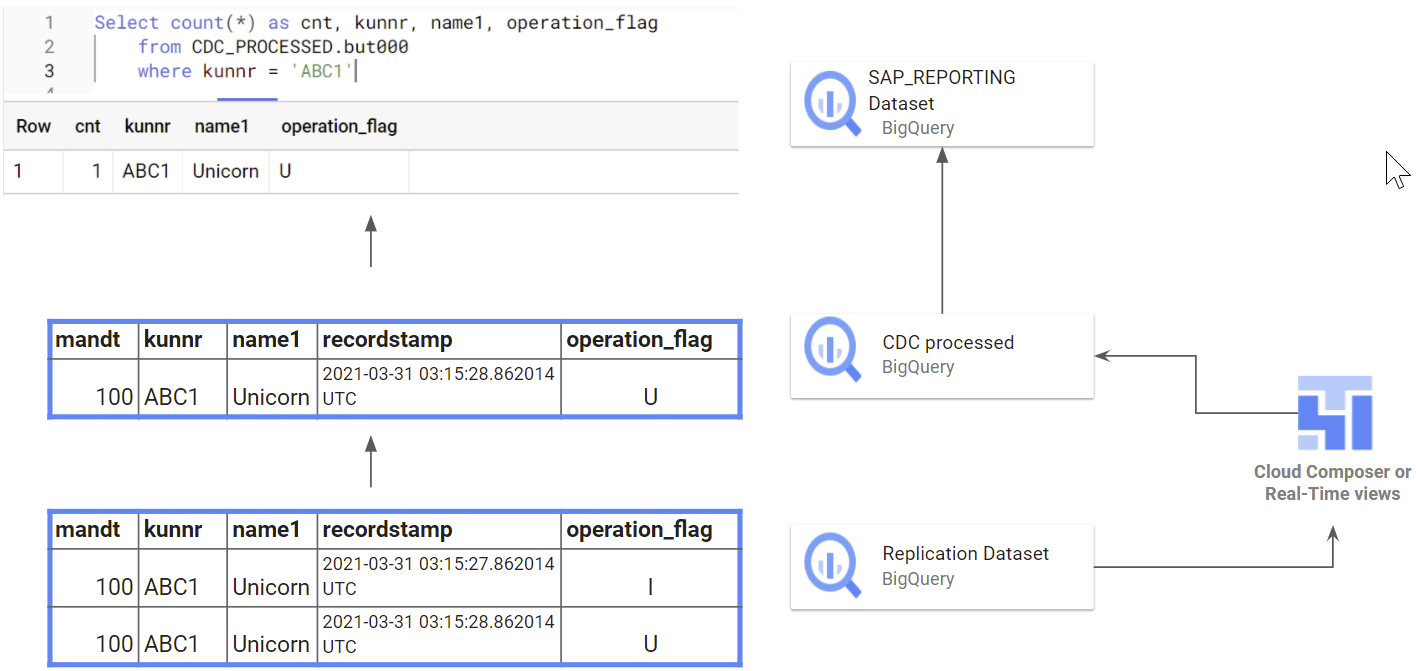

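When data changes in a source system (for example, SAP), BigQuery doesn't modify the existing records. Instead, the updated information is added as a new record. To avoid duplicates, a merge operation must be applied afterwards. This process is called Change Data Capture (CDC) processing.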

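Data Foundation for SAP includes an option to create scripts for Cloud Composer or Apache Airflow that merge or upsert the new records produced by updates, and keep only the latest version in a new dataset. For these scripts to work, tables need to contain some specific fields: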

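- operation_flag: This flag tells the script whether a record was inserted, updated, or deleted. It indicates whether the record was:
  - Inserted (I)
  - Updated (U)
  - Deleted (D)
- recordstamp: This timestamp helps identify the latest version of a record.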
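The merge logic that these two fields enable can be sketched in a few lines of Python. This is only an in-memory illustration, not the script that Cortex Framework generates. The function name `apply_cdc`, the key column `matnr`, and the sample rows are assumptions made for the example.

```python
from datetime import datetime

def apply_cdc(records, key_fields):
    """Collapse an append-only change log to the latest state per key.

    Hypothetical helper: for each key, keep the record with the highest
    recordstamp, then drop keys whose latest operation_flag is 'D'.
    """
    latest = {}
    for rec in records:
        key = tuple(rec[f] for f in key_fields)
        kept = latest.get(key)
        # ">=" lets a later row in the input win when recordstamps tie.
        if kept is None or rec["recordstamp"] >= kept["recordstamp"]:
            latest[key] = rec
    # A key whose most recent change is a delete is absent from the result.
    return [r for r in latest.values() if r["operation_flag"] != "D"]

# Illustrative change log: A1 is inserted then updated, B2 is inserted then deleted.
changes = [
    {"matnr": "A1", "descr": "Bolt", "operation_flag": "I",
     "recordstamp": datetime(2024, 1, 1)},
    {"matnr": "A1", "descr": "Bolt M8", "operation_flag": "U",
     "recordstamp": datetime(2024, 1, 2)},
    {"matnr": "B2", "descr": "Nut", "operation_flag": "I",
     "recordstamp": datetime(2024, 1, 1)},
    {"matnr": "B2", "descr": "Nut", "operation_flag": "D",
     "recordstamp": datetime(2024, 1, 3)},
]

current = apply_cdc(changes, ["matnr"])
print([r["descr"] for r in current])  # ['Bolt M8']
```

Only the updated version of A1 survives: its older insert is superseded, and B2 is removed because its latest change is a delete.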

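With CDC processing, your BigQuery data accurately reflects the latest state of the source system. This eliminates duplicate entries and provides a reliable foundation for data analysis.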

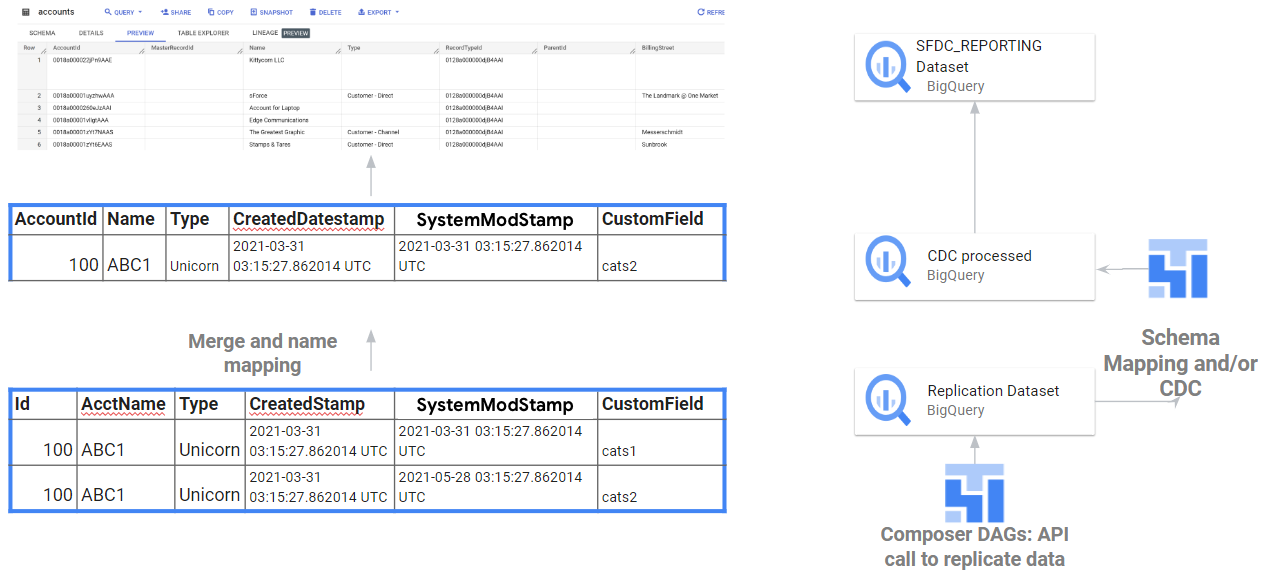

Dataset structure

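For all supported data sources, data from upstream systems is first replicated into a BigQuery dataset (the source or replicated dataset), and the updated or merged results are then inserted into another dataset (the CDC dataset). Reporting views select data from the CDC dataset, so that reporting tools and applications always use the latest version of a table.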
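The move from the replicated dataset to the CDC dataset can be expressed as a BigQuery `MERGE` statement. The sketch below builds one as a string. It is not the SQL that Cortex Framework generates. The helper `build_merge_sql`, the project and dataset names, and the SAP-style column names are assumptions for illustration. For brevity it deduplicates the whole raw table on every run, where a production script would typically restrict the scan to new rows.

```python
def build_merge_sql(raw_table, cdc_table, keys, columns):
    """Return a BigQuery MERGE statement (hypothetical helper).

    raw_table / cdc_table: fully qualified table names.
    keys: key columns that identify a record.
    columns: all columns to carry into the CDC table (keys included).
    """
    non_keys = [c for c in columns if c not in keys]
    if not keys or not non_keys:
        raise ValueError("need at least one key and one non-key column")

    partition_by = ", ".join(f"`{k}`" for k in keys)
    on_clause = " AND ".join(f"T.`{k}` = S.`{k}`" for k in keys)
    update_set = ", ".join(f"`{c}` = S.`{c}`" for c in non_keys)
    insert_cols = ", ".join(f"`{c}`" for c in columns)
    insert_vals = ", ".join(f"S.`{c}`" for c in columns)

    return "\n".join([
        f"MERGE `{cdc_table}` AS T",
        "USING (",
        # Keep only the newest raw row per key, ranked by recordstamp.
        "  SELECT * EXCEPT (row_num) FROM (",
        "    SELECT *, ROW_NUMBER() OVER (",
        f"      PARTITION BY {partition_by} ORDER BY recordstamp DESC",
        "    ) AS row_num",
        f"    FROM `{raw_table}`",
        "  ) WHERE row_num = 1",
        ") AS S",
        f"ON {on_clause}",
        # Deletes remove the row, other changes overwrite it or insert it.
        "WHEN MATCHED AND S.operation_flag = 'D' THEN DELETE",
        f"WHEN MATCHED THEN UPDATE SET {update_set}",
        "WHEN NOT MATCHED AND S.operation_flag != 'D' THEN",
        f"  INSERT ({insert_cols}) VALUES ({insert_vals})",
    ])

# Illustrative names only; substitute your own project, datasets, and table.
sql = build_merge_sql(
    raw_table="my-project.raw_dataset.mara",
    cdc_table="my-project.cdc_dataset.mara",
    keys=["mandt", "matnr"],
    columns=["mandt", "matnr", "mtart", "recordstamp"],
)
print(sql)
```

Reporting views would then read from the CDC table, which holds one row per key.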

The following diagram shows how CDC processing works for SAP, which depends on operation_flag and recordstamp.

The following diagram depicts the integration from APIs into raw data and CDC processing for Salesforce, which depends on the Id and SystemModStamp fields produced by Salesforce APIs.
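For Salesforce, the same idea can be sketched with Id as the key and SystemModStamp as the ordering field. The helper `latest_by_id`, the sample rows, and the timestamp format (an ISO 8601 string with a `+0000` offset) are assumptions for illustration, not the Cortex implementation.

```python
from datetime import datetime

# Assumed timestamp layout, for example "2024-02-01T09:30:00.000+0000".
SFDC_TS_FORMAT = "%Y-%m-%dT%H:%M:%S.%f%z"

def latest_by_id(raw_rows):
    """Keep, for each Id, the row with the most recent SystemModStamp."""
    latest = {}
    for row in raw_rows:
        stamp = datetime.strptime(row["SystemModStamp"], SFDC_TS_FORMAT)
        kept = latest.get(row["Id"])
        if kept is None or stamp >= kept[0]:
            latest[row["Id"]] = (stamp, row)
    return [row for _, row in latest.values()]

# Illustrative raw rows: account 001A appears twice with different stamps.
rows = [
    {"Id": "001A", "Name": "Acme",
     "SystemModStamp": "2024-01-01T08:00:00.000+0000"},
    {"Id": "001A", "Name": "Acme Corp",
     "SystemModStamp": "2024-02-01T09:30:00.000+0000"},
    {"Id": "001B", "Name": "Globex",
     "SystemModStamp": "2024-01-15T12:00:00.000+0000"},
]

latest_rows = latest_by_id(rows)
print(sorted(r["Name"] for r in latest_rows))  # ['Acme Corp', 'Globex']
```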

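Some replication tools can merge or upsert records as they insert them into BigQuery, so generating these scripts is optional. In that case, the setup has only one dataset, and the reporting dataset reads the updated records from that dataset.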